YOU® MLOps Platform

Let's build an MLOps Platform tailored to YOU!

- Fully customized to your needs

- Integrated with your infrastructure

- Any cloud / hybrid / on-premises

- No vendor lock-in

- Fast time to market

So you can focus on business and gain a competitive advantage.

Our clients & their benefits

Machine Learning Challenges

We’re stuck at a dead-end with MLOps, we have a team of analysts with low technical skills supplemented by engineers who don't get how ML works.

That is how one of our clients described why they need to build THEI® MLOps Platform with us.

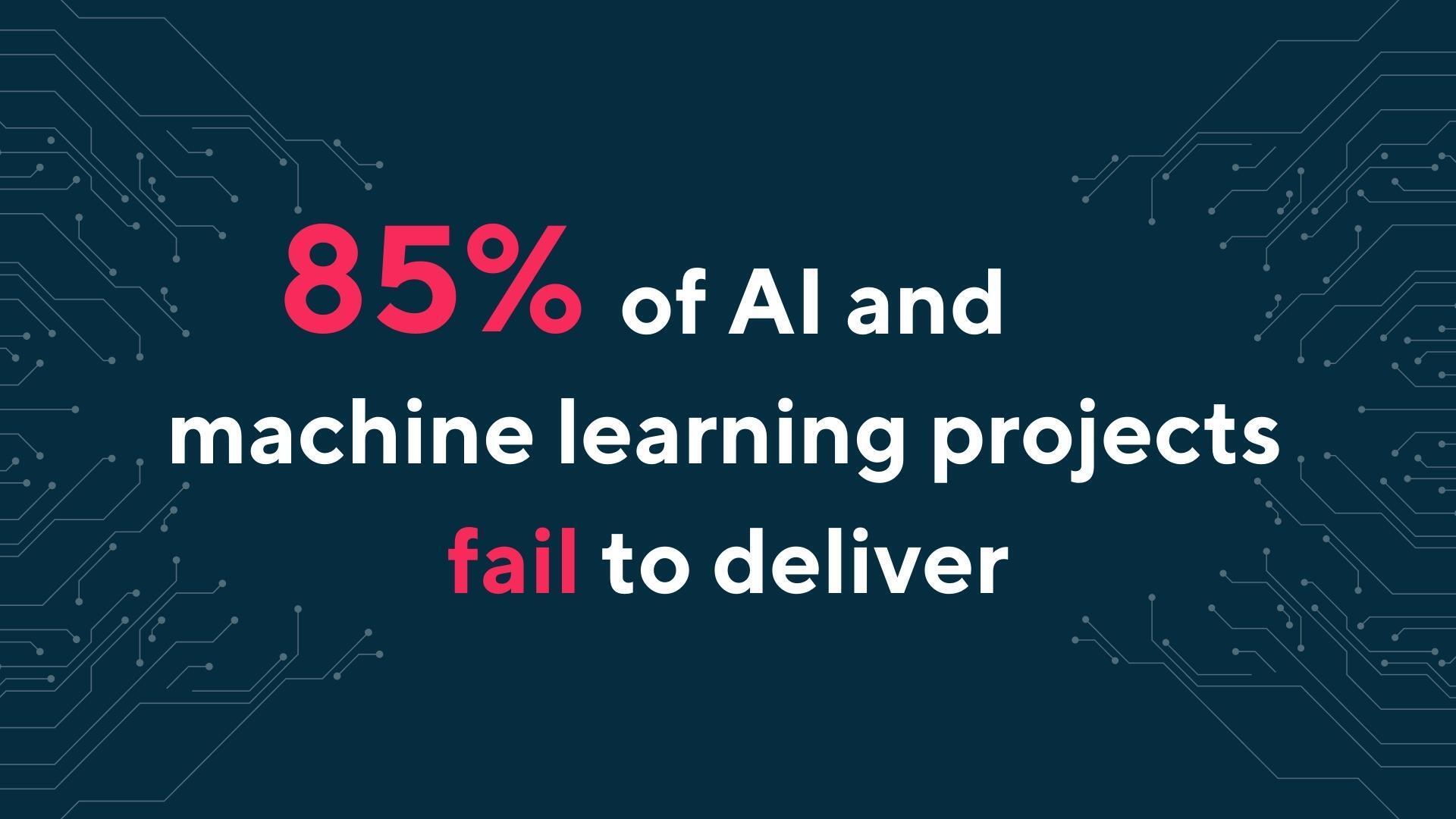

Project fails

High rate of ML projects that are not delivered

Time, cost and resource consuming

Even when companies take an ML project to production, it requires a lot of time and resources.

Wasted business potential

According to the Gartner report, by 2025 the 10% of enterprises that establish AI engineering best practices will generate at least three times more value from their AI efforts than the 90% of enterprises that do not.

Need help in dealing with these challenges?

See how our YOU® MLOps Platform solves them!

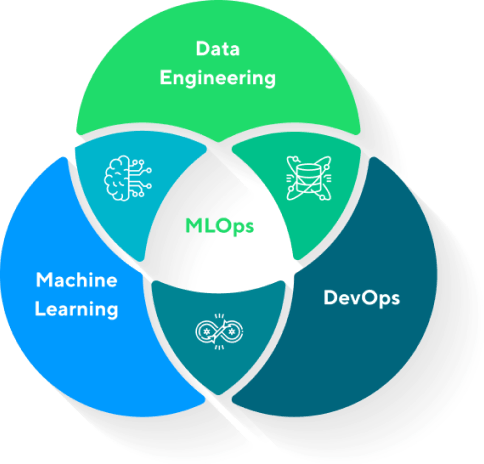

What is Machine Learning Operations (MLOps)?

The role of MLOps is to assess how ML is used in production and to make the process as efficient as possible, either by improving the existing system or by creating a new, personalized one that works well in real-life scenarios. In this way the company can reach its full potential in the market by making accurate, data-driven decisions and defining trends at the same time.

MLOps is a set of best practices that address ML challenges. These practices aim to deploy and maintain Machine Learning models in production reliably and efficiently.

What is the YOU® MLOps Platform?

The MLOps Platform is a framework for implementing the best practices of Machine Learning Operations (MLOps) and making the process of Machine Learning experimentation, model training and model serving efficient, secure and reliable.

It's not a product available from tomorrow that you have to wrestle with and configure to suit you. It's a personalized solution tailored to your needs and to your company's existing processes, solutions and technologies. The product is the final result of researching your needs and use cases, a technical assessment and the joint work of the combined team on the solution, until a fully customized and optimized platform is created that you can handle on your own.

We build a custom best-of-breed solution instead of taking a one-size-fits-all approach, and we support the top cloud providers (Amazon Web Services, Microsoft Azure and Google Cloud Platform).

“Under the hood, our MLOps platform combines all the disciplines required to take your Machine Learning out of the labs and continuously deploy it to production on a large scale.”

How does the YOU® MLOps Platform work?

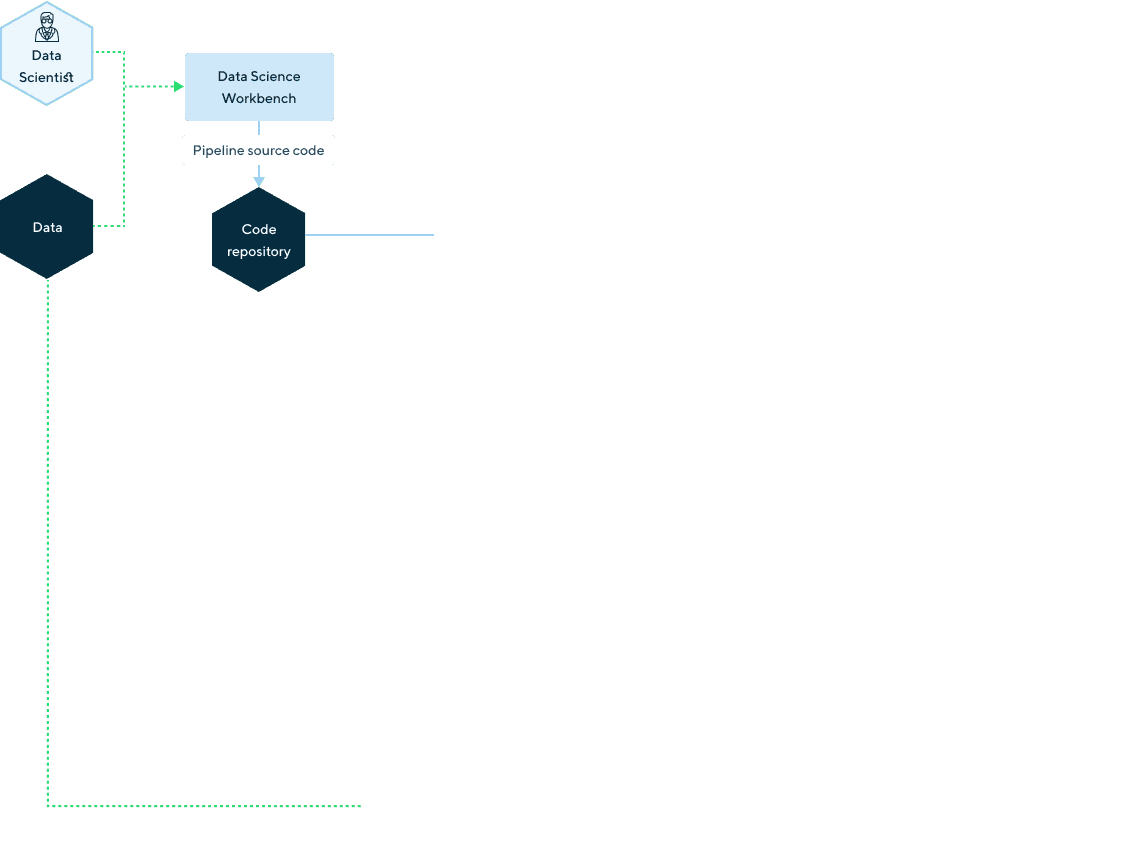

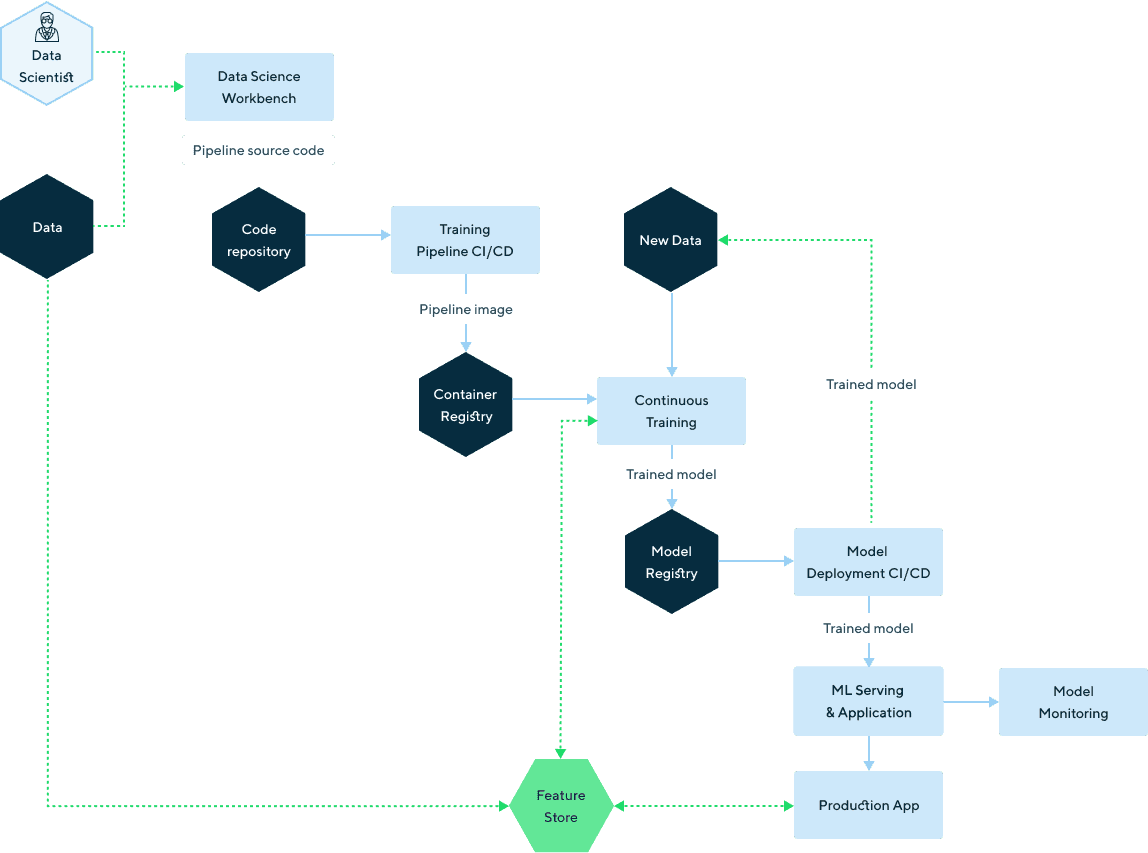

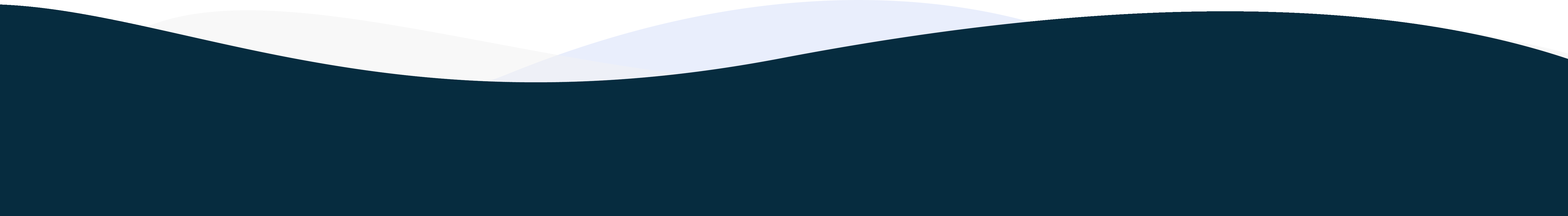

A typical Machine Learning project has 3 phases:

Experimentation - where the data science teams do their research and look for the most suitable method of solving a problem

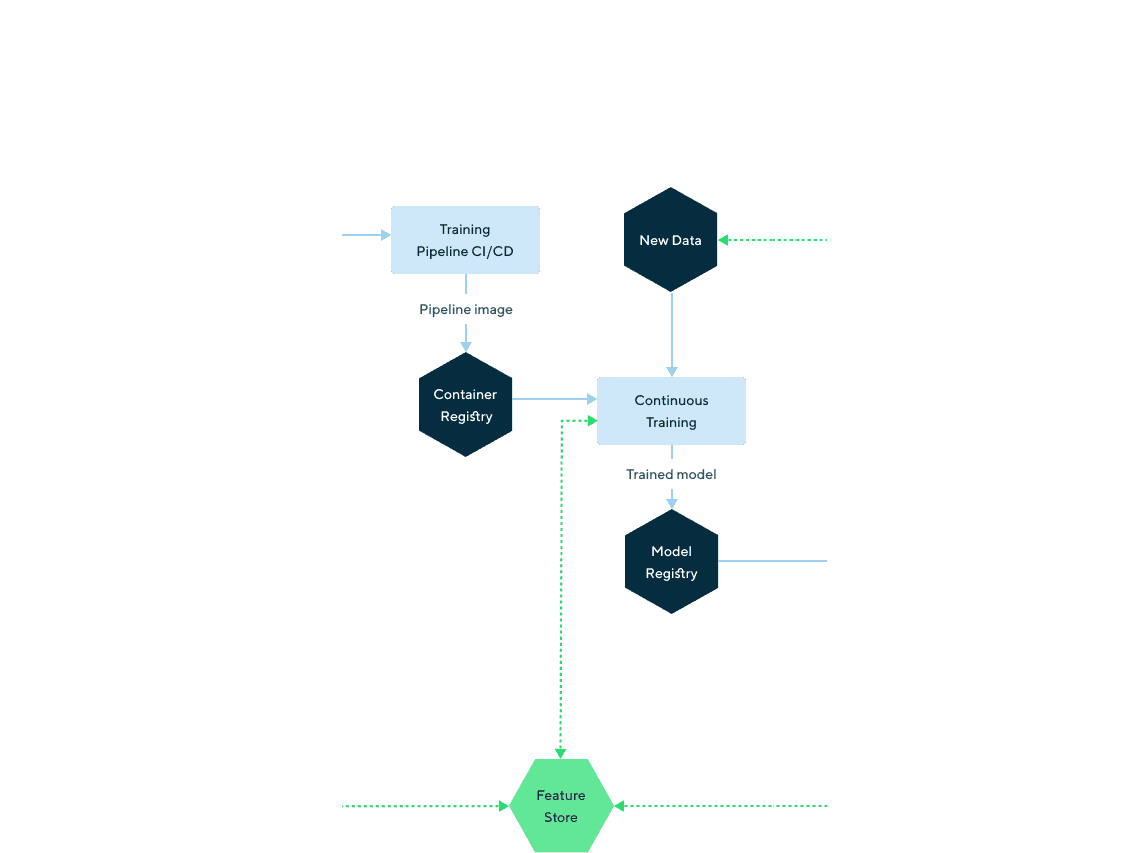

Training - where they structure the model training process into a pipeline

Serving - when the model is ready for production deployment

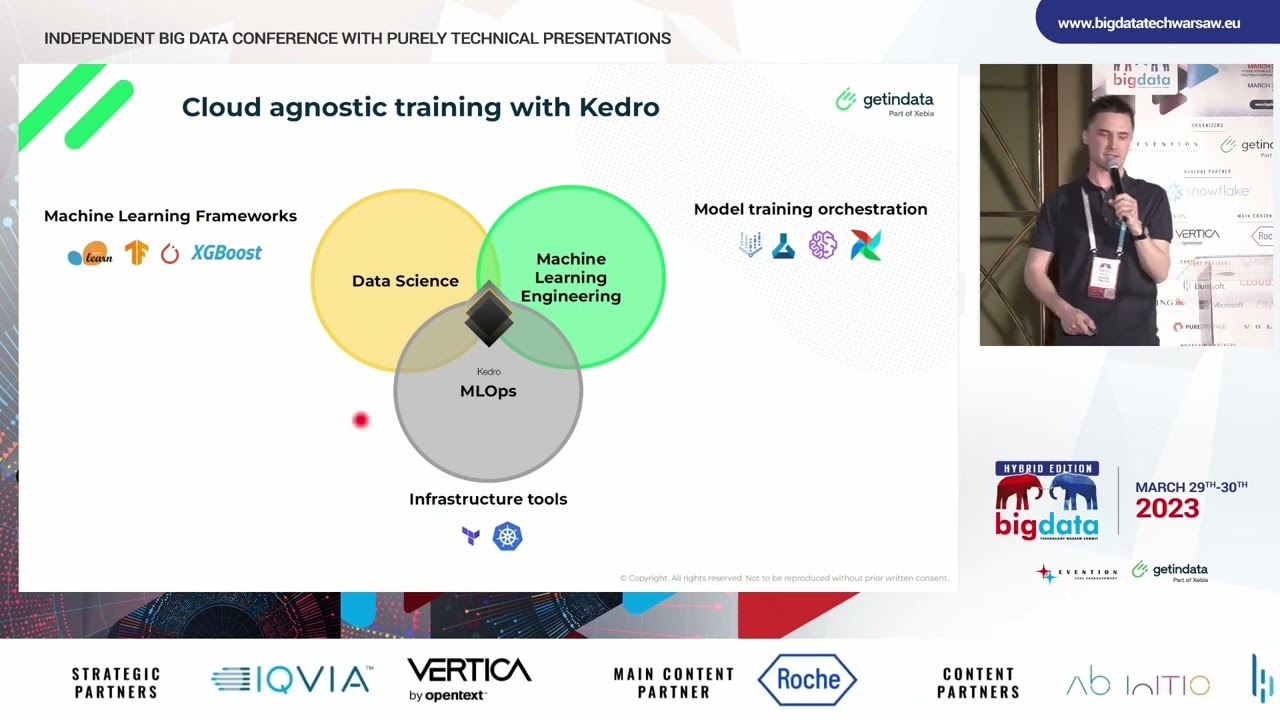

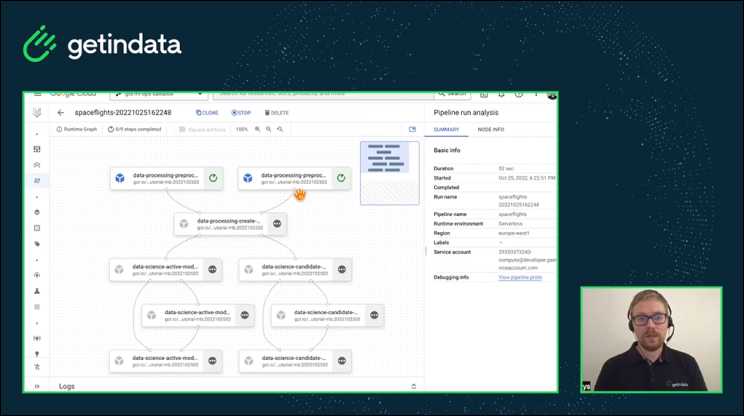

Data Scientists first need to get familiar with the available data, discover potential features and then build the prototype solution. This step is usually unstructured and requires a lot of experimentation. The key feature of the MLOps platform here is to provide the environment for prototyping and exploration, which allows faster development and enables a smooth transition to the production environment (e.g. by using version control, containers and Kedro as an MLOps framework).

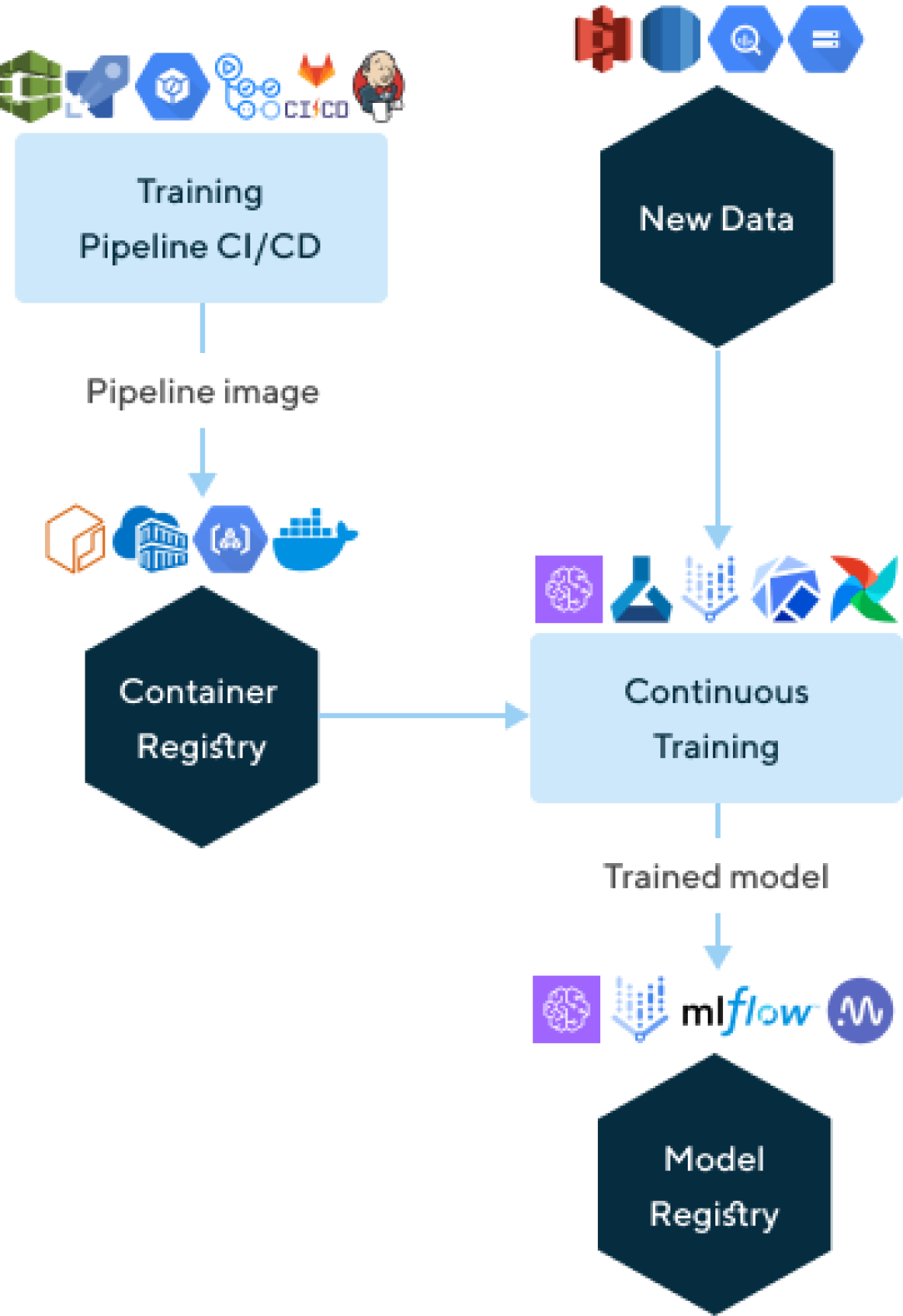

In the Training phase, we build automation for the Continuous Integration/Continuous Deployment process, which outputs the containerized Machine Learning Pipeline. Next, we automate the Continuous Training process to execute the pipeline on the new data when it’s available. Continuous Training produces the Machine Learning model, which we register on the Model Registry, along with the model performance metrics and the other artifacts. This allows us to track model performance over time and decide which one we want to deploy for serving.
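The registration and selection step described above can be sketched in a few lines. This is only a minimal illustration, assuming an in-memory registry: `InMemoryModelRegistry`, `ModelVersion` and the method names are hypothetical and do not correspond to any specific Model Registry product or to the platform's actual implementation.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class ModelVersion:
    """One registered model together with its metrics and artifact locations."""
    name: str
    version: int
    metrics: Dict[str, float]
    artifacts: Dict[str, str] = field(default_factory=dict)


class InMemoryModelRegistry:
    """Hypothetical stand-in for a real Model Registry service."""

    def __init__(self) -> None:
        self._versions: Dict[str, List[ModelVersion]] = {}

    def register(
        self,
        name: str,
        metrics: Dict[str, float],
        artifacts: Optional[Dict[str, str]] = None,
    ) -> ModelVersion:
        # Each Continuous Training run adds a new, incrementally numbered version.
        versions = self._versions.setdefault(name, [])
        model = ModelVersion(name, len(versions) + 1, dict(metrics), dict(artifacts or {}))
        versions.append(model)
        return model

    def history(self, name: str, metric: str) -> List[Tuple[int, float]]:
        # Metric values in registration order, to track performance over time.
        return [(v.version, v.metrics[metric]) for v in self._versions.get(name, [])]

    def best(self, name: str, metric: str, higher_is_better: bool = True) -> Optional[ModelVersion]:
        # Pick the version to consider for serving; None if nothing is registered.
        versions = self._versions.get(name, [])
        if not versions:
            return None
        pick = max if higher_is_better else min
        return pick(versions, key=lambda v: v.metrics[metric])
```

In this sketch, each training run would call `register(...)` with its metrics, `history(...)` would feed a performance-over-time view, and `best(...)` would return the candidate for deployment. A real registry would also persist the model files and enforce access control.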

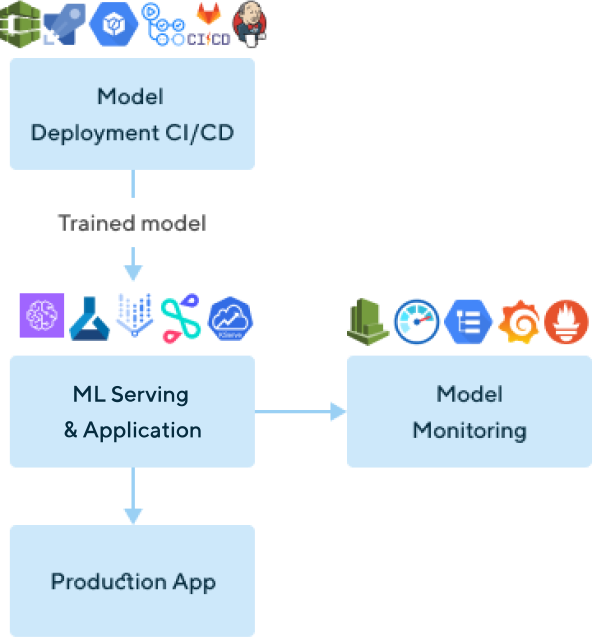

In the Serving phase, we typically deploy the model to the production environment so that the application can use it to serve predictions for new data, either online or offline. Additionally, we set up model monitoring here to measure model performance over time and to trigger model re-training when necessary.
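One simple way to picture the monitoring and re-training trigger is a rolling accuracy check. The sketch below is an assumption made for illustration only: the `PerformanceMonitor` class, the accuracy metric, the window size and the tolerance are all hypothetical, and a production setup might rely on different metrics or on data drift detection instead.

```python
from collections import deque
from typing import Deque, Hashable


class PerformanceMonitor:
    """Tracks accuracy over the most recent predictions (hypothetical helper)."""

    def __init__(self, baseline: float, tolerance: float = 0.05, window: int = 100) -> None:
        self.baseline = baseline      # accuracy measured when the model was deployed
        self.tolerance = tolerance    # allowed drop before re-training is requested
        self._outcomes: Deque[int] = deque(maxlen=window)

    def record(self, prediction: Hashable, actual: Hashable) -> None:
        # Store 1 for a correct prediction, 0 for an incorrect one.
        self._outcomes.append(1 if prediction == actual else 0)

    def accuracy(self) -> float:
        # Until any outcome is recorded, report the deployment-time baseline.
        if not self._outcomes:
            return self.baseline
        return sum(self._outcomes) / len(self._outcomes)

    def needs_retraining(self) -> bool:
        # Only decide once the window is full, to avoid reacting to a few samples.
        window_full = len(self._outcomes) == self._outcomes.maxlen
        return window_full and self.accuracy() < self.baseline - self.tolerance
```

When `needs_retraining()` returns `True`, the serving side could start the Continuous Training pipeline on the newest data. Waiting for a full window is a deliberate choice here so that a handful of wrong predictions does not trigger an expensive re-training run.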

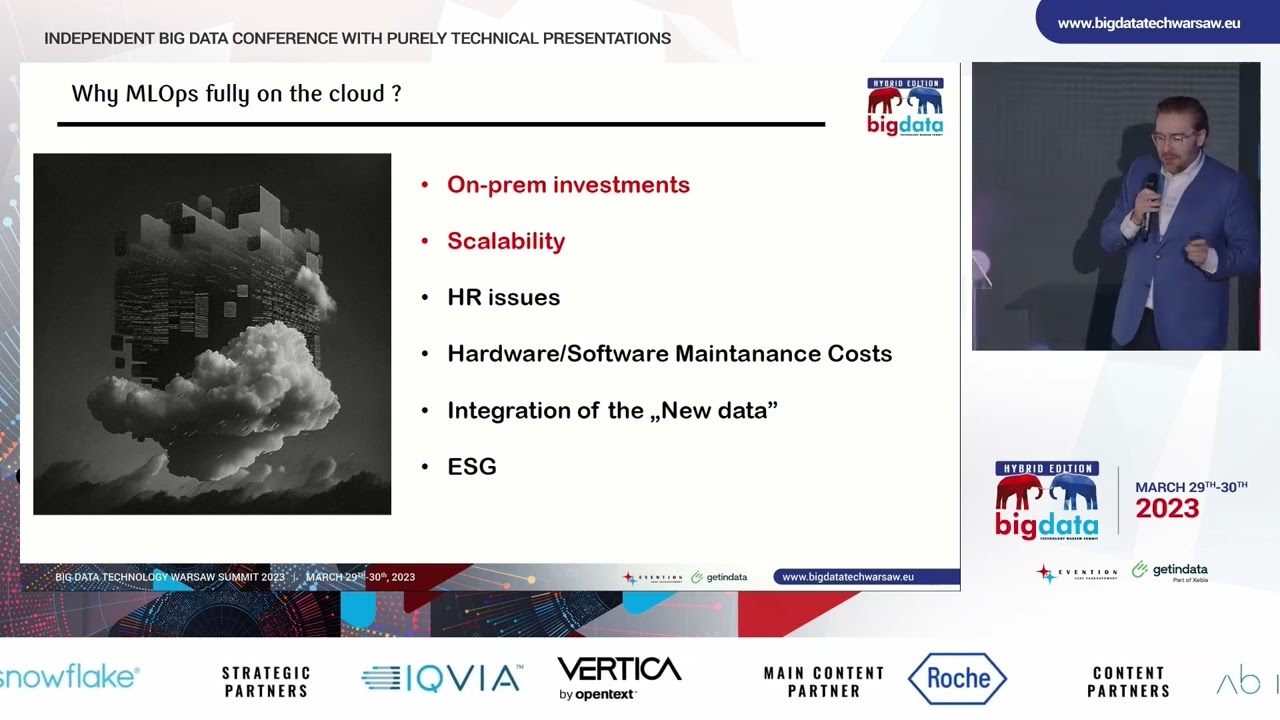

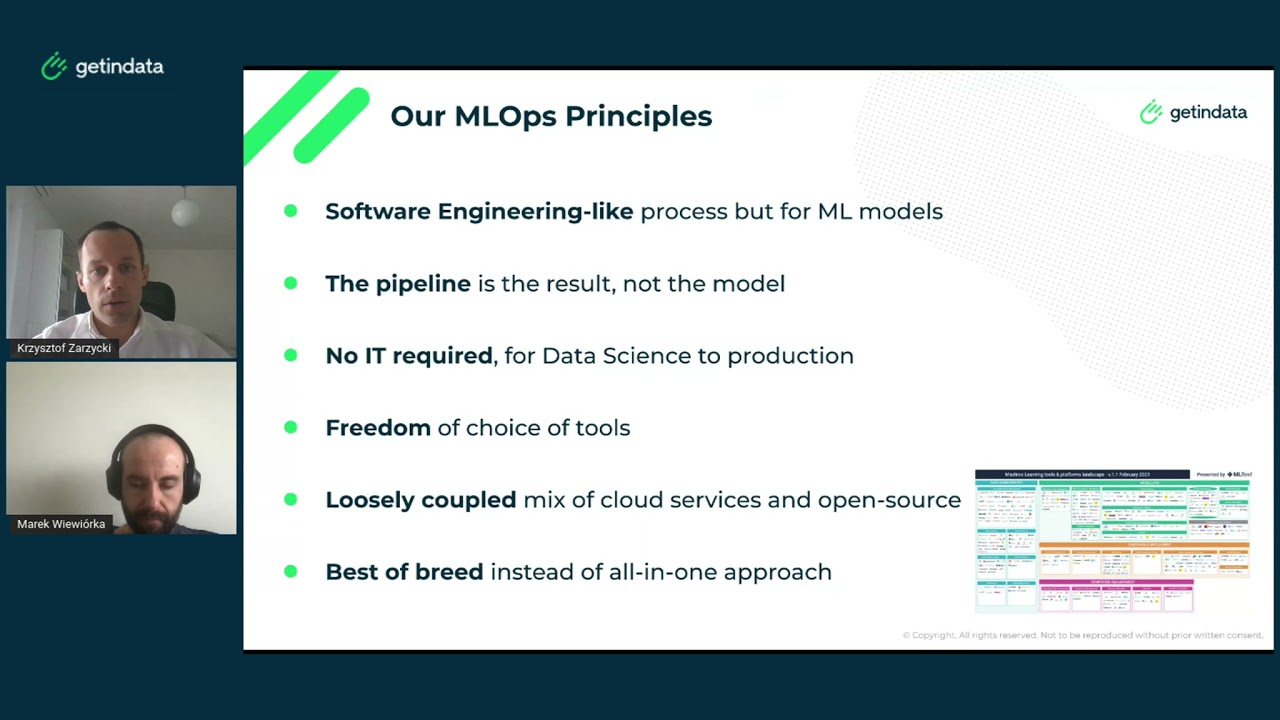

Our MLOps Principles

What makes our approach different?

The YOU® MLOps Platform is based on tools you already know and use, so changes for your team and costs are minimized.

The result is a loosely coupled system of components, each of which can be replaced independently.

MLOps Benefits

Democratization of Machine Learning within the company

One of our clients has opened the door to a group of data analysts five times larger than before

Faster time to market

Repeatable

A trustworthy process makes it easy for the data science team to experiment in real-time with their models, which leads to an increase in the scale of AI effort

Flexibility and scalability

You can focus on the value delivered by models rather than debugging code on different platforms

Built-in security

The quality gates allow you to keep control over what gets exposed to the end-users

Cost reduction & cost control

Automation

Benefits for Data Science Teams

- Reliable experiment tracking

- Easy path to the cloud

- Faster time to production

- Model management

*We work with clients all over the world in distributed teams, but if we worked together in one location we could look just like this according to AI (generated by AI based on our images).

How can you build MLOps solutions with us?

We have a unique way of working with clients that allows us to build deep trust-based partnerships. This is based on a few powerful and pragmatic principles, tested and refined over many years of our consulting and project delivery experience. In the MLOps area we work in a combined team (your experts and ours) on a custom best-of-breed solution to implement an MLOps platform based on tools that your company already uses and knows. This is how we minimize the input and maximize the output of the process.

As a result we leave you with

A deployed, optimized MLOps Platform that you are able to manage on your own

Full documentation of the solution

Onboarded and trained users (knowledge transfer)

Readiness to get the full potential out of Machine Learning and achieve business value in your market

YOU® MLOps Platform - deployed and functioning independently

This is what implementation of our MLOps solution looks like on a timeline

We scope the proof-of-concept project (POC) with an example use case, gather functional requirements and prepare the environment

- Requirements gathering (workshops)

- Current infrastructure / product / dependencies overview

- Plan the process of building the MLOps Platform

- Access gathering / sandbox environment setup

- Demo of the MLOps Platform

- Design the MLOps Platform architecture for POC

- Project requirements

- Implementation plan

- MLOps Platform demo

- MLOps Platform architecture (POC)

We implement the proof-of-concept (POC) project.

- Start implementation of the POC

- Propose and confirm the technologies to implement the MLOps Platform Components

- Implement the POC project with the client’s checks

- Demo of the POC project

- MLOps Platform architecture (POC) with technologies

- MLOps Platform POC implementation

- Demo of POC + review

We get the client ready to use and maintain THEI® MLOps Platform

- Prepare the documentation along with the final architecture design

- Train / onboard new MLOps Platform users

- Hand-off process

- Documentation

- Onboarding workshops

See our MLOps videos

More about MLOps

Our open-source plugins

We are proud that our experts contribute to the most important technologies. We also develop our own open-source solutions, such as plugins for Kedro, so you can run it... everywhere.

Kedro Kubeflow

Kedro VertexAI

Kedro Airflow k8s

Kedro AzureML

Snowflake

AWS SageMaker

We are proud partners of:

Contact us

Ready to build YOU® MLOps Platform?

Please fill out the form and we will get back to you as soon as possible to schedule a meeting to discuss your MLOps needs.
