Analyse your data stream in real time and make the most out of it

Looking for a single platform that analyzes data streams from various sources in real time? With the Complex Event Processing Platform you can collect data from a variety of sources and instantly deliver event processing results to the destination systems.

How does Complex Event Processing work?

Data Source

Your IT systems exchange vast amounts of information. This includes technical messages about opening a form on your website, network traffic information and sensor data, but also more meaningful information such as new orders from your customers.

You probably already have access to most of that information in dedicated systems, in a more aggregated form and on demand. But what would you do if you could combine messages from different systems and react on the spot, right after they were generated? Event processing systems are designed to analyse messages in real time, enrich them with external information, combine them into more complex events, analyze them for patterns and trigger actions.

Continuous Data Collection

Continuously collect data from various sources such as transactional databases, application log files, messaging queues and IoT APIs. Collect it in multiple formats, such as CSV, JSON, XML or Avro, and via many protocols, such as HTTP, FTP, NFS or AMQP. Use CDC (Change Data Capture) to receive a stream of changes from databases. Turn batch sources into streams of events as well, so you can work on them in a streaming manner.
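To illustrate what consuming a CDC stream can look like, here is a minimal Python sketch that replays change events onto an in-memory copy of a table. The event layout (`op`, `key`, `row`) and the `apply_changes` helper are assumptions made for this example, not the format or API of any specific CDC tool.

```python
from typing import Any, Dict, Iterable

# Hypothetical change-event layout, assumed for this example only:
#   {"op": "insert" | "update" | "delete", "key": <primary key>, "row": {...}}
ChangeEvent = Dict[str, Any]


def apply_changes(events: Iterable[ChangeEvent]) -> Dict[Any, Dict[str, Any]]:
    """Replay a stream of change events into a key -> row snapshot."""
    table: Dict[Any, Dict[str, Any]] = {}
    for event in events:
        op, key = event["op"], event["key"]
        if op == "insert":
            table[key] = dict(event["row"])
        elif op == "update":
            # Merge the changed columns into the existing row (or create it).
            table.setdefault(key, {}).update(event["row"])
        elif op == "delete":
            table.pop(key, None)
        else:
            raise ValueError(f"unknown operation: {op!r}")
    return table


changes = [
    {"op": "insert", "key": 1, "row": {"status": "new", "total": 40}},
    {"op": "insert", "key": 2, "row": {"status": "new", "total": 15}},
    {"op": "update", "key": 1, "row": {"status": "paid"}},
    {"op": "delete", "key": 2, "row": None},
]
snapshot = apply_changes(changes)
print(snapshot)  # {1: {'status': 'paid', 'total': 40}}
```

Because every change arrives as its own event, downstream logic can react to the update of order 1 the moment it happens instead of waiting for the next batch export.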

Analyst workbench

The Analyst workbench is the user interface where analysts create, browse, update or delete the configurations executed by the streaming platform. Streaming jobs are configured using Python, Java or SQL, depending on the required level of abstraction and the performance goal. The workbench is integrated with the CI/CD pipeline, version control and the test and review process for smooth deployment.

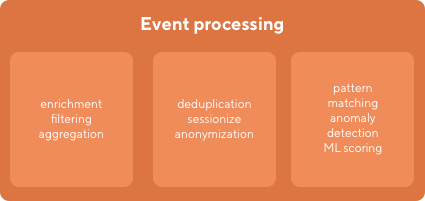

Continuous processing

Event processing is the core of the whole solution. This component can filter single events, remove duplicates, enrich events with data from external sources and sessionize them into business events. It can then aggregate the events, find complex patterns or detect anomalies. Finally, it can score the events with Machine Learning models and, when an event meets the required conditions, trigger the appropriate action or alert.
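The first stages described above (filtering, deduplication, enrichment and sessionization) can be sketched as a chain of Python generators. This is only an illustration under stated assumptions: the field names (`id`, `user`, `ts`, `type`), the `segments` lookup and the 30-second session gap are invented for the example, and a production streaming engine would keep this state in a bounded, fault-tolerant store rather than in memory.

```python
from typing import Any, Dict, Iterable, Iterator, List, Set

Event = Dict[str, Any]

# Assumed for this sketch: a session ends after 30 seconds of inactivity.
SESSION_GAP_SECONDS = 30


def keep_types(events: Iterable[Event], allowed: Set[str]) -> Iterator[Event]:
    """Filter: pass through only events of the allowed types."""
    return (e for e in events if e["type"] in allowed)


def deduplicate(events: Iterable[Event]) -> Iterator[Event]:
    """Cleanse: drop events whose id has already been seen (unbounded state)."""
    seen: Set[Any] = set()
    for e in events:
        if e["id"] not in seen:
            seen.add(e["id"])
            yield e


def enrich(events: Iterable[Event], segments: Dict[str, str]) -> Iterator[Event]:
    """Enrich: attach a customer segment looked up from an external source."""
    for e in events:
        yield {**e, "segment": segments.get(e["user"], "unknown")}


def sessionize(events: Iterable[Event]) -> Dict[str, List[List[Event]]]:
    """Group each user's events into sessions; assumes events are ordered by ts."""
    sessions: Dict[str, List[List[Event]]] = {}
    for e in events:
        user_sessions = sessions.setdefault(e["user"], [])
        last = user_sessions[-1] if user_sessions else None
        if last and e["ts"] - last[-1]["ts"] <= SESSION_GAP_SECONDS:
            last.append(e)
        else:
            user_sessions.append([e])
    return sessions


raw = [
    {"id": "a", "user": "u1", "ts": 0, "type": "click"},
    {"id": "a", "user": "u1", "ts": 0, "type": "click"},       # duplicate
    {"id": "b", "user": "u1", "ts": 10, "type": "heartbeat"},  # filtered out
    {"id": "c", "user": "u1", "ts": 20, "type": "order"},
    {"id": "d", "user": "u1", "ts": 100, "type": "click"},     # new session
    {"id": "e", "user": "u2", "ts": 105, "type": "click"},
]
segments = {"u1": "premium"}  # stand-in for an external data source

pipeline = enrich(deduplicate(keep_types(raw, {"click", "order"})), segments)
sessions = sessionize(pipeline)
```

Each stage consumes and produces a stream of events, so stages can be reordered or extended (for example with an aggregation or scoring step) without changing the others.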

External Data Source

It may happen that you want to use data that is not available in your Data Lake, e.g. for data enrichment. Our design allows you to access data from multiple systems, like external databases, files and data stores, within a single query. You do not need to load data from different sources to use them in your event processing.

ML Models

Including Machine Learning models in your real-time decision-making process can be very beneficial. In our design you can enrich your events with the output of external models, for example to score your users in real time, serve recommendations or detect fraud.

Data Lake

Store the structured data (such as transactions from an e-commerce system), semi-structured data (e.g. XML or JSON files) and unstructured data (such as images or documents) that is collected and transformed in ESP. Make it accessible for reporting and analytics purposes. The ESP platform archives processed messages in the Data Lake and makes them available for further Big Data analytics.

Continuous Data Delivery

Instantly deliver the results of event processing to the destination systems, in the format and protocol they expect. The events can be published to a message queue, pushed to an HTTP API or inserted into a database. They can also be batched and sent as a file to a file broker.

Security

The security and access management tool lets you control user access to the data and components of the environment. It also provides audit capabilities for verifying who has access to specific resources.

Automation

Deployment automation with proper configuration management is key to ensuring high-quality software delivery and reducing the risk of production deployments. All our code is stored in a version control system. We design tests to be part of the Continuous Integration and Continuous Deployment pipelines.

Monitoring

A comprehensive monitoring and observability solution gives detailed information on the state and performance of the components. You can also add your own metrics to observe how the application processes data. Monitoring also includes the alerting capabilities needed for reliability and supportability.

Orchestration

Originally, all the components of the Hadoop ecosystem were installed with YARN as the orchestrator to achieve scalability and manage infrastructure resources. Nowadays Kubernetes is becoming the new standard for managing resources in distributed computing environments. We design our applications and workloads to run directly on Kubernetes.

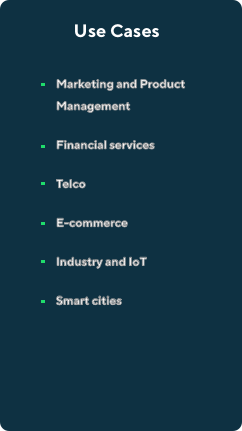

Use Cases

Imagine you stream all the events generated by users (such as clickstream), all the messages sent by different systems, even internal ones, and all transactional data changes (for example from CDC) through one huge pipeline. Imagine that you are able to pick out the ones that have some semantic meaning for you, derive other complex events from them and take a certain action in response. The action could be sending a message to the customer, personalizing the website or computing a real-time metric that half of the company looks at.

What is a Complex Event Processing Platform?

It is a platform that allows you to ingest data from various sources (IT system messages, Internet of Things data, application logs and others), apply advanced logic (data enrichment, filtering, sessionization, etc.) to the stream of events and deliver the output to external consumers.

How can a Complex Event Processing Platform help your business?

CEP gives organizations many opportunities to manage and use the data they collect. Below are some general ideas. See how Complex Event Processing can benefit your business, from business process management, through real-time data monitoring and fraud detection, to faster adaptation and integration possibilities.

Faster adaptation

Event processing for business focuses on drawing conclusions from events and enabling instant adaptation to the situation.

Integration possibilities

It is possible to integrate the platform with Machine Learning models, which will further increase the efficiency of data-driven activities.

Business Process Management

An event processing flow can combine actions happening in independent systems and act on them according to business logic, automating processes without modifying the source systems.

Anomaly and fraud detection

These capabilities also make it much easier to discover anomalies or fraud, which enables an almost immediate response to the problem.
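As a minimal illustration of anomaly detection on a stream, the Python sketch below flags values that deviate strongly from a rolling window of recent values. The window size, the z-score threshold and the `detect_anomalies` helper are assumptions chosen for the example; a real deployment would more likely rely on a trained model or on domain-specific rules.

```python
from collections import deque
from statistics import mean, pstdev
from typing import Deque, Iterable, Iterator, Tuple


def detect_anomalies(
    values: Iterable[float], window: int = 5, threshold: float = 3.0
) -> Iterator[Tuple[int, float]]:
    """Yield (index, value) for values far from the rolling window's mean."""
    history: Deque[float] = deque(maxlen=window)
    for index, value in enumerate(values):
        # Only start judging once the window is full.
        if len(history) == window:
            mu = mean(history)
            sigma = pstdev(history)
            if sigma > 0 and abs(value - mu) / sigma > threshold:
                yield index, value
        history.append(value)


# Hypothetical transaction amounts with one obvious outlier.
amounts = [10, 12, 11, 9, 10, 11, 250, 10, 12]
anomalies = list(detect_anomalies(amounts))
print(anomalies)  # [(6, 250)]
```

The function processes one value at a time and keeps only a fixed-size window, so it can run on an unbounded stream and raise an alert as soon as the suspicious event arrives.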

Data-driven decisions

Thanks to a Complex Event Processing Platform, you get clear results instantly, which makes marketing and sales decisions easier and more data-driven.

Real-time monitoring

The platform's scalability lets you ingest massive amounts of data and compute business measures and KPIs for monitoring online business activity.

Get Free White Paper

Read more about Complex Event Processing. Find out why analysing events is important for your business, what you can get out of events and how to implement CEP in your organization.

Use the Complex Event Processing Platform for real-time analysis of your data

How do we work with customers?

Our team of experienced architects, developers and data engineers has already completed a number of projects involving real-time event processing. We regularly give presentations at international and local conferences and events.

Your use case

Technical assessment

Solutions proposal

Production-grade solution

Discovery phase

Shared Teams

Extensions

Handover

Big Data for Business

If you are interested in how we work with clients, how we develop the project and how we take care of the smallest details, go to the Big Data for Business website.

There you will learn how our Big Data projects can support your business.

Knowledge base

We are happy to share with you the knowledge gained through practice when building complex Big Data projects for business. If you want to meet our specialists and listen to how they share their Big Data experiences, visit our knowledge library!

Ready to build a Complex Event Processing Platform?

Please fill out the form and we will get back to you as soon as possible to schedule a meeting to discuss your event processing needs.

What did you find most impressive about GetInData?