Companies planning to process data in the cloud face the difficulty of choosing the right data warehouse. Choosing the right solution is one of the most important decisions at the early stage of a project, because the project's cost-effectiveness depends on it. Today, I would like to focus on comparing the pricing models of two of the leading solutions on the market: Snowflake and BigQuery.

Cloud data warehouses like BigQuery and Snowflake have become extremely popular in recent years. Their low cost and fully managed services make it easy for businesses to get started and scale their data analysis efforts as needed. However, the pricing models for these services can be complicated, with a lot of factors affecting cost.

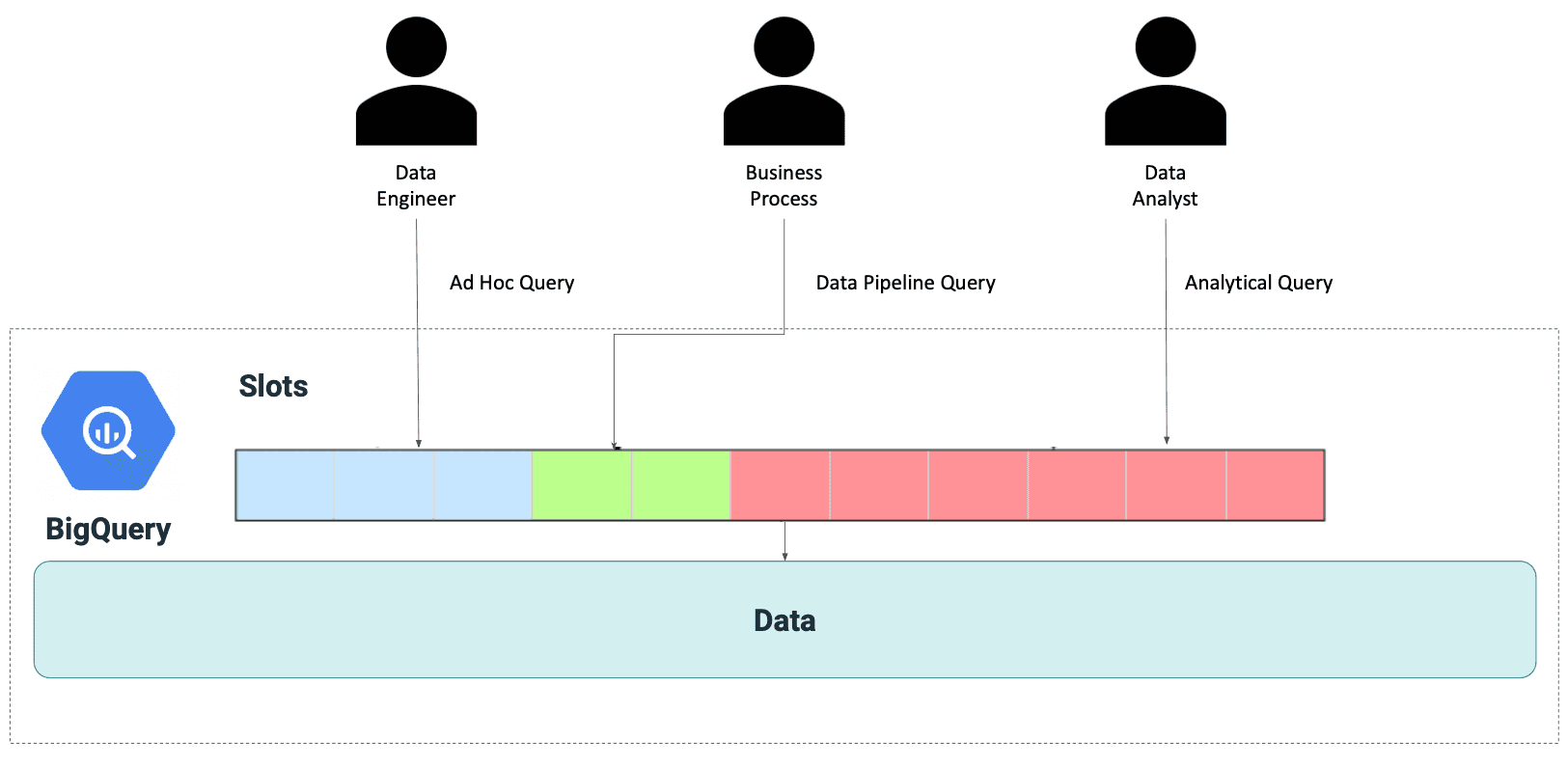

In BigQuery, you pay for each TB of storage and for computation, depending on the pricing model you've chosen. The computation layer is based on the "slots" model. A slot is a unit of computational capacity that BigQuery uses to process and execute queries. Slots are pooled, so you can run multiple queries in parallel and increase your utilization. As a result, you can scale up or down without provisioning any capacity in advance. However, the number of slots a query uses determines its computation cost, and the number of queries that can run in parallel depends on the slots available.

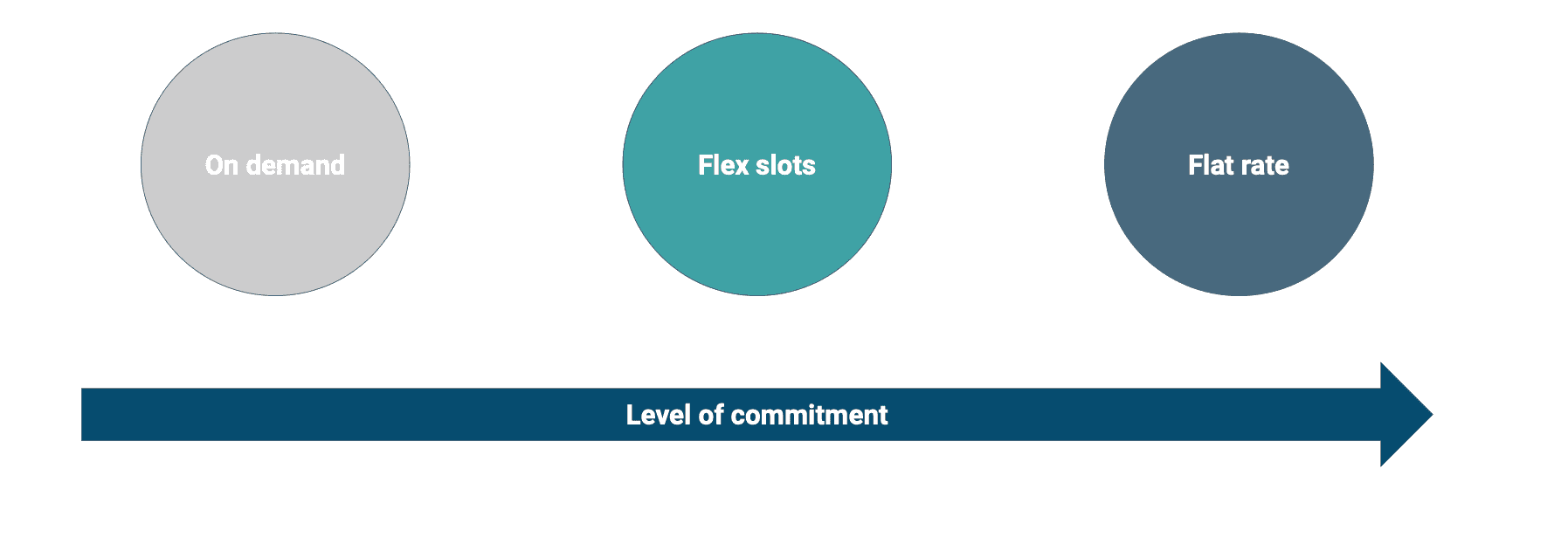

In BigQuery, you can choose one of a few pricing models:

On-demand mode: the more TBs a query scans, the higher the cost. You have access to up to 2000 shared slots, and you are billed for every TB of scanned data.

Flat-rate mode: you purchase slots, which are virtual CPUs. Slot reservation costs $2000 per month per 100 slots. You pay a fixed monthly fee and can run as many queries as your reserved slots allow. There is no charge for scanning data.

Flex-slots mode: you purchase slots for short durations and are billed only for the time the Flex Slots are deployed, so you pay for what you consume without a monthly commitment.

BI Engine: BI Engine is a fast, in-memory analysis service that integrates with BigQuery. With BI Engine, you can analyze the data stored in BigQuery with sub-second query response times served from the cache, and you are billed per GB per hour of data held in memory.
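To make the four billing rules above concrete, here is a minimal Python sketch of how each one could be turned into a cost formula. Only the flat-rate price ($2000 per month per 100 slots) comes from this post. The on-demand price per TB, the Flex Slots hourly rate and the BI Engine rate are passed in as parameters because they are not given here, and the values in the usage lines are purely illustrative assumptions. The rule that slots are bought in blocks of 100 is also an assumption made for the sketch.

```python
# Flat-rate price quoted in this post: $2000 per month per 100 slots.
FLAT_RATE_USD_PER_100_SLOTS = 2000.0


def on_demand_monthly_cost(scanned_tb: float, usd_per_tb: float) -> float:
    """On-demand: billed per TB scanned. usd_per_tb is an assumed input."""
    return scanned_tb * usd_per_tb


def flat_rate_monthly_cost(slots: int) -> float:
    """Flat-rate: fixed monthly fee, independent of the data scanned."""
    # Assumption for this sketch: slots are purchased in blocks of 100.
    if slots <= 0 or slots % 100 != 0:
        raise ValueError("slots must be a positive multiple of 100")
    return slots / 100 * FLAT_RATE_USD_PER_100_SLOTS


def flex_slots_cost(slots: int, hours: float, usd_per_slot_hour: float) -> float:
    """Flex Slots: billed only while deployed. The hourly rate is an assumed input."""
    return slots * hours * usd_per_slot_hour


def bi_engine_cost(memory_gb: float, hours: float, usd_per_gb_hour: float) -> float:
    """BI Engine: billed per GB per hour held in memory. The rate is an assumed input."""
    return memory_gb * hours * usd_per_gb_hour


# Illustrative usage; 5.0 USD per TB is a placeholder, not a quoted price.
print(on_demand_monthly_cost(scanned_tb=120, usd_per_tb=5.0))  # 600.0
print(flat_rate_monthly_cost(slots=500))                       # 10000.0
```

Before relying on numbers like these, check the current rates in the official pricing documentation, since they change over time and differ by region.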

Without knowing which queries you run or how complex your tables are, it's impossible to say which pricing model is the best option for you. In most cases, it makes sense to start with on-demand mode and switch to flat-rate or flex-slots mode later as a cost optimization, because it is often hard to estimate up front how much data the queries will process. If you would like to estimate the costs of using BigQuery in your organization in detail, I encourage you to use the official calculator provided by GCP.
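One way to decide when the switch from on-demand to flat-rate pays off is to compute a break-even point: the monthly scan volume at which the on-demand bill equals the flat-rate fee. The sketch below is a simplification under stated assumptions. The function names are hypothetical, the on-demand price per TB is an input you need to supply yourself, and the comparison looks at price only.

```python
def break_even_tb(flat_rate_monthly_usd: float, usd_per_tb: float) -> float:
    """Monthly TB scanned at which on-demand cost equals the flat-rate fee."""
    if usd_per_tb <= 0:
        raise ValueError("usd_per_tb must be positive")
    return flat_rate_monthly_usd / usd_per_tb


def cheaper_model(scanned_tb: float, flat_rate_monthly_usd: float, usd_per_tb: float) -> str:
    """Return the cheaper of the two models for a given monthly scan volume."""
    on_demand_usd = scanned_tb * usd_per_tb
    return "flat-rate" if on_demand_usd > flat_rate_monthly_usd else "on-demand"


# 2000.0 is the fee for 100 slots quoted in this post; 5.0 USD per TB is an
# illustrative placeholder, not a quoted price.
print(break_even_tb(2000.0, 5.0))        # 400.0
print(cheaper_model(100, 2000.0, 5.0))   # on-demand
print(cheaper_model(600, 2000.0, 5.0))   # flat-rate
```

Keep in mind that 100 reserved slots may execute queries more slowly than the shared on-demand pool, so the cheaper option on paper is not automatically the better one.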

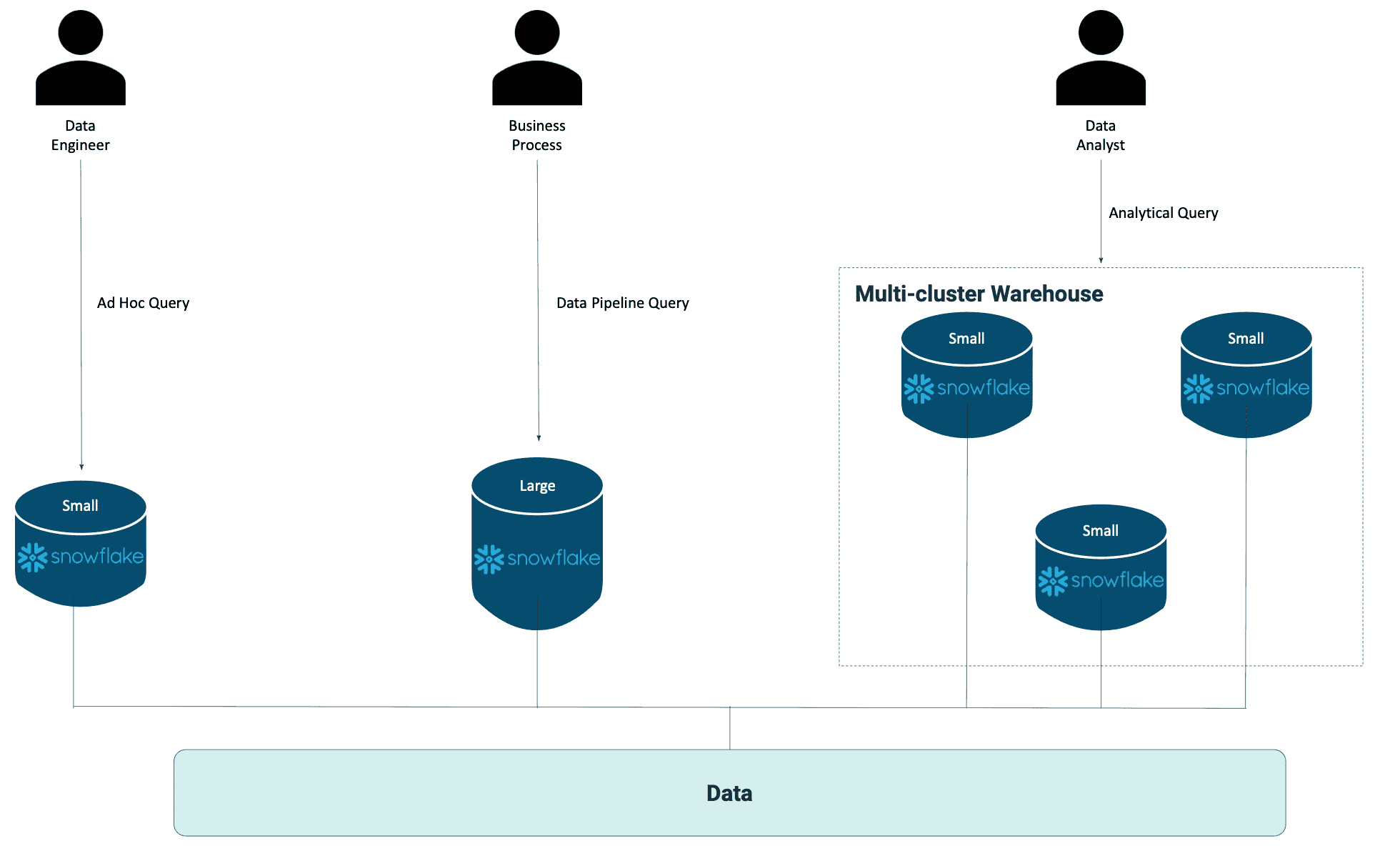

Snowflake makes it easy to set up multiple virtual warehouses for different use cases. It allows you to decouple your data and manage your resources and costs independently for each use case.

Snowflake's cloud-built architecture is designed for data-intensive computing at any scale. It gives you the flexibility to adjust the computing power for different business cases.

So, let’s take a look at the Snowflake pricing model in detail:

The virtual warehouse concept allows you to resize computing resources on demand to handle dynamically changing workloads, without locking yourself into a specific amount of computing resources. You can therefore start small and pay more only as your usage grows; there are no upfront commitments.

For example, consider a company that uses Snowflake for various data science tasks and for business intelligence (BI) reporting. The company might set up two separate virtual warehouses, one for data science workloads and another for BI reporting workloads, which allows it to manage capacity and costs separately for each of them.
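The two-warehouse example can be sketched as a small per-warehouse cost breakdown. This post does not spell out Snowflake's billing unit, so the sketch assumes that each warehouse consumes credits for every hour it runs and that credits have a fixed price. The class name, the credit rates, the running hours and the price per credit below are all hypothetical inputs chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class WarehouseUsage:
    name: str
    credits_per_hour: float  # assumed input; depends on the warehouse size
    hours_running: float     # hours the warehouse was actually running


def cost_per_warehouse(usages: list[WarehouseUsage], usd_per_credit: float) -> dict[str, float]:
    """Return the cost of each warehouse separately, keyed by warehouse name."""
    return {
        u.name: u.credits_per_hour * u.hours_running * usd_per_credit
        for u in usages
    }


# Hypothetical month: a larger warehouse used briefly for data science,
# and a smaller one running longer for BI reporting.
usages = [
    WarehouseUsage("data_science", credits_per_hour=4, hours_running=50),
    WarehouseUsage("bi_reporting", credits_per_hour=1, hours_running=160),
]
costs = cost_per_warehouse(usages, usd_per_credit=3.0)
print(costs)                 # {'data_science': 600.0, 'bi_reporting': 480.0}
print(sum(costs.values()))   # 1080.0
```

Because each workload has its own entry, resizing or pausing one warehouse changes only its own line in the breakdown.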

I hope this blog has cleared up one of the common questions about the Snowflake and BigQuery pricing models. The choice between Snowflake and BigQuery will depend on the organization's specific needs and usage patterns. Therefore, it is crucial to carefully evaluate the costs and capabilities of each platform before making a decision.

This blog post was prepared as a supplement to the ebook: “Power Up Machine Learning Process. Build Feature Stores Faster - an Introduction to Vertex AI, Snowflake and dbt Cloud”.
