Deploying MLflow on the Google Cloud Platform using App Engine

MLOps platforms delivered by GetInData allow us to pick best of breed technologies to cover crucial functionalities. MLflow is one of the key…

Training ML models and using them for online prediction in production is not an easy task. Fortunately, there are more and more tools and libraries that can help us meet this challenge.

Online ML model prediction often demands high performance and low latency. The Python ML stack is great for training models thanks to its large number of ML libraries, but execution speed is not the language's strong point. Of course, you can experiment with different Python interpreters (e.g. PyPy, which has a JIT compiler, as the JVM does), but I dare say that in most cases a Java application will be faster than a Python one. Still, before making any architectural decision, running your own benchmark is more than recommended.

As far as I know, there are companies whose applications are built in Java/Scala, while the Data Science team uses Python. This brings integration challenges: how should you use the magnificent ML models your data scientists create inside your production services? One popular way is to wrap the models in containers and deploy them as microservices. But what if you could use these models directly in your app?

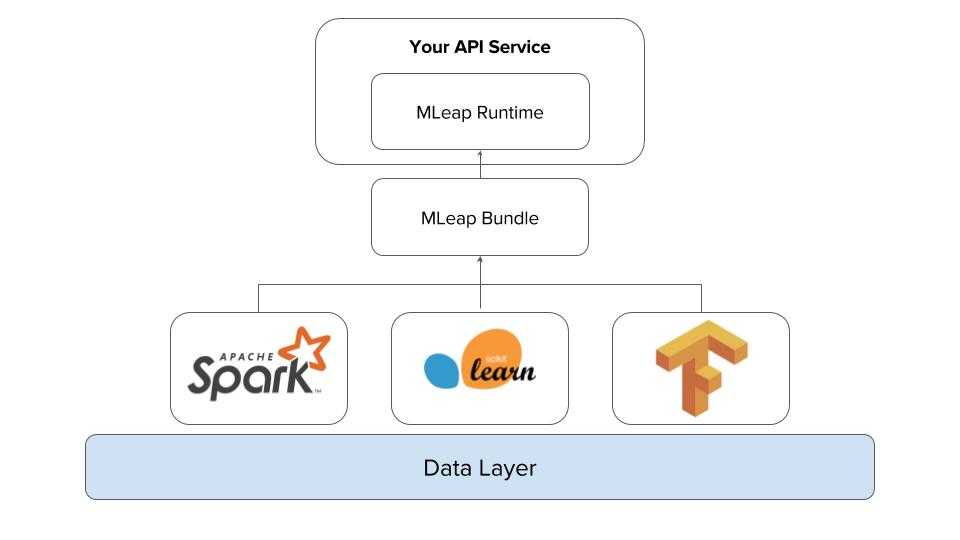

MLeap is a library that lets you serialize your ML pipelines and provides an execution engine for them. In other words, you can export trained ML models to a file, reuse them in your applications, and no longer need the dependencies required to train them. According to the official documentation (MLeap documentation - GitBook), it currently supports Spark, scikit-learn and TensorFlow.

MLeap Serving is a Docker image containing a ready-to-use Spring Boot app (MLeap Serving - GitBook). It exposes REST endpoints for uploading models and making predictions. It is a very handy tool to play with, or even to deploy to production if you don't need any sophisticated customization.

Let's do some coding and check how simple it is to use MLeap and see what benefits it brings.

First, we need an ML model. I will use the Decision Tree Regression example from the scikit-learn website.

However, instead of a single decision tree, I will change the example to use a random forest (RandomForestRegressor).

```python
import time

import numpy as np
# Importing these modules adds MLeap's mlinit()/serialize_to_bundle()
# methods to the scikit-learn classes used below
import mleap.sklearn.pipeline
import mleap.sklearn.ensemble.forest
from sklearn.ensemble import RandomForestRegressor
from sklearn.pipeline import Pipeline

# Create a random dataset
rng = np.random.RandomState(1)
X = np.sort(5 * rng.rand(80, 1), axis=0)
y = np.sin(X).ravel()
y[::5] += 3 * (0.5 - rng.rand(16))

for estimators in [1, 2, 4, 8, 16]:
    # Fit regression model
    regr = RandomForestRegressor(n_estimators=estimators, random_state=0)

    # Definitions needed for the bundle
    input_features = "x"
    output_vector_name = "output"
    output_features = ["y"]

    # Init MLeap on the regressor
    regr.mlinit(input_features, output_vector_name, output_features)

    # Wrap the regressor in a training pipeline
    pipeline = Pipeline([(regr.name, regr)])

    # Init MLeap on the pipeline
    pipeline.mlinit()

    # Fit the pipeline
    pipeline.fit(X, y)

    # Export the bundle to /tmp/mleap-example-<estimators>
    pipeline.serialize_to_bundle("/tmp", "mleap-example-" + str(estimators), True)

    # Benchmark: 5 rounds of 100,000 single-row predictions
    total = 0
    rounds = 5
    for j in range(rounds):
        start = time.perf_counter()
        for i in range(100000):
            pipeline.predict(rng.rand(1, 1))
        end = time.perf_counter()
        total += end - start
        print(f"{end - start:0.4f} seconds")

    print(estimators, total / rounds)
```

MLeap works with pipelines, which is why I changed the example to wrap the regressor in a pipeline.

Now let's check if the bundle exists:

```shell
$ ls /tmp/mleap-example-1/
bundle.json  root
```

Success!

The bundle structure is quite informative and easy to understand. For more details, check out mleap-docs/mleap-bundles.md in the combust/mleap-docs repository, which explains bundles in depth. Documentation is always your best friend.
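To get a feel for the layout, a small standard-library Python sketch can list every file in a directory bundle and load its top-level bundle.json. It assumes the directory bundle exported above (/tmp/mleap-example-1); the helper names are hypothetical, and since the exact keys in bundle.json depend on the MLeap version, the sketch simply prints whatever it finds.

```python
import json
import os


def list_bundle_files(bundle_dir):
    """Return the paths of all files in a directory bundle, relative and sorted."""
    paths = []
    for root, _dirs, files in os.walk(bundle_dir):
        for name in files:
            paths.append(os.path.relpath(os.path.join(root, name), bundle_dir))
    return sorted(paths)


def read_bundle_meta(bundle_dir):
    """Load the top-level bundle.json metadata as a dict."""
    with open(os.path.join(bundle_dir, "bundle.json")) as f:
        return json.load(f)


# Path assumed from the export step (n_estimators = 1); skipped if it does not exist.
bundle_dir = "/tmp/mleap-example-1"
if os.path.isdir(bundle_dir):
    for path in list_bundle_files(bundle_dir):
        print(path)
    print(json.dumps(read_bundle_meta(bundle_dir), indent=2))
```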

Now let's reuse the bundle in a Scala project. We will use MLeap Runtime, which lets us run transformations with trained models (bundles). To do that, we need the following dependency:

```scala
libraryDependencies += "ml.combust.mleap" %% "mleap-runtime" % "0.17.0"
```

Loading the bundle is very simple:

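```scala
import ml.combust.bundle.BundleFile
import ml.combust.bundle.dsl.Bundle
import ml.combust.mleap.runtime.MleapSupport._
import ml.combust.mleap.runtime.frame.Transformer
import resource._

// Load the directory bundle exported by the Python script (n_estimators = 1)
val bundle: ExtractableManagedResource[Bundle[Transformer]] =
  for (bf <- managed(BundleFile("file:/tmp/mleap-example-1"))) yield {
    bf.loadMleapBundle().get
  }

// The bundle's root is the pipeline transformer used for predictions
val transformer: Transformer = bundle.opt.get.root
```

We need to create the structure (schema) of the model input: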

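```scala
import ml.combust.mleap.core.types.{BasicType, StructField, StructType, TensorType}
val schema = StructType(List(StructField("x", TensorType(BasicType.Double)))).get
```

Next, we can prepare the input row: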

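```scala
import ml.combust.mleap.runtime.frame.{DefaultLeapFrame, Row}
import ml.combust.mleap.tensor.DenseTensor

val rand = new scala.util.Random()
val dataset = Seq(Row(DenseTensor(Array(rand.nextFloat().toDouble), List(1))))
val frame = DefaultLeapFrame(schema, dataset)
```

LeapFrame is the basic data structure in MLeap: a schema together with a sequence of rows.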
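To illustrate why the schema and the rows are built separately, here is a hypothetical toy model of the LeapFrame idea in Python: typed, named fields plus rows that must match them. It is only a conceptual sketch; `ToyLeapFrame` and `Field` are invented names and not part of MLeap's API.

```python
from dataclasses import dataclass
from typing import Any, List, Tuple


@dataclass(frozen=True)
class Field:
    name: str
    type_name: str  # e.g. "tensor<double>"; just a label in this toy model


@dataclass
class ToyLeapFrame:
    """Hypothetical stand-in for a LeapFrame: a schema plus rows."""
    schema: List[Field]
    rows: List[Tuple[Any, ...]]

    def __post_init__(self):
        # Every row must hold exactly one value per schema field.
        for row in self.rows:
            if len(row) != len(self.schema):
                raise ValueError(f"row {row!r} does not match a schema of {len(self.schema)} fields")

    def column(self, name: str) -> List[Any]:
        """Return all values of the named field (ValueError if unknown)."""
        idx = [f.name for f in self.schema].index(name)
        return [row[idx] for row in self.rows]


# Mirrors the Scala example: one field "x" holding a 1-element tensor.
frame = ToyLeapFrame(schema=[Field("x", "tensor<double>")], rows=[([0.42],)])
print(frame.column("x"))
```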

Finally, let's make the prediction:

```scala
println(transformer.transform(frame).get.dataset.head(1))
// output: 0.7138256817142807
```

It works, brilliant!

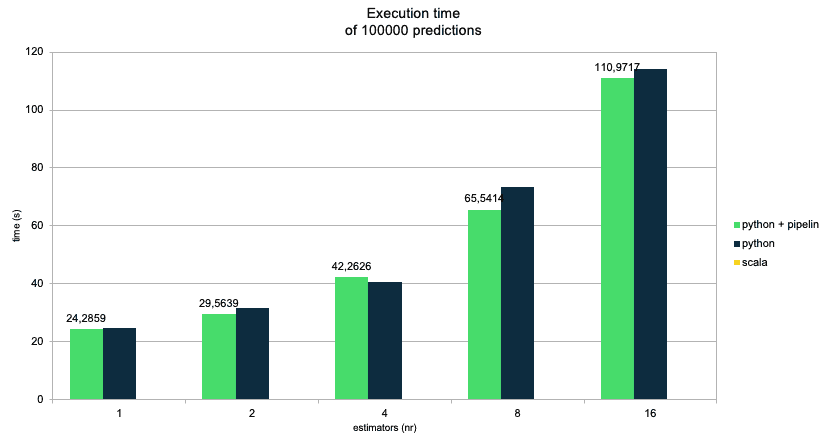

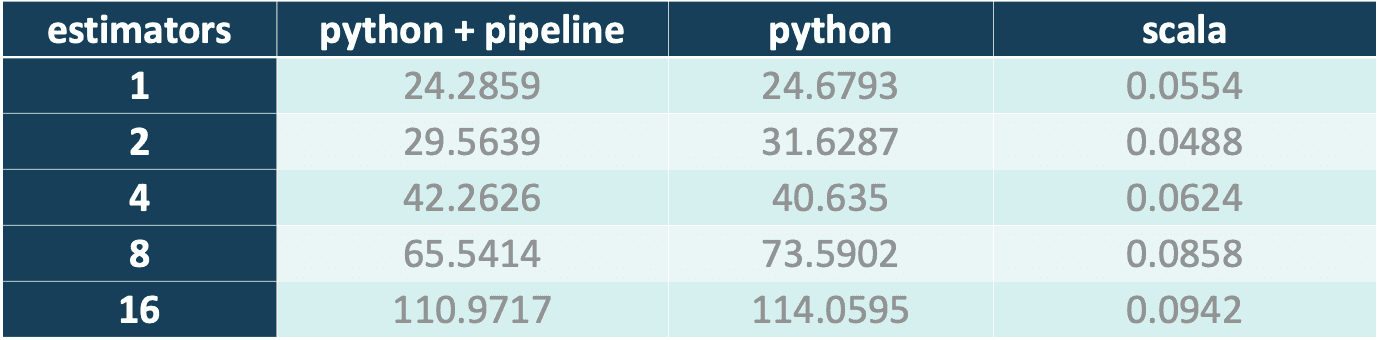

Now for the cherry on top: a performance benchmark to check whether using MLeap brings any benefits. It is a very simple benchmark, but it should give a general view of the differences between serving models with Python and with Scala. As you may remember, I wrapped the regressor in a pipeline. For clearer results, the benchmark also includes a model without a pipeline, so we can see whether the pipeline adds any overhead and whether that overhead is meaningful.

We have three cases: Python (scikit-learn) with a pipeline, Python without a pipeline, and Scala with MLeap Runtime.

For each of these cases, I ran 5 rounds of 100,000 predictions with the regressor. To check whether the complexity of the forest affects the results, I trained the model with different numbers of estimators: {1, 2, 4, 8, 16}. A random input is generated for each prediction.
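The measurement procedure (a few rounds, a fixed number of single-input predictions per round, averaged over the rounds) can be factored into a small reusable helper. This is a minimal standard-library sketch assuming any callable `predict` that takes one input; `benchmark` and `dummy_predict` are hypothetical names, with the dummy standing in for a scikit-learn regressor or pipeline.

```python
import random
import time
from typing import Any, Callable, List, Tuple


def benchmark(predict: Callable[[Any], Any],
              make_input: Callable[[], Any],
              rounds: int = 5,
              n: int = 100_000) -> Tuple[List[float], float]:
    """Run `rounds` rounds of `n` single-input predictions each.

    Returns the wall-clock time of every round (in seconds) and the
    average over all rounds. A fresh input is generated for each
    prediction, so input generation is included in the timing, as in
    the Python benchmark above.
    """
    times = []
    for _ in range(rounds):
        start = time.perf_counter()
        for _ in range(n):
            predict(make_input())
        times.append(time.perf_counter() - start)
    return times, sum(times) / rounds


def dummy_predict(x: float) -> float:
    """Hypothetical stand-in for a model's predict()."""
    return 2.0 * x


rng = random.Random(0)
round_times, avg = benchmark(dummy_predict, rng.random, rounds=5, n=10_000)
print(", ".join(f"{t:0.4f}" for t in round_times), f"| avg {avg:0.4f} s")
```

To reproduce the Python case, `pipeline.predict` and `lambda: rng.rand(1, 1)` (from the training script) would be passed instead of the dummy model.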

As we can see, Scala outperforms Python. With a single estimator (n_estimators=1), Scala needs on average about 0.05 s for 100,000 predictions, i.e. about 2 million predictions per second. Python gives us about 4,000 predictions per second, which makes it roughly 500 times slower than the Scala solution.
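The throughput and speed-up figures follow directly from the averaged timings: throughput is the number of predictions divided by the elapsed seconds, and the speed-up is the ratio of the two throughputs. A tiny Python sketch reproducing the rounded numbers quoted above (the helper name is illustrative):

```python
def throughput(predictions: int, seconds: float) -> float:
    """Predictions per second."""
    return predictions / seconds


scala_tps = throughput(100_000, 0.05)  # about 2,000,000 predictions/s
python_tps = 4_000                     # rounded Python figure from the text
speedup = scala_tps / python_tps       # about 500x

print(f"Scala: {scala_tps:,.0f}/s, Python: {python_tps:,}/s, speed-up: {speedup:.0f}x")
```

Put the other way round, 4,000 predictions per second means about 25 s for one round of 100,000 predictions in Python.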

As the number of estimators increases, the performance gap keeps growing.

I wasn't fully precise with the computations and rounded the numbers, since the benchmark was run on a local machine. Even with rounded numbers, the difference in performance between the compared solutions is huge.

The benchmark was done on the following hardware and OS:

Processor: Intel(R) Core(TM) i7-8565U CPU @ 1.80GHz

Memory: 40741MB

Operating System: Ubuntu 20.04.3 LTS

Training ML models with Spark is great, especially when your training sets are too large to fit in a single machine's memory. Serving those models, on the other hand, is much harder. Of course, you can do it in a batch-like manner: run a Spark job that makes predictions and saves them to your database for later use. But what about online prediction? This is a problem MLeap can solve: it lets you serialize models trained with Spark ML to a file (a bundle) and later use them in your application without Spark dependencies and without a Spark cluster.

Recently we helped one of our clients to deploy an NSFW image classification project using MLeap. This worked really well because:

MLeap is certainly an interesting tool that can help you deploy ML models to production, especially if you are looking for a way to speed up your predictions or want a unified engine for making predictions with models trained in scikit-learn, Spark ML and TensorFlow. One thing I am not thrilled about is the MLeap project documentation, which could be more up to date and provide more examples.

MLeap gives you flexibility in how you deploy. You can build containers with your app and ML model and have an application ready to use. You can choose MLeap Serving and use REST calls to load and serve ML models. It is also possible to build a custom server based on MLeap Runtime and load your models from HDFS using the following module:

https://github.com/combust/mleap/blob/master/bundle-hdfs/README.md.

It is worth remembering that MLeap is an engine, not a complete ML serving platform, so you won't find support here for features like A/B testing.


---

Interested in ML and MLOps solutions? How to improve ML processes and scale project deliverability? Watch our MLOps demo and sign up for a free consultation.
