A few months ago I was working on a project with a lot of geospatial data. The data was stored in HDFS and easily accessible through Hive. One of the tasks was to analyze this data, and the first step was to join two datasets on columns containing geographical coordinates. I wanted an easy and efficient solution. But here is the problem: there is very little support for this kind of operation in the Hadoop world.

OK, so what is the problem exactly? Let’s say we have two datasets (represented as Hive tables). The first one is a very large set of geo-tagged tweets. The second one contains the geographic boundaries of cities and places. We want to match them: for every tweet, we want to know the name of its location.

Here are the tables (coordinates are given in simple WKT format):

+-----------+------------------+---------------------------------------------+
| tweets.id | tweets.content   | tweets.location_wkt                         |
+-----------+------------------+---------------------------------------------+
| 11        | Hi there!        | POINT(21.08448028564453 52.245122234020435) |
| 42        | Wow, great trip! | POINT(22.928466796875 54.12185996058409)    |
| 128       | Happy :)         | POINT(13.833160400390625 46.38046653471246) |
...

+-----------+-----------------+----------------------------------------------+
| places.id | places.name     | places.boundaries_wkt                        |
+-----------+-----------------+----------------------------------------------+
| 65        | Warsaw          | POLYGON((20.7693 52.3568,21.2530 52.3567 ... |
| 88        | Suwałki         | POLYGON((22.8900 54.1282,22.9614 54.1282 ... |
| 89        | Triglav         | POLYGON((13.8201 46.3835,13.8462 46.3835 ... |

So how do we do it in Hive or Spark? Without any additional libraries or tricks, we can simply do a cross join, which means: compare every element of the first dataset with every element of the second one and then decide (using some user-defined function) whether there is a match.
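To make the cross join concrete, here is a minimal sketch in plain Python rather than Hive or Spark. It assumes simple WKT without holes or multi-geometries, and the helper names (`parse_point`, `parse_polygon`, `contains`, `cross_join`) as well as the toy coordinates are made up for illustration. The point-in-polygon test uses the standard ray-casting rule and stands in for the user-defined function.

```python
import re


def parse_point(wkt):
    """Parse 'POINT(x y)' into an (x, y) tuple of floats."""
    x, y = re.fullmatch(r"\s*POINT\s*\(\s*(\S+)\s+(\S+)\s*\)\s*", wkt).groups()
    return float(x), float(y)


def parse_polygon(wkt):
    """Parse 'POLYGON((x1 y1,x2 y2,...))' into a list of (x, y) vertices.

    Assumption: a single outer ring, no holes.
    """
    ring = re.fullmatch(r"\s*POLYGON\s*\(\(\s*(.*?)\s*\)\)\s*", wkt).group(1)
    return [tuple(float(v) for v in pair.split()) for pair in ring.split(",")]


def contains(polygon, point):
    """Ray casting: count how many edges a ray going right from the point crosses."""
    x, y = point
    inside = False
    for (x1, y1), (x2, y2) in zip(polygon, polygon[1:] + polygon[:1]):
        if (y1 > y) != (y2 > y):  # the edge straddles the horizontal line through y
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def cross_join(tweets, places):
    """Compare every tweet with every place: len(tweets) * len(places) checks."""
    matches = []
    for tweet_id, point_wkt in tweets:
        point = parse_point(point_wkt)
        for _place_id, name, polygon_wkt in places:
            # The geometry is re-parsed for every pair, much like a UDF call per row pair.
            if contains(parse_polygon(polygon_wkt), point):
                matches.append((tweet_id, name))
    return matches


# Toy data (hypothetical, not the real boundaries from the tables above).
tweets = [(1, "POINT(1 1)"), (2, "POINT(5 5)"), (3, "POINT(9 9)")]
places = [
    (10, "A", "POLYGON((0 0,2 0,2 2,0 2,0 0))"),
    (20, "B", "POLYGON((4 4,6 4,6 6,4 6,4 4))"),
]
print(cross_join(tweets, places))
```

Even in this toy form, the nested loop shows why the number of geometry checks grows with the product of the two dataset sizes.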

But this solution has two major drawbacks:

For sure there must be a better way!

There are a few libraries which could help us with this task, but some of them only give us a nice API (GIS Tools, Magellan), while others can do spatial joins efficiently (SpatialSpark). Let’s look at them one by one!

People from Esri (the international company which provides Geographic Information System software) developed and open sourced GIS Tools for Hadoop. This toolkit contains a few elements, but the two most important ones are:

To install this toolkit you simply have to add the jars to the Hive classpath and then register the UDFs you need. You can find a more detailed tutorial here.

Finally, you will be able to run a Hive query like this:

SELECT * FROM places, tweets
WHERE ST_Intersects(
  ST_GeomFromText(places.boundaries_wkt),
  ST_GeomFromText(tweets.location_wkt)
);

If you know PostGIS (the GIS extension for PostgreSQL), this will look very familiar to you, because the syntax is similar. Unfortunately, this kind of query is very inefficient in Hive. Hive will do a cross join, which means that for big datasets the computation will take an unacceptable amount of time.

There is a small trick that can help a bit with the efficiency problem when doing spatial joins. It is called spatial binning. The idea is to divide the space containing our points and polygons into numbered rectangular blocks. Then we assign to every object (such as a point or a polygon) the number of the block it falls into.

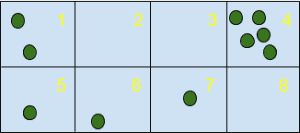

Here is (hopefully) helpful image:

In the example above, the space was divided into 8 blocks. Some blocks are empty and some contain many points. For example, there are 5 points which will get number 4 as their BIN ID.

Going back to our example with tweets (represented as points) and places (represented as polygons) we can assign BIN IDs to both of them and then join them block by block, calling UDFs only for objects with the same BIN ID. It will be more efficient because we will only do cross joins for significantly smaller sets (one block), but many of them (as many as the total number of blocks).

Of course, there are some corner cases (like borders of blocks), but the general idea is as explained. If you want to read more about this technique, please visit Esri Wiki.
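The binning idea can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions, not the Esri implementation: the cell size `CELL`, the function names and the toy data are hypothetical, bin IDs are (column, row) pairs instead of single numbers, and the block-border corner case is handled by registering each polygon in every bin that its bounding box touches.

```python
from collections import defaultdict
from math import floor

CELL = 1.0  # hypothetical bin size, in coordinate units


def bin_id(x, y):
    """Map a coordinate to the (column, row) of the grid cell containing it."""
    return (floor(x / CELL), floor(y / CELL))


def bins_for_bbox(min_x, min_y, max_x, max_y):
    """All bins overlapped by a bounding box (a polygon may span several bins)."""
    x0, y0 = bin_id(min_x, min_y)
    x1, y1 = bin_id(max_x, max_y)
    return [(bx, by) for bx in range(x0, x1 + 1) for by in range(y0, y1 + 1)]


def contains(polygon, point):
    """Ray-casting point-in-polygon test (stand-in for a spatial UDF)."""
    x, y = point
    inside = False
    for (x1, y1), (x2, y2) in zip(polygon, polygon[1:] + polygon[:1]):
        if (y1 > y) != (y2 > y):
            if x < x1 + (y - y1) * (x2 - x1) / (y2 - y1):
                inside = not inside
    return inside


def binned_join(points, polygons):
    """Join points to polygons, testing only pairs that share a bin."""
    polygons_by_bin = defaultdict(list)
    for poly_id, ring in polygons.items():
        xs = [x for x, _ in ring]
        ys = [y for _, y in ring]
        for b in bins_for_bbox(min(xs), min(ys), max(xs), max(ys)):
            polygons_by_bin[b].append(poly_id)

    matches = []
    for point_id, (x, y) in points.items():
        # A point lies in exactly one bin, so each pair is tested at most once.
        for poly_id in polygons_by_bin.get(bin_id(x, y), []):
            if contains(polygons[poly_id], (x, y)):
                matches.append((point_id, poly_id))
    return matches


# Toy data (hypothetical).
polygons = {
    "A": [(0.5, 0.5), (2.5, 0.5), (2.5, 2.5), (0.5, 2.5)],
    "B": [(5.0, 5.0), (6.0, 5.0), (6.0, 6.0), (5.0, 6.0)],
}
points = {1: (1.0, 1.0), 2: (2.2, 2.2), 3: (5.5, 5.5), 4: (8.0, 8.0)}
print(binned_join(points, polygons))
```

The choice of cell size is a trade-off: smaller cells mean fewer candidates per bin, but large polygons then get registered in more bins.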

The second solution I’d like to show you is based on Apache Spark, a more powerful (but also a bit more complicated) tool than Apache Hive.

Magellan is an open source library for geospatial analytics that uses Spark as the underlying engine. Hortonworks published a blog post about it here, and as far as I understand this library was created by one of the company’s engineers.

It is at a very early stage of development and, as of this writing, it only gives us a nice API and unfortunately not very efficient algorithms for spatial joins.

Here is sample code in Spark (using Scala) that does a spatial join using the intersects predicate:

// points and polygons are DataFrames of types magellan.{Point, Polygon}
points.join(polygons).where($"point" intersects $"polygon").show()

It is definitely a library to watch, but for now it is not that useful in my opinion, mainly because it lacks features. If you want to know more, please visit the Magellan GitHub page.

The third solution, and also my favourite one (maybe because I contributed to it a bit ;)), is SpatialSpark. It is another library that uses Apache Spark as the underlying engine. For low-level spatial functions and data structures (like indexes) it uses the great and well-tested JTS library.

Its selling point is that it can do spatial joins efficiently. It supports two kinds of joins:

Here is a sample Spark code snippet that does a broadcast spatial join for our case with tweets and places:

// create RDD with pairs (id, location_geometry) for tweets
val leftGeometryById: RDD[(Long, Geometry)] =
  tweets.map(r => (r.id.toLong, new WKTReader().read(r.location_wkt)))

// right geometry (places) has to be relatively small for broadcast join
val rightGeometryById: RDD[(Long, Geometry)] =
  places.map(r => (r.id.toLong, new WKTReader().read(r.boundaries_wkt)))

// we get matching ids from tweets and places
val matchedIdPairs: RDD[(Long, Long)] =
  BroadcastSpatialJoin(sparkContext, leftGeometryById, rightGeometryById,
    SpatialOperator.Intersects, 0.0)

Unfortunately, there are also drawbacks. The API is not very clean or easy to use. You have to use classes as shown in the example above, or use command line tools that expect data in exactly one format (more details on the GitHub page). An even bigger problem is that the development of SpatialSpark is not very active. Hopefully, this will change in the future.
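The general idea behind a broadcast join can be illustrated without Spark. The sketch below is not SpatialSpark’s implementation: the function names and the data are hypothetical, lists of lists stand in for RDD partitions, and a linear scan over precomputed bounding boxes stands in for a real spatial index such as an R-tree. It only shows the strategy: prepare the small side once, hand a copy to every partition of the large side, and join each partition locally.

```python
def bbox(ring):
    """Bounding box of a polygon ring as (min_x, min_y, max_x, max_y)."""
    xs = [x for x, _ in ring]
    ys = [y for _, y in ring]
    return min(xs), min(ys), max(xs), max(ys)


def contains(polygon, point):
    """Ray-casting point-in-polygon test."""
    x, y = point
    inside = False
    for (x1, y1), (x2, y2) in zip(polygon, polygon[1:] + polygon[:1]):
        if (y1 > y) != (y2 > y):
            if x < x1 + (y - y1) * (x2 - x1) / (y2 - y1):
                inside = not inside
    return inside


def broadcast_spatial_join(point_partitions, polygons):
    """Join every partition of points against one shared copy of the polygons."""
    # "Broadcast" side: computed once, then reused by every partition.
    index = [(poly_id, bbox(ring), ring) for poly_id, ring in polygons]

    def join_partition(partition):
        for point_id, (x, y) in partition:
            for poly_id, (min_x, min_y, max_x, max_y), ring in index:
                # Cheap bounding-box filter first, exact geometry test second.
                if min_x <= x <= max_x and min_y <= y <= max_y and contains(ring, (x, y)):
                    yield (point_id, poly_id)

    # On a cluster each partition would be processed by a different worker.
    return [pair for part in point_partitions for pair in join_partition(part)]


# Toy data (hypothetical): two partitions of points, two small polygons.
point_partitions = [[(1, (1, 1)), (2, (9, 9))], [(3, (5, 5))]]
polygons = [
    (10, [(0, 0), (2, 0), (2, 2), (0, 2)]),
    (20, [(4, 4), (6, 4), (6, 6), (4, 6)]),
]
print(broadcast_spatial_join(point_partitions, polygons))
```

This only works when the broadcast side fits comfortably in the memory of every worker, which is why the places dataset has to be relatively small.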

If you can and want to keep your data in a system other than Hadoop, there are a few possibilities for doing spatial joins. Of course, not all of them have the same set of features, but all of them implement some kind of geospatial search that could be useful when dealing with geographic data.

Here are the links:

As you can probably see by now, there is not much choice when it comes to spatial joins on data stored in Hadoop. If you want to do things efficiently, then SpatialSpark is the only option IMHO. If you want something easier to use, then Esri GIS Tools for Hadoop is the way to go, but unfortunately this only makes sense for really small datasets.

That’s all! Hopefully, you’ve enjoyed this post. Feel free to comment below, especially if you have a suggestion for how our problem could be solved in a better way!
