
In more complex Data Lakes, I usually encounter the following problems in organizations, all of which make data usage very inefficient:

All these problems grow linearly with the scale of the organization. The more data is processed and the more areas Data Analysts touch, the more difficult it gets. Data Mesh tries to address these problems. All assumptions in the approach can be divided into three pillars:

The most important Data Mesh assumption is decomposing data into domains. There is no single rule on how to approach it; it strongly depends on the core business and on how the company operates on a daily basis. To illustrate what a domain is, imagine a store. For this kind of business, we could extract the following domains: customer, supplier, product and order.

Teams should be organized around selected domains and should have strong ownership of the data assigned to them. They should be responsible end to end for all processes: data collection, transformation, cleaning, enrichment and modeling. To achieve that, teams should also be organized vertically, which means they should include all the roles required to deliver data. This usually includes some of the following roles: DataOps, Data Engineers, Data Scientists, Analysts and Domain Experts.

Not every role has to be full-time in a team. Sometimes there is not enough work to fill a full-time position (FTE) for a Data Engineer, so it is also acceptable for technical people to work in a data team part-time. The rest of the time they can be part of a centralized technical team responsible for supporting and maintaining the platform. In this setup, it is important that their highest priority is meeting the needs of the data team.

Even though we work hard on isolating teams, we don’t want to create data silos. Each team should be responsible for providing its prepared datasets to any downstream teams. Different teams can still use data from different domains for their own purposes. Some data duplication is also acceptable.

In my opinion, the second most important rule of the Data Mesh approach is treating data as a product. Data that concerns one domain is a product, and every team is responsible for delivering one or a few products. The API/UI of such a product is the data itself, though not all of it. A very important aspect is hiding the internals of a product. Raw data and intermediate states shouldn’t be available outside. Only a presentation layer that contains modeled, enriched and clean data should be visible outside. Managing everything via code repositories also lets other teams propose and contribute adjustments to the presentation layer, but the owning team is always responsible for verifying the changes and making sure they are consistent with its direction. The owning team is responsible for the vision of how the data is delivered and how it should be used from the perspective of its domain.
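The split between product internals and the presentation layer can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Dataset` and `DataProduct` classes, the layer names and the dataset names are hypothetical and do not come from any particular Data Mesh tool.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Dataset:
    name: str
    layer: str  # assumed layer names: "raw", "intermediate", "presentation"


@dataclass
class DataProduct:
    domain: str
    owner_team: str
    datasets: list = field(default_factory=list)

    def public_datasets(self):
        """Return only the dataset names that other teams are allowed to see."""
        return [d.name for d in self.datasets if d.layer == "presentation"]


# Hypothetical product for the "order" domain of the store example.
orders = DataProduct(
    domain="order",
    owner_team="order-team",
    datasets=[
        Dataset("orders_raw_events", "raw"),
        Dataset("orders_deduplicated", "intermediate"),
        Dataset("orders_daily", "presentation"),
    ],
)

print(orders.public_datasets())
```

Consumers in other teams would only ever see the result of `public_datasets()`; the raw and intermediate datasets stay an implementation detail that the owning team can change freely.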

The implementation of data processing pipelines and the storage of intermediate data are internals of a product. Because of that, they could theoretically be implemented in any way. In practice, we try to unify tools and infrastructure so that they are common across the organization. This makes it easier to provide support, move people between projects and onboard new team members. I will write more about the technical aspects of the common platform in the next section. The presentation layer of each product, on the other hand, is available to the whole organization, so it is crucial to introduce strict rules on how data is stored and made available to everyone.

Every team wants its product to be used but also wants to spend as little time as possible on supporting it. That’s why it is very important to introduce a standard approach to data governance; in particular, data should be easily discoverable by anyone looking for it. By collecting metadata and introducing self-describing semantics and syntax, it is possible to make data independently discoverable, understandable and consumable. Another important aspect is trustworthiness and truthfulness. No one wants to use data that they can't trust. Just as we write tests for applications, data must be checked automatically by processes that assure its quality.
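As a rough illustration of checking data the way we test applications, the sketch below runs a few automated checks over a presentation-layer dataset. The column names (`order_id`, `amount`), the rules and the helper functions are assumptions made up for this example; in a real platform such checks would typically be handled by a dedicated data quality tool.

```python
def check_not_null(rows, column):
    """Every row must have a value in the given column."""
    return all(row.get(column) is not None for row in rows)


def check_unique(rows, column):
    """No value in the given column may appear more than once."""
    values = [row.get(column) for row in rows]
    return len(values) == len(set(values))


def run_checks(rows):
    """Run all checks and return a mapping of check name to pass/fail."""
    return {
        "order_id_not_null": check_not_null(rows, "order_id"),
        "order_id_unique": check_unique(rows, "order_id"),
        "amount_non_negative": all(row["amount"] >= 0 for row in rows),
    }


def failed_checks(rows):
    """Names of the checks that did not pass, in declaration order."""
    return [name for name, passed in run_checks(rows).items() if not passed]


good_rows = [
    {"order_id": 1, "amount": 10.0},
    {"order_id": 2, "amount": 5.5},
]
bad_rows = [
    {"order_id": 1, "amount": 10.0},
    {"order_id": 1, "amount": -3.0},  # duplicate id and negative amount
]

print(failed_checks(good_rows))
print(failed_checks(bad_rows))
```

A pipeline could refuse to publish a new version of the presentation layer whenever `failed_checks` returns a non-empty list, much like a failing test blocks an application release.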

Even though teams are separated, they still use the same infrastructure under the hood. That’s why proper management of roles and permissions in the data ecosystem is very important. Permissions should separate team responsibilities and hide product internals. A rule from backend engineering applies here: a database is like a toothbrush - it should be used by only one application. The internals of a data product should be available only inside the product, and everyone else should have only read permissions for the presentation layer.
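The permission rule described above is simple enough to express as a single function. The sketch below is a hypothetical model, not the API of any real access-control system: the team names, layer names and the `can_access` helper are assumptions used only to make the rule concrete.

```python
def can_access(team, owner_team, layer, action):
    """Decide whether `team` may perform `action` on a dataset.

    Assumed rule: the owning team may do anything inside its product;
    every other team may only read the presentation layer.
    """
    if team == owner_team:
        return True
    return layer == "presentation" and action == "read"


# The owning team can write to its own raw data.
print(can_access("order-team", "order-team", "raw", "write"))
# Another team can read the presentation layer...
print(can_access("customer-team", "order-team", "presentation", "read"))
# ...but cannot write to it or look at the internals.
print(can_access("customer-team", "order-team", "presentation", "write"))
print(can_access("customer-team", "order-team", "raw", "read"))
```

In practice the same rule would be expressed as roles and grants in the shared infrastructure, but the logic stays the same: full control for the owner, read-only access to the presentation layer for everyone else.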

To follow the rules described above, we need to build a platform that serves these needs. We need to select tools, integrate them and deliver them in a way that less technical people can use. The goal of Data Mesh is to provide a platform that all data teams can use with minimal or no support from other dedicated teams. Although processing pipelines are part of the internal complexity of data domains and are implemented by the data teams themselves, the platform should provide tools and frameworks that make this as easy as possible. We want teams to be self-sufficient, and we want to minimize the involvement of Data Engineers in the daily work of Analysts and Data Scientists.

A very important aspect of creating such an environment is automation. All possible actions, such as deployments or compilations, should be automated transparently, out of the box.

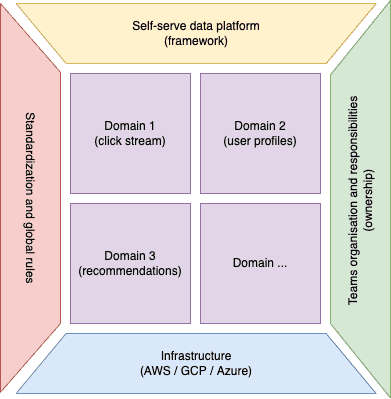

Below, a picture can illustrate the Data Mesh approach looking from above.

To sum up, at the center we have identified, selected and well-defined bounded contexts, around which teams are formed. The teams, which have strong ownership, follow standards and global organization rules when creating and delivering solutions (data products). Teams use a common platform with a set of tools that make their work easier. The platform is deployed on infrastructure that can be either on-premise or cloud-based.

Most Data Engineers, including me, originally come from backend development or are at least familiar with the Microservices concept. For these people, I think Microservices is the best analogy to explain Data Mesh. About 10 years ago, the only widely known approach to building systems was the monolith. Time went by, applications grew and monoliths became unmanageable. It is very similar in the data world. Data lakes, which are still popular, have reached critical mass and are no longer manageable. They are like monoliths, and the next step in the evolution is Data Mesh. As in Microservices, Data Mesh promotes the separation of data into bounded contexts. Communication between bounded contexts should be based on rules and contracts (the presentation layer). Automation is necessary to manage multiple separate products. Microservices usually share the same infrastructure: they are deployed either on Kubernetes or on any other system that supports containers. In the Data Mesh approach, Data Products also share the same infrastructure. It can be the old and well-known Hadoop, the cloud-based Google BigQuery or the platform-agnostic Snowflake.
