Apache Flink is an open-source stream processing framework that supports both batch and streaming programs. In streaming mode, data is processed continuously as it flows through the system, with no fixed time limit on when output is produced. Flink is used in a lot of projects, and we can see it in action in rule-based alerting, anomaly detection systems, web applications with personalized content and personalized offers in e-commerce. It can be integrated with the Hadoop ecosystem and Kubernetes, or it can run stand-alone.

Kubernetes has become the major player in the containerization field. It provides a lot of advantages and new challenges - we can call it the next step of IT evolution. Its maturity and main features allow more and more services to become available and to be deployed directly on Kubernetes. Apache Flink is a great example of such a service. We can find multiple ways to install and run it directly on Kubernetes which provide better scalability, easier implementation of Continuous Integration and Continuous Deployment, easier version upgrades and container reuse.

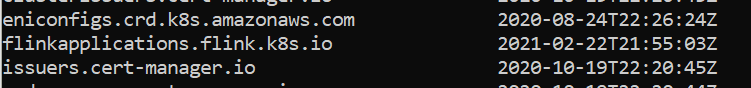

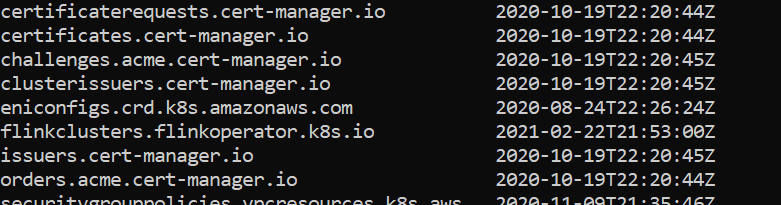

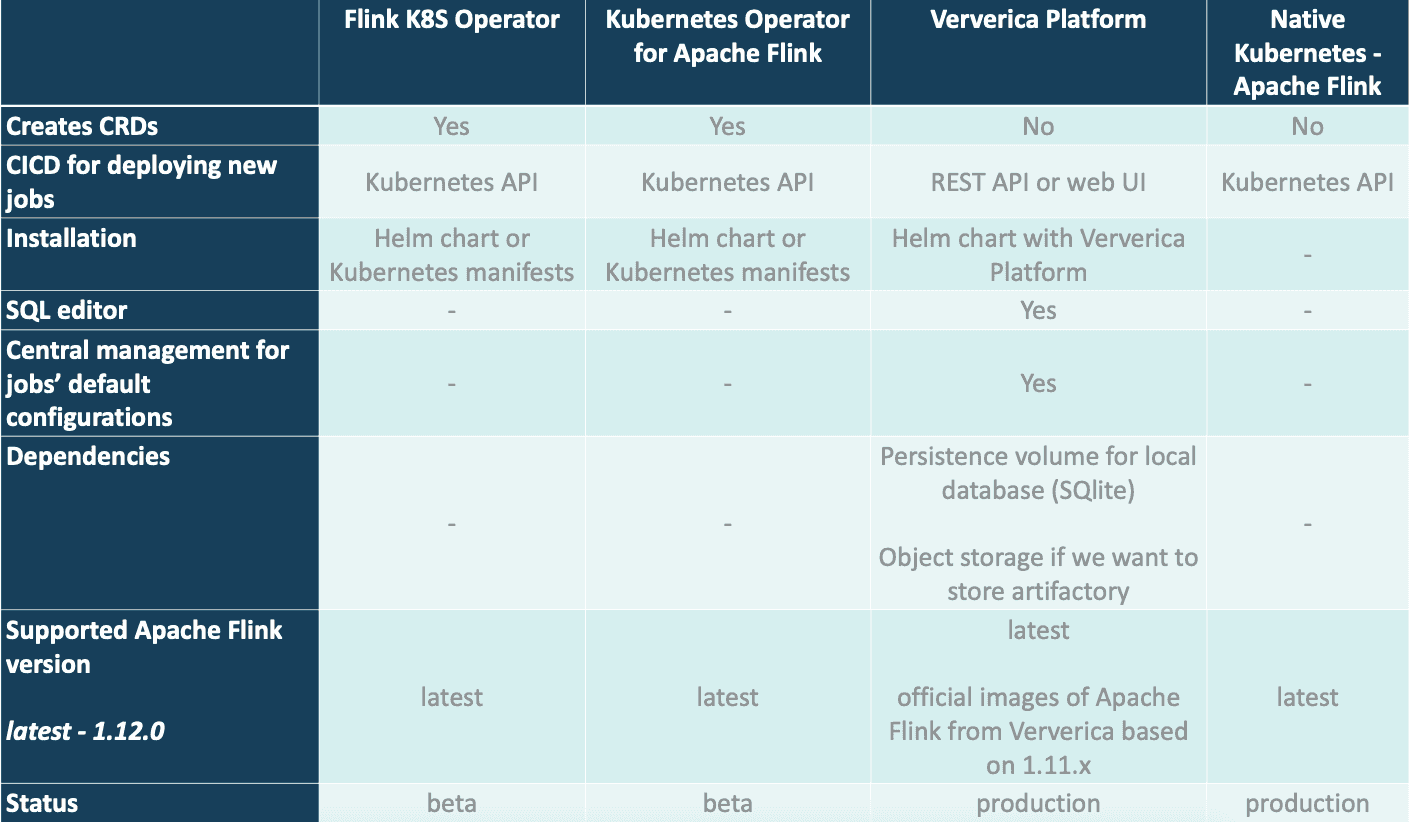

The most important difference between the solutions described below is that they rely on different operators. Operators are software extensions to Kubernetes that make use of custom resources to manage applications and their components. They are used because of their flexibility (developers can create their own objects and simplify deployment) and because they make it possible to add custom logic. However, preparing and validating an operator is not simple and requires a lot of work.

Note: by default, Apache Flink exposes its own web UI with a description of the job, its metrics, a diagram of the application, and information about the TaskManagers.

Let’s start with monitoring. We can set up an exporter, such as the Prometheus exporter, add annotations to our pods and start scraping metrics from all the pods on which we run Flink jobs. This is quite simple with Prometheus and its Kubernetes service discovery feature, where we can define the target annotations.
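As a rough illustration of the monitoring setup, the sketch below generates the two pieces of configuration involved: the Flink settings that enable the bundled Prometheus reporter and the pod annotations that a scrape job can match. The `prometheus.io/*` annotation names are a widely used convention rather than something Prometheus understands on its own, so the sketch assumes the scrape configuration contains relabeling rules for them. Port 9249 is the reporter's default, and the function names are hypothetical.

```python
def flink_reporter_config(port: int = 9249) -> dict:
    """Flink settings that enable the bundled Prometheus reporter (Flink 1.12 style keys)."""
    return {
        "metrics.reporter.prom.class":
            "org.apache.flink.metrics.prometheus.PrometheusReporter",
        "metrics.reporter.prom.port": str(port),
    }


def prometheus_pod_annotations(port: int = 9249) -> dict:
    """Pod annotations for Prometheus Kubernetes service discovery.

    Assumption: the Prometheus scrape config has relabeling rules that read
    these conventional annotation names; they are not built into Prometheus.
    """
    return {
        "prometheus.io/scrape": "true",
        "prometheus.io/port": str(port),
    }


def to_flink_conf(settings: dict) -> str:
    """Render settings as 'key: value' lines for flink-conf.yaml."""
    return "\n".join(f"{key}: {value}" for key, value in settings.items())


if __name__ == "__main__":
    print(to_flink_conf(flink_reporter_config()))
    print(prometheus_pod_annotations())
```

The annotations go into the pod template metadata of the JobManager and TaskManager pods, and the reporter port has to match the annotated port.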

The second thing is high availability. We need to start by connecting Flink jobs to highly available object storage or HDFS, where we store data and where Flink can save its checkpoints and savepoints. Moreover, running two JobManagers in a high availability setup requires an additional coordination service. Here we can either connect them to ZooKeeper or use Kubernetes itself to manage the active Flink instance.
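The two high availability options can be sketched as a small helper that produces the relevant `flink-conf.yaml` entries. This is a minimal sketch assuming Flink 1.12 option names; the helper name, the storage path, the cluster id and the ZooKeeper address in the usage note are hypothetical placeholders.

```python
def ha_config(mode: str, storage_dir: str, cluster_id: str,
              zk_quorum: str = "") -> dict:
    """Return flink-conf.yaml entries for a high availability setup.

    mode is either "zookeeper" or "kubernetes" (Flink 1.12 option names).
    storage_dir must point at durable storage such as object storage or HDFS.
    """
    if mode == "zookeeper":
        if not zk_quorum:
            raise ValueError("zk_quorum is required for ZooKeeper HA")
        return {
            "high-availability": "zookeeper",
            "high-availability.storageDir": storage_dir,
            "high-availability.cluster-id": cluster_id,
            "high-availability.zookeeper.quorum": zk_quorum,
        }
    if mode == "kubernetes":
        return {
            "high-availability":
                "org.apache.flink.kubernetes.highavailability."
                "KubernetesHaServicesFactory",
            "high-availability.storageDir": storage_dir,
            "kubernetes.cluster-id": cluster_id,
        }
    raise ValueError(f"unknown HA mode: {mode}")
```

For example, `ha_config("kubernetes", "s3://my-bucket/flink/ha", "my-flink-job")` returns the entries for the Kubernetes-based variant, while the ZooKeeper variant additionally needs a quorum address such as `zk-0:2181`.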

GitHub repository:

https://github.com/lyft/flinkk8soperator

This operator is developed by Lyft and is a great example of software that is constantly being improved by the community. The operator acts as a control plane that manages the complete deployment lifecycle of the application. It is worth mentioning that the latest version, v0.5.0, was released at the end of April 2020. There is also a dedicated Slack workspace for users. The README notes that the project is still in beta.

Installation and upgrades are quite simple. We can use Kubernetes manifests or a Helm chart, and if we want to change something, we can fork the repository on GitHub and modify it as needed.

The CI/CD process is quite simple. We can use Kubernetes manifests or Helm charts that contain all updates and configuration. Here, we use custom definitions managed by the operator, such as the JobManager Deployment and Service, or the TaskManager. If you have experience with deploying applications to Kubernetes, you'll know it is a smooth process. We can run additional checks to validate that our job has started successfully by calling the Flink job directly. This operator also delivers blue-green deployment, which is a valuable feature. Blue-green deployment is a technique that reduces downtime and risk by running two identical production environments called Blue and Green. At any time, only one of the environments is live and serves all production traffic. In this case, we replace the old job with the new one while both are running, and only one of them processes data.
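The post-deployment check mentioned above can be sketched against Flink's REST API, whose `/jobs/overview` endpoint lists jobs together with their state. This is a minimal sketch rather than the operator's own mechanism; the function names are hypothetical, and it assumes the JobManager REST endpoint is reachable from the CI/CD runner.

```python
import json
from urllib.request import urlopen


def running_jobs(overview: dict) -> list:
    """Names of jobs reported as RUNNING in a /jobs/overview response."""
    return [
        job["name"]
        for job in overview.get("jobs", [])
        if job.get("state") == "RUNNING"
    ]


def job_is_running(overview: dict, job_name: str) -> bool:
    """True if a job with the given name is currently RUNNING."""
    return job_name in running_jobs(overview)


def fetch_overview(base_url: str, timeout: float = 10.0) -> dict:
    """Fetch the job overview from the JobManager REST endpoint."""
    with urlopen(f"{base_url.rstrip('/')}/jobs/overview", timeout=timeout) as resp:
        return json.load(resp)
```

In a pipeline step this could be used as `job_is_running(fetch_overview("http://localhost:8081"), "my-job")`, where the address and job name are placeholders, typically retried for a while before the deployment is marked as failed.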

Submitting new jobs is not particularly fast, and the speed depends mostly on the amount of available resources. A blue-green deployment can take several minutes, while some of the other solutions described here are much faster.

Job configuration is managed in the same way as on a YARN cluster, using templates with ConfigMaps stored in the repository. There are no problems with connecting Flink to object storage or to kerberized components such as Kafka or HDFS.

Advantages:

Disadvantages:

GitHub repository:

https://github.com/GoogleCloudPlatform/flink-on-k8s-operator

The operator is hosted in the Google Cloud Platform GitHub organization, but it’s worth mentioning that it’s not officially supported by Google. Installing the operator is pretty straightforward: we can use Kubernetes manifests or Helm charts to deploy it in our environment.

It creates a custom resource definition FlinkCluster and runs a controller Pod to keep watching custom resources. Once a FlinkCluster custom resource is created and detected by the controller, the controller creates the underlying Kubernetes resource (e.g., JobManager Pod) based on the spec of the custom resource.

CI/CD pipelines are simple to build because we describe our Flink job in a Helm chart or Kubernetes manifest, so it's quite a typical setup for an application running in a containerized environment. Of course, it’s important to check that a job is up by calling the Kubernetes API and getting the status of Flink’s pods and of the custom resource.
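The pod status check can be sketched as a function over the pod list that the Kubernetes API returns (the same JSON that `kubectl get pods -o json` prints). This is a minimal sketch; the function name is hypothetical, and how the pod list is obtained and filtered (for example by label selector) is left to the pipeline.

```python
def unhealthy_pods(pod_list: dict) -> list:
    """Names of pods that are not Running or have containers that are not ready.

    pod_list is a Kubernetes PodList object as a dict.
    """
    bad = []
    for pod in pod_list.get("items", []):
        status = pod.get("status", {})
        containers = status.get("containerStatuses", [])
        # A pod with no reported container statuses is treated as not ready.
        ready = bool(containers) and all(c.get("ready") for c in containers)
        if status.get("phase") != "Running" or not ready:
            bad.append(pod["metadata"]["name"])
    return bad
```

An empty result means that every JobManager and TaskManager pod in the list is running and ready. The state of the job itself still has to be read from the custom resource or from Flink.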

The latest release, version 0.2.0, was published in September 2020 and is still quite an early beta. It makes it possible to restart failed jobs from the last savepoint, and we can also set up batch scheduling for JobManager and TaskManager pods.

Advantages:

Disadvantages:

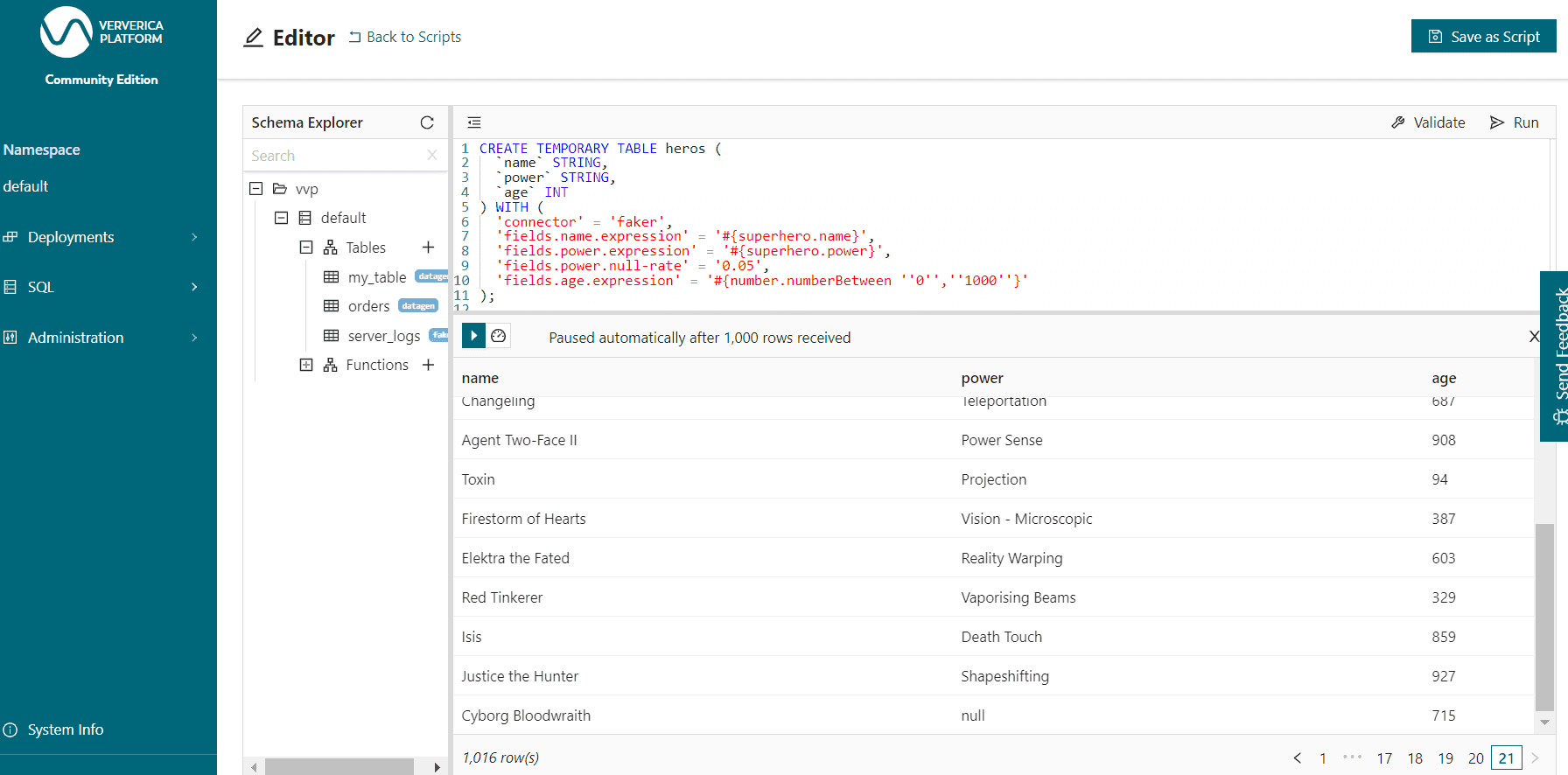

Ververica Platform is created by the team behind Apache Flink, so we can be sure that we are dealing with a product from an experienced company that understands Flink and how it works under the hood. One of the most distinctive features of Ververica Platform is a web dashboard in which the user can create deployment targets (by naming a target and assigning it to a chosen namespace), define the default configuration of Flink jobs, create session clusters on which to run Flink SQL queries or jobs, and manage the lifecycle of an application. There is also quite a powerful REST API for managing job configuration and creating new jobs. Ververica applies new enhancements based on user feedback.

It is also a useful platform for running Flink SQL queries directly from Ververica and viewing the results in the UI instead of the terminal.

The CI/CD process relies on the REST API, to which we send requests with configuration in YAML or JSON format, so it can easily be implemented in any CI/CD tool such as GitLab CI, Jenkins or GitHub Actions.
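A pipeline step that submits such a configuration could look roughly like the sketch below. It only builds the HTTP request and leaves sending to the caller. The endpoint path `/api/v1/namespaces/<namespace>/deployments` and the `application/yaml` content type are assumptions that should be checked against the Ververica Platform API documentation for the installed version, and the function name is hypothetical.

```python
from urllib.request import Request


def build_deployment_request(base_url: str, namespace: str,
                             deployment_yaml: str) -> Request:
    """Build a POST request that submits a deployment definition.

    Assumption: the platform accepts YAML at
    /api/v1/namespaces/<namespace>/deployments; verify this path against
    the API documentation of the installed version.
    """
    url = f"{base_url.rstrip('/')}/api/v1/namespaces/{namespace}/deployments"
    headers = {
        "Content-Type": "application/yaml",
        "Accept": "application/json",
    }
    return Request(
        url,
        data=deployment_yaml.encode("utf-8"),
        headers=headers,
        method="POST",
    )
```

The returned request can be sent with `urllib.request.urlopen(request)`. Editions with authentication enabled would additionally need credentials, which this sketch does not cover.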

The Ververica Platform comes with its own SQLite database in which it stores all its data (in every edition except the Community Edition, it can be replaced with PostgreSQL or MySQL). The platform also simplifies the configuration of external blob storage, which can be used as the artifact store for Flink JARs as well as the place to store the savepoints and checkpoints of jobs. It works with S3-compatible object storage as well as with kerberized HDFS.

The Ververica Platform is available in three editions: a free Community Edition, which is limited to one namespace for jobs and has no authentication, a Startup Edition and an Enterprise Edition. It’s worth mentioning that the authors are constantly improving their product and we can see frequent updates.

Advantages:

Disadvantages:

Documentation:

https://ci.apache.org/projects/flink/flink-docs-release-1.12/deployment/resource-providers/standalone/kubernetes.html

We can’t forget about the possibilities that are already available directly within Apache Flink. Since release 1.12.0, Flink has improved many aspects of running on Kubernetes. It doesn’t require any custom resources or additional applications for managing Flink.

We have the following deployments in this case:

Native Kubernetes doesn’t introduce any operator and the installation is quite simple. Moreover, we can expect it to keep receiving enhancements, as it is supported by a wide group of Apache Flink contributors. Currently it’s quite limited, but in some cases it may be enough.

The CI/CD process is the same as for any other application running in Kubernetes: we deploy everything straight from the CI/CD tool and then verify whether the application is up or has any issues.

Advantages:

Disadvantages:

Based on our experience, we can recommend Ververica Platform as a reliable solution for most use cases. It provides a fast way to run a Flink job: restarting a job on a new cluster is quite fast in comparison to, for example, Flink running on the Lyft operator, and it offers some useful features such as the Flink SQL editor.

Of course, the choice of the right Flink operator depends on the exact use case, the technical requirements and the number of jobs. All the presented operators come from strong players in the Big Data market.

At GetInData you have access to 50+ distributed systems and cloud experts working with big data systems based on Apache Flink in multiple configurations. Do not hesitate to contact us, our team will be happy to discuss your real-time big data streaming project.
