GetInData Becomes Xebia: A New Chapter Begins

We’re excited to share an important milestone in our journey - starting July 1, 2025, GetInData will officially operate under the name Xebia Poland…

In recent times, Machine Learning has seen a surge in popularity. From Google to tech startups, everyone is rushing to use Machine Learning to strengthen their position in the market. However, not all organizations have the know-how and resources to deploy Machine Learning at a large scale. Furthermore, as we know from Uncle Ben (Spider-Man's uncle), “With great power comes great responsibility”: Big Data is a great opportunity to grow a business, yet it also carries the risk of never reaching its full potential. So today I want to highlight and explain 5 Machine Learning problems that result in the ineffective use of data.

One of the most significant developments of recent years has been the growth in the amount of available data, which has made it possible to apply artificial intelligence methods much more widely in practice. In this way, data has become fuel for the modern economy and initiated the fourth industrial revolution. Accessing this fuel on demand is critical to any organization that relies heavily on automated, data-driven business processes. As a result, companies using big data technologies are rushing to find new ways to extract business value from their data.

AI has opened up a spectrum of opportunities that companies could previously only dream of. Companies are therefore racing to create faster and more accurate ML models, which allow them to optimize their business processes and gain a competitive advantage. However, the constant need to train, deploy, monitor and improve models creates a big challenge for engineering teams, especially when processing large amounts of data and making automated decisions in real time.

MLOps is a set of practices that addresses these challenges. Its purpose is to keep Machine Learning working at maximum effectiveness.

So, to the point. What are the 5 areas in the Machine Learning process where the implementation of MLOps is crucial?

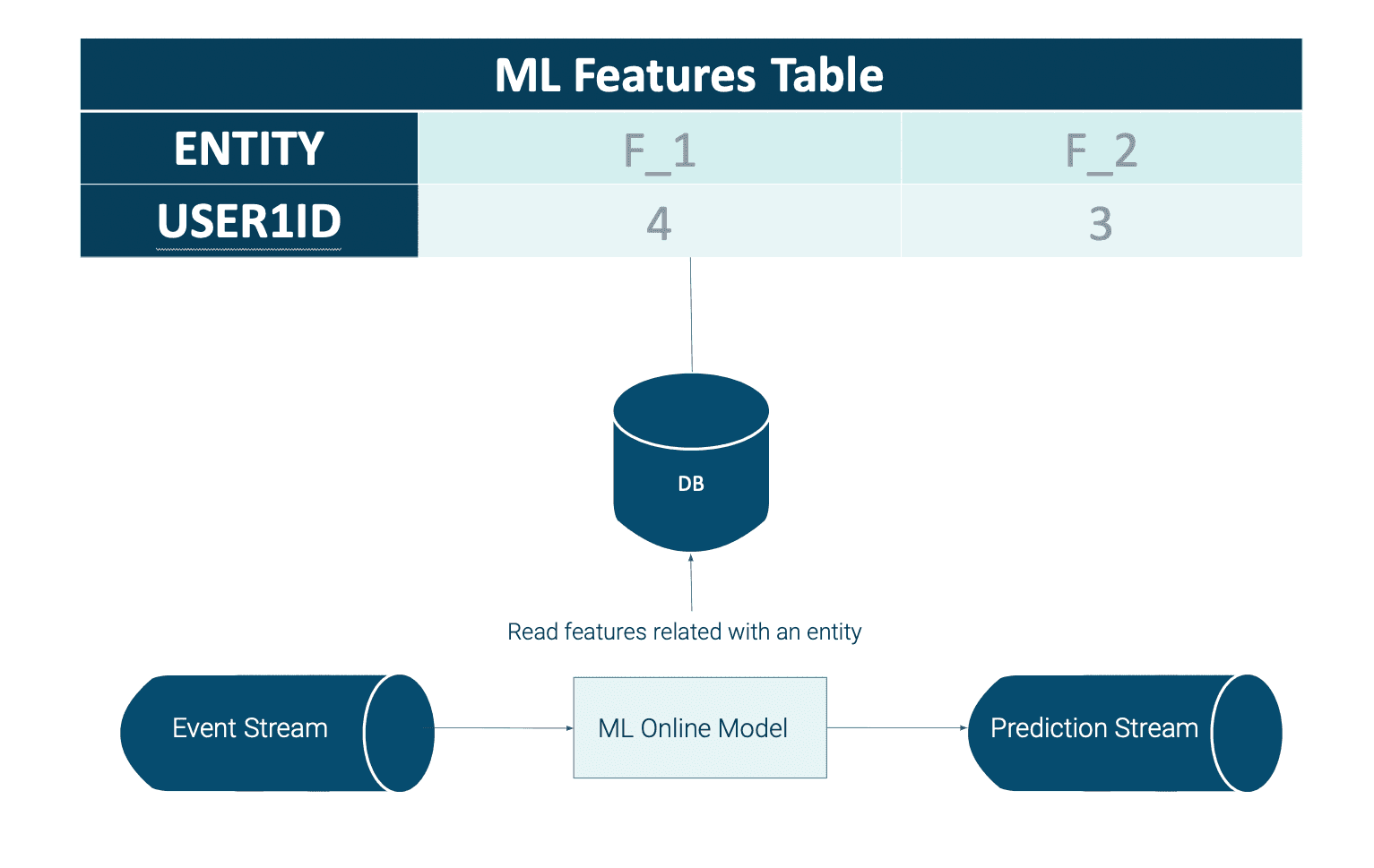

The first area where effectiveness can be lost is the process of making online predictions.

Let's assume that we are building a system to detect financial fraud for a bank. One element of our system is a service that uses an ML model to analyze loan requests and decide whether or not we are dealing with fraud. When a request comes in, the service must query the database for the current data of a given user, such as "scoring", "number of loan requests in the past seven days", "average income", etc. In Machine Learning terminology, this data is called the user's features.
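To make the request flow above concrete, here is a minimal sketch of such a service. Everything in it is an assumption made for illustration: the in-memory `FEATURE_DB` stands in for the real database, `toy_fraud_model` is a hand-written placeholder rather than a trained model, and the feature names simply mirror the examples from the text.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Hypothetical in-memory stand-in for the database holding current user features.
FEATURE_DB: Dict[str, Dict[str, float]] = {
    "user-1": {"scoring": 0.82, "loan_requests_7d": 1, "average_income": 5200.0},
    "user-2": {"scoring": 0.31, "loan_requests_7d": 6, "average_income": 1800.0},
}


@dataclass
class LoanRequest:
    user_id: str
    amount: float


def toy_fraud_model(features: Dict[str, float]) -> float:
    """Placeholder for a trained model: returns a made-up fraud score in [0, 1]."""
    score = 0.1 * features["loan_requests_7d"] + 0.5 * (1.0 - features["scoring"])
    return min(1.0, score)


def is_fraud(
    request: LoanRequest,
    model: Callable[[Dict[str, float]], float] = toy_fraud_model,
    threshold: float = 0.5,
) -> bool:
    """Enrich the request with the user's latest features, then score it."""
    # Online lookup: this read sits on the critical path of every prediction.
    features = dict(FEATURE_DB[request.user_id])
    features["amount"] = request.amount
    return model(features) >= threshold


print(is_fraud(LoanRequest("user-1", 1000.0)))
print(is_fraud(LoanRequest("user-2", 1000.0)))
```

The point of the sketch is the lookup inside `is_fraud`: the prediction can only be as fresh and as fast as that read.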

As we can see, the ML model requires knowledge of the latest facts about the user to make the correct prediction. The seemingly simple activity of enriching data raises several Machine Learning problems that we have to deal with:

Certainly, when designing a real-time, AI-driven decision process in the big data world, architects should ask these questions.

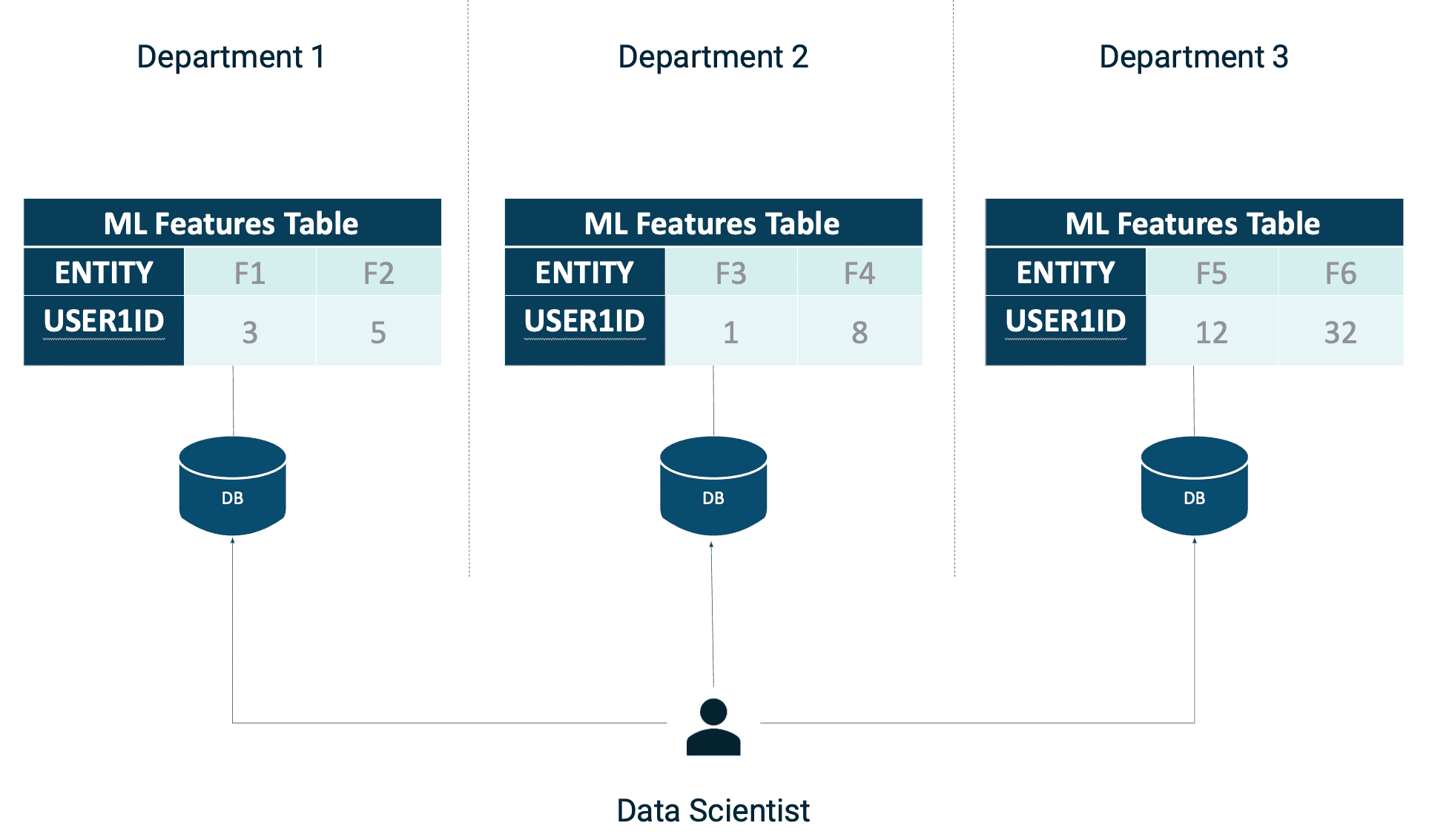

Any organization that wants to implement a data-driven approach in its business processes knows how vital data warehousing is to the enterprise architecture. Unfortunately, many companies keep their data in warehouses scattered across different departments. Storing data this way creates data silos, which have a negative impact on the daily work of the IT department.

When data scientists want to retrieve data of interest, they must search through datasets located in many warehouses in different locations. This is very inconvenient and causes problems such as:

As a result, your organization is less efficient, you don't innovate quickly enough, and you can't make the most of your data. Certainly, simple and universal access to data is crucial for all enterprises that want to keep up with the rapidly changing world.

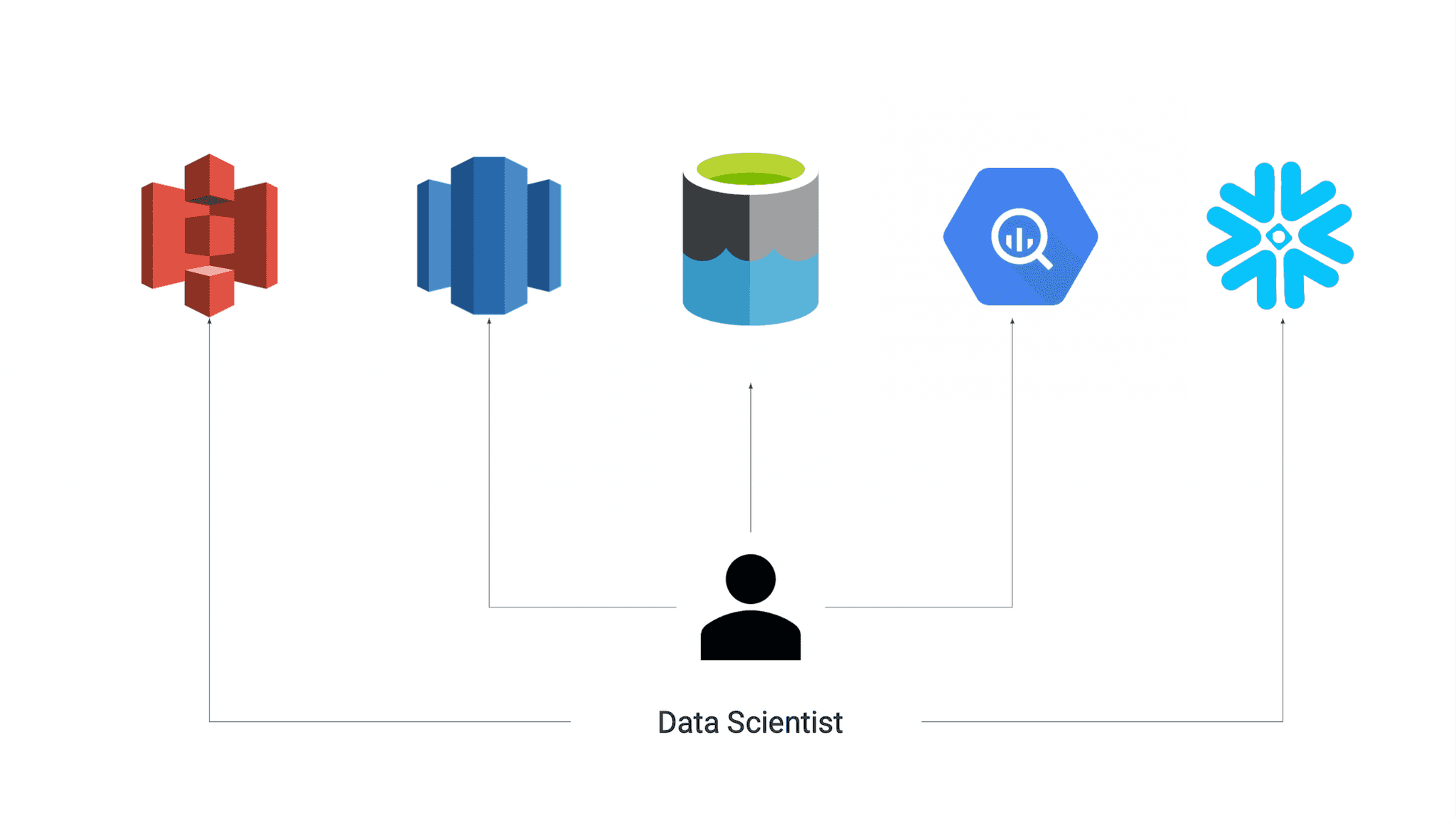

In the modern approach to application design, it is common practice to select the database that best suits the problem being solved. This approach is known as polyglot persistence. The polyglot persistence pattern uses two or more data storage technologies for different types of data. For example, you use MySQL for relational data and Redis for fast caching. When using polyglot persistence, you can choose the database that best suits your performance, scalability, and security needs.
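The MySQL plus Redis example can be sketched as a simple cache-aside read. To keep the sketch runnable without any servers, SQLite stands in for MySQL and a plain dictionary stands in for Redis. Both substitutions, as well as the `users` table and the `get_user_name` helper, are assumptions made for illustration.

```python
import sqlite3
from typing import Dict, Optional

# Relational store: SQLite stands in for MySQL so the sketch runs anywhere.
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT NOT NULL)")
db.execute("INSERT INTO users (id, name) VALUES (1, 'Alice')")
db.commit()

# Fast cache: a plain dict stands in for Redis.
cache: Dict[int, str] = {}


def get_user_name(user_id: int) -> Optional[str]:
    """Cache-aside read: try the cache first, fall back to the relational store."""
    if user_id in cache:
        return cache[user_id]
    row = db.execute("SELECT name FROM users WHERE id = ?", (user_id,)).fetchone()
    if row is None:
        return None
    cache[user_id] = row[0]  # populate the cache for subsequent reads
    return row[0]


print(get_user_name(1))  # first call reads from the relational store
print(get_user_name(1))  # second call is served from the cache
```

Even in this toy version the application has to know about two storage technologies and keep them consistent.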

However, polyglot persistence poses new challenges in supporting applications that use different database technologies, especially when working in a multi-cloud environment, where each cloud provider offers its own warehouse as a service:

I think I managed to draw your attention to the most common problems of using different technologies for storing data. However, it is essential to remember that each tool has its strengths and weaknesses, so we should always choose the right tool for the problem.

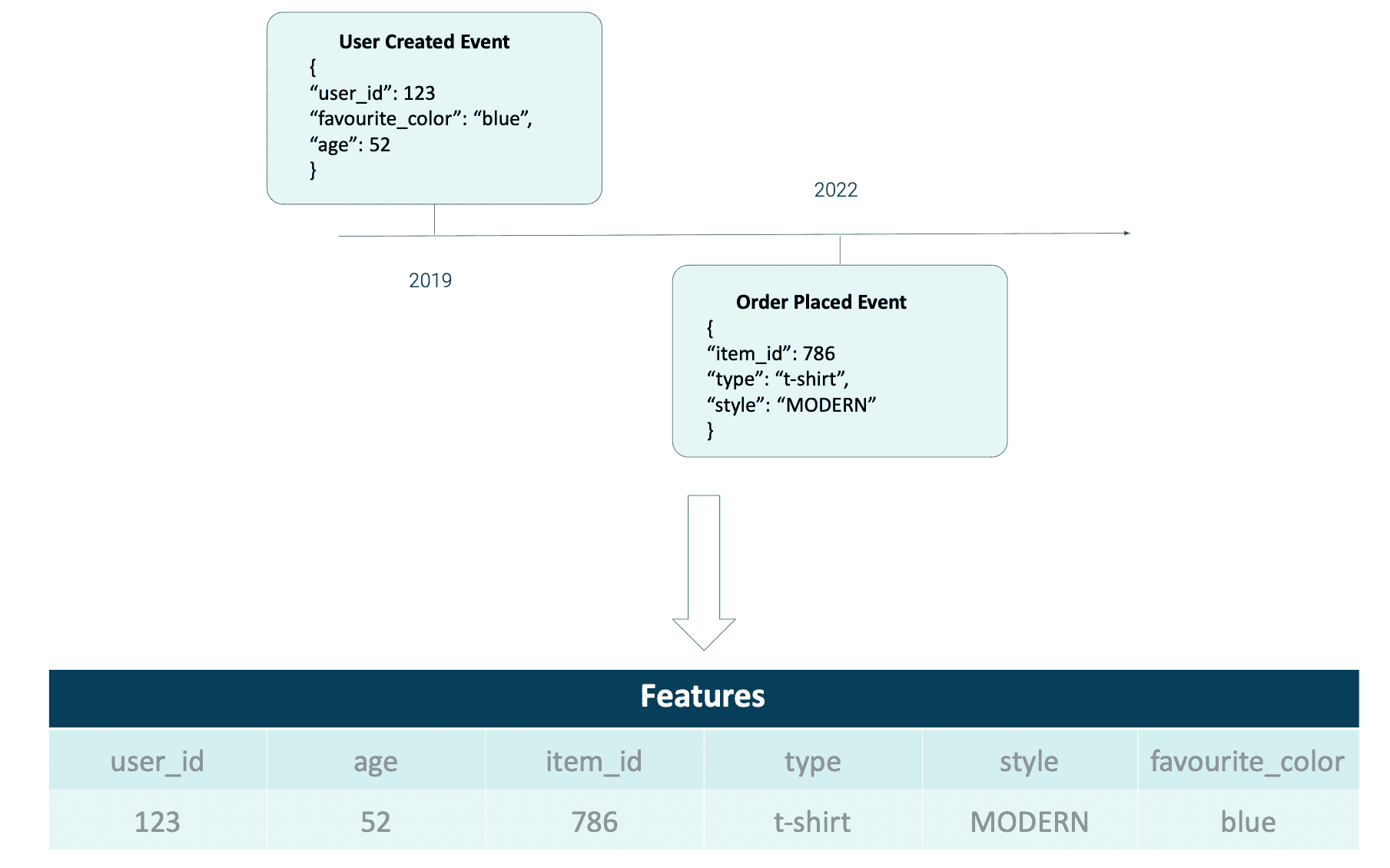

When it comes to data analysis and prediction in Machine Learning, one of the most important tasks is creating the dataset used for training and testing. Unfortunately, it isn't as easy as many people think, especially when we need to track changes in the domain over time. For example, let's assume we are working on an ML model for targeted email campaigns for online store users. We have a stream of events with information about the user, such as age and favorite color, and events related to orders placed in the past.

We want to create a view of our domain that combines information about users with their orders. This seemingly simple operation turns out to be more complicated. We can't simply join events on the user, because our domain changes over time. To join events correctly, we need to know the state of the domain at the exact moment each event occurred. For example, a user who was 52 in 2019 is 55 years old in 2022. When building ML models on past data, it is essential to remember that the world has changed since that data was collected:

Extracting features for ML models from data streams is a challenge that enterprises face today. As data volume and velocity continue to increase, enterprises need to find ways to manage this growth efficiently and at scale.
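The need to know the domain's state at the moment each event occurred is often called a point-in-time join. Below is a minimal sketch using only the standard library. The data is made up, and the `user_history` structure and the `state_as_of` and `point_in_time_join` helpers are hypothetical names introduced for this example.

```python
from bisect import bisect_right
from typing import Any, Dict, List, Optional, Tuple

# Per-user snapshots as (year, attributes), sorted by year. Made-up data.
user_history: Dict[str, List[Tuple[int, Dict[str, Any]]]] = {
    "u1": [
        (2019, {"age": 52, "favorite_color": "blue"}),
        (2022, {"age": 55, "favorite_color": "green"}),
    ],
}

orders: List[Dict[str, Any]] = [
    {"user_id": "u1", "year": 2018, "order_id": "o-0"},
    {"user_id": "u1", "year": 2019, "order_id": "o-1"},
    {"user_id": "u1", "year": 2022, "order_id": "o-2"},
]


def state_as_of(user_id: str, year: int) -> Optional[Dict[str, Any]]:
    """Return the latest snapshot recorded at or before `year`, if any."""
    history = user_history.get(user_id, [])
    years = [snapshot_year for snapshot_year, _ in history]
    idx = bisect_right(years, year)
    return history[idx - 1][1] if idx > 0 else None


def point_in_time_join(events: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Attach to each order the user state that was valid when it was placed."""
    return [
        {**event, "user_state": state_as_of(event["user_id"], event["year"])}
        for event in events
    ]


for row in point_in_time_join(orders):
    print(row["order_id"], row["user_state"])
```

A naive join against the latest user record would give every order the 2022 state, which leaks information from the future into the training set.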

For each feature used for training ML models, its expected range of values and its distribution should be recorded. Then, if someone builds a model that uses this feature as an input, they should also store information about how much the feature influences the model's output. This information can be used to monitor features for unexpected changes in value or distribution that may invalidate assumptions made during modeling.
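As a rough illustration of recording a feature's expected range and distribution and then checking new data against it, consider the sketch below. The `FeatureProfile` class, the `build_profile` and `check_batch` helpers, and the three-standard-deviations threshold are all assumptions chosen for the example, not a recommended monitoring setup.

```python
from dataclasses import dataclass
from statistics import mean, pstdev
from typing import List, Sequence


@dataclass
class FeatureProfile:
    """Expected range and basic distribution stats recorded at training time."""
    name: str
    min_value: float
    max_value: float
    mean: float
    std: float


def build_profile(name: str, values: Sequence[float]) -> FeatureProfile:
    return FeatureProfile(name, min(values), max(values), mean(values), pstdev(values))


def check_batch(
    profile: FeatureProfile,
    values: Sequence[float],
    max_mean_shift_in_std: float = 3.0,  # arbitrary threshold for the sketch
) -> List[str]:
    """Return human-readable alerts for range violations and mean shift."""
    alerts: List[str] = []
    outside = [v for v in values if not profile.min_value <= v <= profile.max_value]
    if outside:
        alerts.append(f"{profile.name}: {len(outside)} value(s) outside expected range")
    shift = abs(mean(values) - profile.mean)
    if profile.std > 0 and shift > max_mean_shift_in_std * profile.std:
        alerts.append(f"{profile.name}: mean shifted by {shift:.1f}")
    return alerts


profile = build_profile("age", [20, 30, 40, 50, 60])
print(check_batch(profile, [25, 35, 45]))     # similar to training data
print(check_batch(profile, [200, 210, 220]))  # clearly drifted
```

A production setup would typically compare full distributions rather than only the mean, but the idea is the same: the profile is stored next to the feature and every new batch is checked against it.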

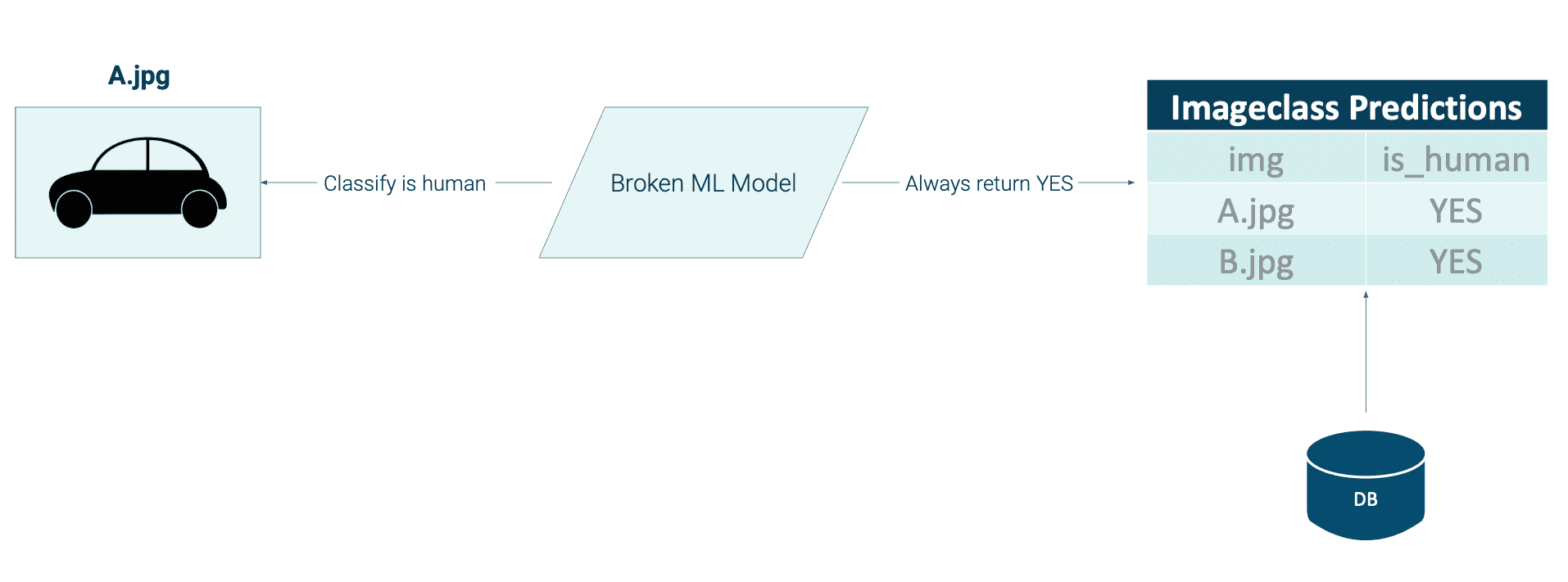

If the value of a feature changes significantly over time, the model's performance can suffer. As an extreme case, if the feature is itself generated by another ML model and that model becomes corrupted, the model consuming the feature will no longer work.

The first step for any company that wants to implement MLOps into its business process successfully should be developing a monitoring system. Only then should they start developing algorithms and integrating them into their systems. Understanding the importance of data monitoring will allow you to save a lot of resources and successfully implement AI-based solutions.

As you can see, there are many areas where Machine Learning problems can reduce a company's efficiency and slow its expansion in the market in which it competes. Big Data provides the fuel to gain an advantage, as long as we don't lose efficiency along the way. The role of MLOps is to find the areas causing ineffectiveness and apply solutions and practices that eliminate the problem, so that the company can reach its full potential on the market by making accurate, data-driven decisions and defining trends at the same time.

Interested in ML and MLOps solutions? How to improve ML processes and scale project deliverability? Watch our MLOps demo and sign up for a free consultation.
