Real-time data streaming platforms are tough to create and maintain. The difficulty comes from the huge amount of data that has to be processed as quickly as possible while the system stays online all the time. We face such challenges at GetInData and we know how to overcome them. Which things are crucial, and what can provide almost perfect stability?

We should start from the basics, mundane as they may be. We all know that monitoring has to be deployed in the cluster, but having a monitoring system and looking at server metrics are only the first steps towards a better Big Data world. Which services are used, how many logs they create, the business value of each component, which metrics matter: we need to start from scratch and decide which information will be useful. We then use that information to add triggered actions, such as deleting old logs when the available disk space runs low. Additionally, alerts are a must.
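A triggered action of this kind can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions: the `*.log` file pattern, the 10% free-space threshold, the 7-day retention and all function names are hypothetical, and in practice such a job would more likely be fired by an alert or a scheduler than run by hand.

```python
import shutil
import time
from pathlib import Path


def free_ratio(path):
    """Return the fraction of disk space still free on the volume holding `path`."""
    usage = shutil.disk_usage(path)
    return usage.free / usage.total


def old_logs(log_dir, max_age_days, now=None):
    """List *.log files in `log_dir` last modified more than `max_age_days` ago, oldest first."""
    now = time.time() if now is None else now
    cutoff = now - max_age_days * 86400
    candidates = [p for p in Path(log_dir).glob("*.log") if p.stat().st_mtime < cutoff]
    return sorted(candidates, key=lambda p: p.stat().st_mtime)


def cleanup_if_low(log_dir, min_free_ratio=0.10, max_age_days=7, free_ratio_fn=free_ratio):
    """Delete old logs only when free space drops below the threshold.

    Returns the list of deleted paths so the action can be logged or alerted on.
    The thresholds are assumptions for this sketch, not recommended values.
    """
    if free_ratio_fn(log_dir) >= min_free_ratio:
        return []
    deleted = []
    for path in old_logs(log_dir, max_age_days):
        path.unlink()
        deleted.append(path)
    return deleted
```

Passing the free-space check in as `free_ratio_fn` keeps the deletion logic testable without actually filling a disk.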

Prometheus is a great tool for storing all metrics, and we think it is the perfect choice for many projects. Many services already export metrics to it, and many more exporters can be easily created by anyone who can program in any language. We have tested it in multiple environments and it has never failed us. Another challenge is how exported metrics are stored. By default, the Prometheus time series database does not provide durable long-term storage and is only viable as short-term storage. If we need something more durable, we may consider other available solutions such as Thanos, CrateDB, InfluxDB, M3DB or TimescaleDB.
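To give a feeling for how little code a custom exporter needs, here is a minimal sketch that uses only the Python standard library and serves metrics in the Prometheus text exposition format. The metric name `demo_queue_depth`, its labels and values, and the port are made up for illustration; a real exporter would usually rely on an official client library and would escape label values properly.

```python
from http.server import BaseHTTPRequestHandler, HTTPServer


def render_metric(name, help_text, metric_type, samples):
    """Render one metric family in the Prometheus text exposition format.

    `samples` is a list of (labels_dict, value) pairs.
    Label values are not escaped here, which a real exporter must do.
    """
    lines = [f"# HELP {name} {help_text}", f"# TYPE {name} {metric_type}"]
    for labels, value in samples:
        if labels:
            label_str = ",".join(f'{k}="{v}"' for k, v in sorted(labels.items()))
            lines.append(f"{name}{{{label_str}}} {value}")
        else:
            lines.append(f"{name} {value}")
    return "\n".join(lines) + "\n"


def collect():
    """Hypothetical collector: a real exporter would query the monitored service here."""
    return render_metric(
        "demo_queue_depth",
        "Messages waiting in a hypothetical queue.",
        "gauge",
        [({"queue": "events"}, 42), ({"queue": "alerts"}, 3)],
    )


class MetricsHandler(BaseHTTPRequestHandler):
    """Serve the current metrics on /metrics and return 404 elsewhere."""

    def do_GET(self):
        if self.path != "/metrics":
            self.send_error(404)
            return
        body = collect().encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; version=0.0.4; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port=8000):
    """Start the exporter; the port is an arbitrary choice for this sketch."""
    HTTPServer(("", port), MetricsHandler).serve_forever()
```

Calling `serve()` and pointing a Prometheus scrape job at the `/metrics` path of that port would be enough to get these sample values into the time series database.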

Scraping metrics is no longer the only way to check our services. Nowadays we have more ways to verify that everything is OK, and we should take advantage of them. Log reading systems in particular may be useful. We should analyze their design and decide how many logs to store. Many people use the Elastic stack with Filebeat or Fluentd as the data source, but there is a more flexible solution designed for containerized environments. Here we would like to mention Loki with Promtail. We use it in a production environment and it provides all the required information, and we really like the feature of adding structure to unstructured logs. Moreover, everybody can check logs in Grafana. All scraped logs are labeled Prometheus-style, which is especially important when filtering events. Loki recently reached its v1.0 release, and we can say that it shows the same solid stability that we observed before.
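The idea of adding structure to unstructured logs and then filtering by labels can be illustrated with a short Python sketch. It is not Promtail's configuration or API, only a conceptual model: the log layout, the label names (`level`, `component`, `job`) and the sample lines are all assumptions made for this example.

```python
import re

# Hypothetical log layout: "<date> <time> <LEVEL> [<component>] <message>"
LINE_PATTERN = re.compile(
    r"^(?P<timestamp>\S+ \S+) (?P<level>[A-Z]+) \[(?P<component>[^\]]+)\] (?P<message>.*)$"
)

# Made-up sample input, including one line that does not follow the layout.
RAW_LINES = [
    "2019-12-02 10:15:01 INFO [kafka-consumer] Fetched 500 records",
    "2019-12-02 10:15:02 ERROR [flink-job] Checkpoint 42 failed",
    "not a structured line",
]


def parse_line(line, static_labels=None):
    """Turn one raw log line into a labeled entry, or return None if it does not match."""
    match = LINE_PATTERN.match(line)
    if match is None:
        return None
    fields = match.groupdict()
    labels = dict(static_labels or {})
    labels.update(level=fields["level"].lower(), component=fields["component"])
    return {"labels": labels, "timestamp": fields["timestamp"], "message": fields["message"]}


def parse_all(lines, static_labels=None):
    """Parse every line and silently skip the ones that do not match the layout."""
    entries = []
    for line in lines:
        entry = parse_line(line, static_labels)
        if entry is not None:
            entries.append(entry)
    return entries


def select(entries, **wanted):
    """Keep entries whose labels match every key=value pair in `wanted`."""
    return [e for e in entries if all(e["labels"].get(k) == v for k, v in wanted.items())]
```

For example, `select(parse_all(RAW_LINES, {"job": "demo"}), level="error")` keeps only the failed checkpoint entry, which is the same kind of label-based filtering that makes searching for events convenient.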

People are responsible for many failures and issues we encounter in the Big Data world. We believe it is the main reason why we should automate all tasks and use tools that can be described by the phrase ease-of-use.

Let’s discuss the available services. I’d recommend starting with Ansible, which is well-documented and supports writing your own libraries. It can be used not only for creating infrastructure but also for deploying Flink jobs or adding new partitions to Kafka. At GetInData we highly value designing everything as-a-Code. It provides reusability without issues, with automated testing and execution.

That is only the code. If we had an application with GUI it would be great, wouldn’t it?

Here, we use Rundeck. Not only can we add jobs triggered by events or by the built-in crontab, but Rundeck can also be used from a GitLab CI pipeline. We really enjoy creating pipelines that combine all the required tools, where every action can be done with one click. Jenkins is also a great choice for automating operations, and we still use it in some cases.

It is crucial to make all tools simple. If we automate the boring stuff, many potential issues will be prevented and users will be happier. That is the real DevOps world: not just adding some well-known services and still doing operations manually.

Source: Gds-Gov

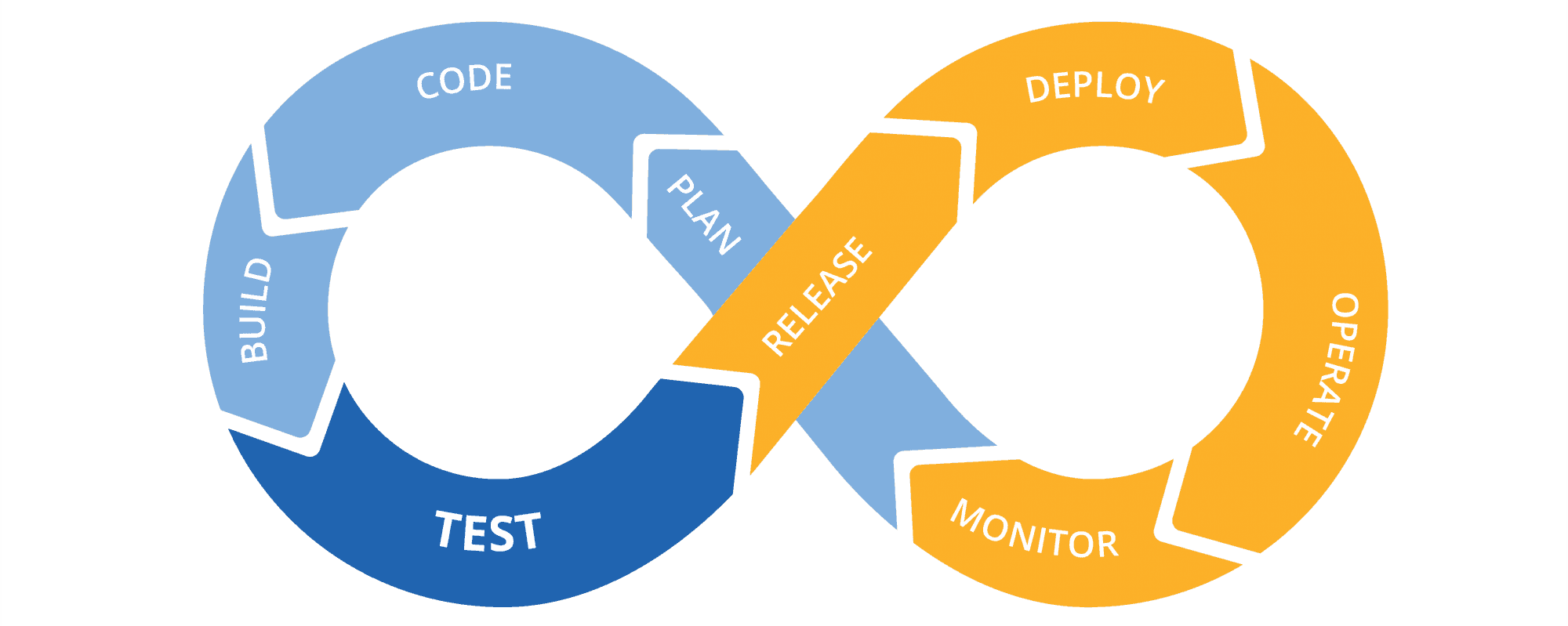

DevOps has become a real buzz-word. But wait, what is DevOps?

We like the definition made by the AWS team: DevOps is the combination of cultural philosophies, practices, and tools that increases an organization’s ability to deliver applications and services at high velocity: evolving and improving products at a faster pace than organizations using traditional software development and infrastructure management processes.

We would say it describes all aspects of DevOps. These practices are recommended not only for admins. Developers should also follow some DevOps rules, because it is crucial that the whole team follows this philosophy. The advantages are quite impressive if we implement it in the right way: improved quality, reliability, and reusability of all components, standardized processes for easy replication, and increased productivity of the IT team. It reduces costs and time. So, how do we achieve it?

Some parts of the DevOps mindset were described above. We should start by understanding the implemented data pipeline: what does the deployment process look like, and how can it be improved? Of course, writing documentation shouldn’t be forgotten. Then we can start making the Great DevOps Plan and implement the needed actions, like using automation tools or triggered actions. Users should be taught what it means and why they should start using Rundeck instead of the command line.

The IT world is evolving. Everyone knows it is one of the fastest changing environments, and it is as fascinating as it is challenging. It means that we need to look carefully into all updates - verify whether they are good or not and decide if we should install them in our cluster. Reading the documentation, forums and others’ opinions, and testing everything in the development environment, are a must.

We need to plan our work for the coming months. Planning really helps us understand what matters most to users, and here we should take advantage of code reusability and previously prepared tools like Ansible playbooks. This can save a lot of time and money, and prevent us from running into many bugs. It requires never-ending learning and improving, but that is the only way to maintain a stable data platform. We would say it is especially important for real-time data streaming platforms, where all the jobs have to run all the time. All operations should have as small an impact as possible on the data pipeline.

Frankly speaking, that is the target of DevOps.

It is hard to ever say that our work is finished. New updates appear all the time and new things come up, which creates new opportunities to improve our environments. Having a solid infrastructure is the key to applying all changes smoothly and without any impact on the most important data pipelines. Here we can see how important the DevOps mindset is and how it may improve each process. The next step will be the implementation of some machine learning algorithms for detecting issues based on logs. Recently IBM has prepared something similar for Prometheus. Surely, the project is in its early stages, but it may become useful one day.

We presented this topic at the 40th meetup of Warsaw Data Tech Talks; you can find the presentation here.
