
One of the main challenges of today's Machine Learning initiatives is the need for a centralized store of high-quality data that Data Scientists can reuse across different models. Tools that fill this gap are called Feature Stores, and you can read about them in Adi’s fantastic post: What are Feature Stores and Why Are They Critical for Scaling Data Science?

Many companies build a Feature Store tailored to their own needs, but one of the most popular open-source implementations is Feast. Feast recently joined the LF AI & Data Foundation as a reference solution for storing features by:

For one of the projects I’m working on at GetInData, Feast was selected as the backend for the feature store. The core installation lets users register, browse and update feature definitions using the Python SDK, but this is not the most user-friendly interface: it lacks a full-text search API and the ability to collaborate on features (for example, adding and editing descriptions). On top of that, the only gateway to the data is a Python REPL or Jupyter, so accessing it requires some coding skills.

“With 1.2k stars on GitHub, there must be a UI somewhere” - this was my first thought. Unfortunately, the project name is not very unique, so searching for “feast ui” on Google doesn’t return very meaningful results. It does, however, lead to some interesting insights:

I tried to revive the old UI, but without success. At that point, I was really close to starting to develop the UI myself, but decided to try another way first.

Amundsen is a data discovery tool that collects metadata from your databases, pushes it to an internal Neo4j graph database and Elasticsearch, and exposes it through a nice, interactive frontend. The tool is widely adopted at Lyft, ING and in many data-oriented projects supported by GetInData. Using the web portal, users can search for the data they are interested in, assign tags, mark datasets as “starred” and even edit the descriptions of tables and columns. Interestingly, it is also part of LF AI & Data.

Usually, you use Amundsen to load the structure of your database (using Databuilder) into a database-oriented format, structured with:

At first glance, this structure doesn’t really fit the Feast schema, which looks like this:

Still, users can think of this data in tabular form using the following mapping:

With this mapping in mind I was finally able to try Amundsen as a Feast user interface!

Implementing an Amundsen extension that scrapes Feast for metadata turned out to be a straightforward task. Databuilder defines the Extractor as a simple class that generates metadata objects one after another, so a few calls to Feast’s Python SDK solve the problem completely. My implementation was recently merged into Databuilder master, so you can try it yourself! The job that does all the work can be defined as in the sample script.
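The Extractor idea described above can be sketched in plain Python. This is only an illustration of the pattern (a class that hands out one metadata record per call and returns `None` when it runs out), not the code merged into Databuilder. It deliberately imports neither Databuilder nor Feast: the class names, the dictionary layout of `SAMPLE_FEATURE_TABLES`, and the choice to treat a project as a schema and a feature table as a table are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterator, List, Optional


@dataclass
class ColumnRecord:
    """One catalog column (here: a feature store entity or feature)."""
    name: str
    col_type: str
    description: str = ""


@dataclass
class TableRecord:
    """One table-like record, the unit a data catalog would index."""
    schema: str  # assumption: the project plays the role of a schema
    name: str    # assumption: the feature table plays the role of a table
    columns: List[ColumnRecord] = field(default_factory=list)


# Hypothetical stand-in for what a feature store SDK listing might return.
SAMPLE_FEATURE_TABLES: List[Dict] = [
    {
        "project": "demo_project",
        "name": "driver_trips",
        "entities": [{"name": "driver_id", "type": "INT64"}],
        "features": [{"name": "trips_today", "type": "INT32"}],
    }
]


class FeatureStoreExtractorSketch:
    """Returns one TableRecord per extract() call, then None when exhausted."""

    def __init__(self, feature_tables: List[Dict]) -> None:
        # A real job would fetch this listing through the feature store SDK.
        self._feature_tables = feature_tables
        self._records: Optional[Iterator[TableRecord]] = None

    def _generate(self) -> Iterator[TableRecord]:
        for table in self._feature_tables:
            # Entities come first, then features; both become columns.
            columns = [
                ColumnRecord(e["name"], e["type"], "entity")
                for e in table["entities"]
            ]
            columns += [
                ColumnRecord(f["name"], f["type"], "feature")
                for f in table["features"]
            ]
            yield TableRecord(table["project"], table["name"], columns)

    def extract(self) -> Optional[TableRecord]:
        # Create the generator lazily on the first call.
        if self._records is None:
            self._records = self._generate()
        return next(self._records, None)
```

A caller would keep invoking `extract()` until it returns `None` and pass each record on to whatever loads it into the catalog. Keeping the record generation in a lazy generator means the extractor never has to hold more than one record at a time.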

Apart from features and entities, the Extractor pushes data exposed by Feast:

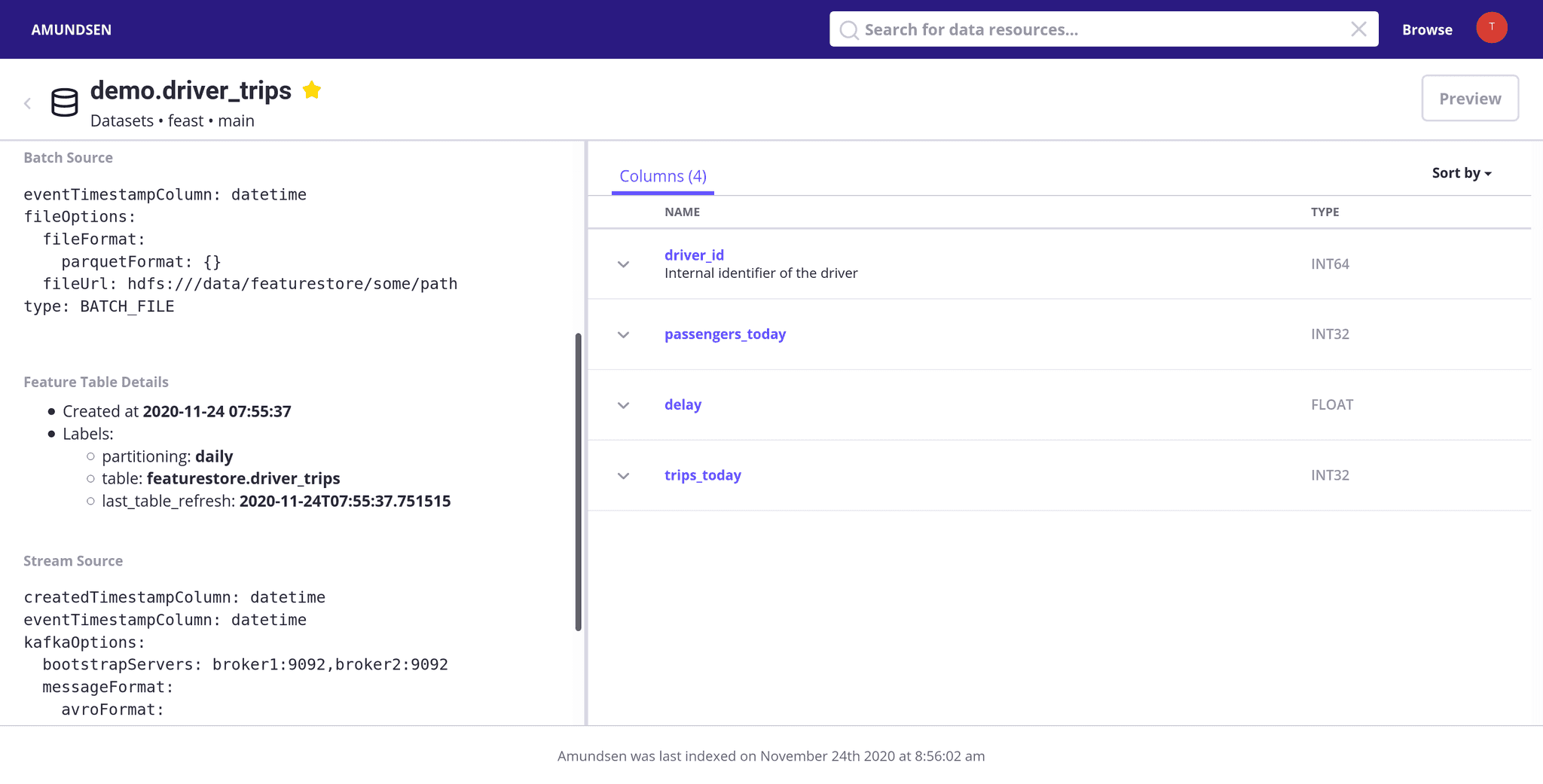

On the frontend, it looks as follows:

On the left, you can see all the information extracted from Feast; on the right, the list of entities and features in tabular form.

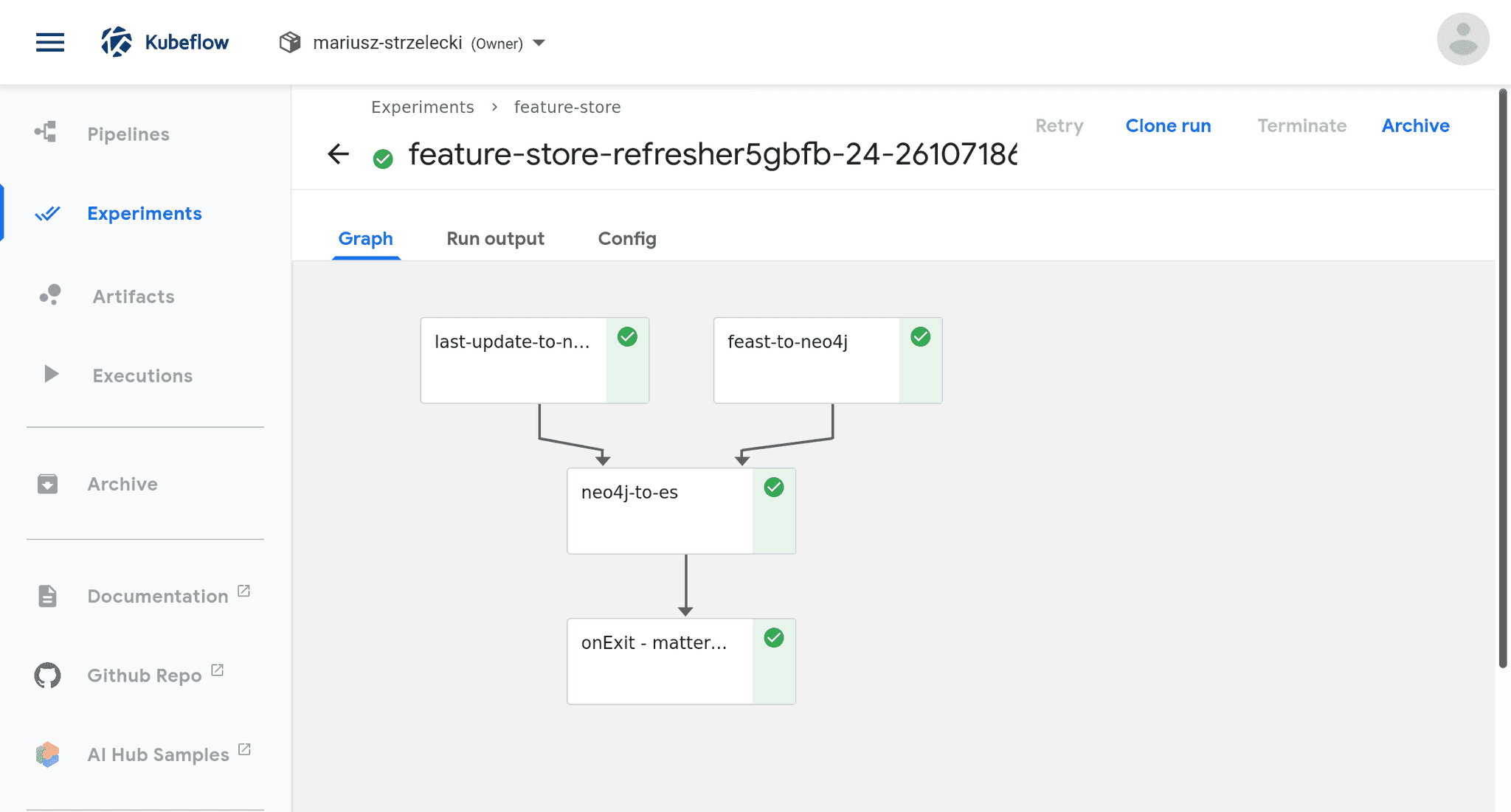

The process that extracts the data from Feast to Amundsen runs every hour in the form of a scheduled Kubeflow Pipelines workflow:

Amundsen’s frontend meets all the requirements that I had for a Feast UI:

If you plan to use Feast but are a bit afraid of the lack of a user interface, definitely try Amundsen with FeastExtractor. Both projects are supported by LF AI & Data, so they are not going anywhere soon. And, to be frank, seeing how these two can support each other just blew my mind ;-)

If you're looking for a company to help you scale and operationalize your ML efforts with tools like Feast and Kubeflow, just write to us.
