
Nowadays digital marketing is a competitive business, and it's easy to tell that we are way past the point when a catchy slogan or a shiny banner would guarantee your campaign's success. What matters now? A quick peek at the leaders in the area will tell you that it's not only what you are selling but, above all, how smart you are at targeting customer groups and their needs. To achieve that you need insights, and that's where data analysis kicks in.

It's obvious that Digital Marketing systems are fuelled by data, tons of it, and they devour it like monster trucks. A data toolset for mobile advertising certainly operates under heavy load, but does that mean the solution needs an XXL label? We asked ourselves the same question while helping a marketing company build a custom data solution for that purpose. We aimed for a lightweight, agile and cost-effective solution.

Broadly speaking, digital advertising comes down to delivering ads to media slots on a publisher's property via ad exchanges and so-called Demand-Side Platforms (DSPs for short). These platforms let advertisers target an audience under predefined conditions, which helps allocate the advertising budget optimally. At first launch, targeting is often based on external insights or even educated guesses. Monitoring the audience's reaction then unveils useful insights. It pinpoints the segments that perform well and gives more in-depth knowledge of the audience. That knowledge not only improves the current campaign configuration but also supports future decision-making.

GetInData's role was to support and implement systems for collecting audience reaction data. The data went into a curated, easily managed datastore for cold analytics and BI, and it also fed near real-time audience segmentation and classification.

We distinguish two groups of audience reaction events:

Impressions - each time the ad is displayed on the user’s device.

Conversions - each time the ad is clicked by a user.

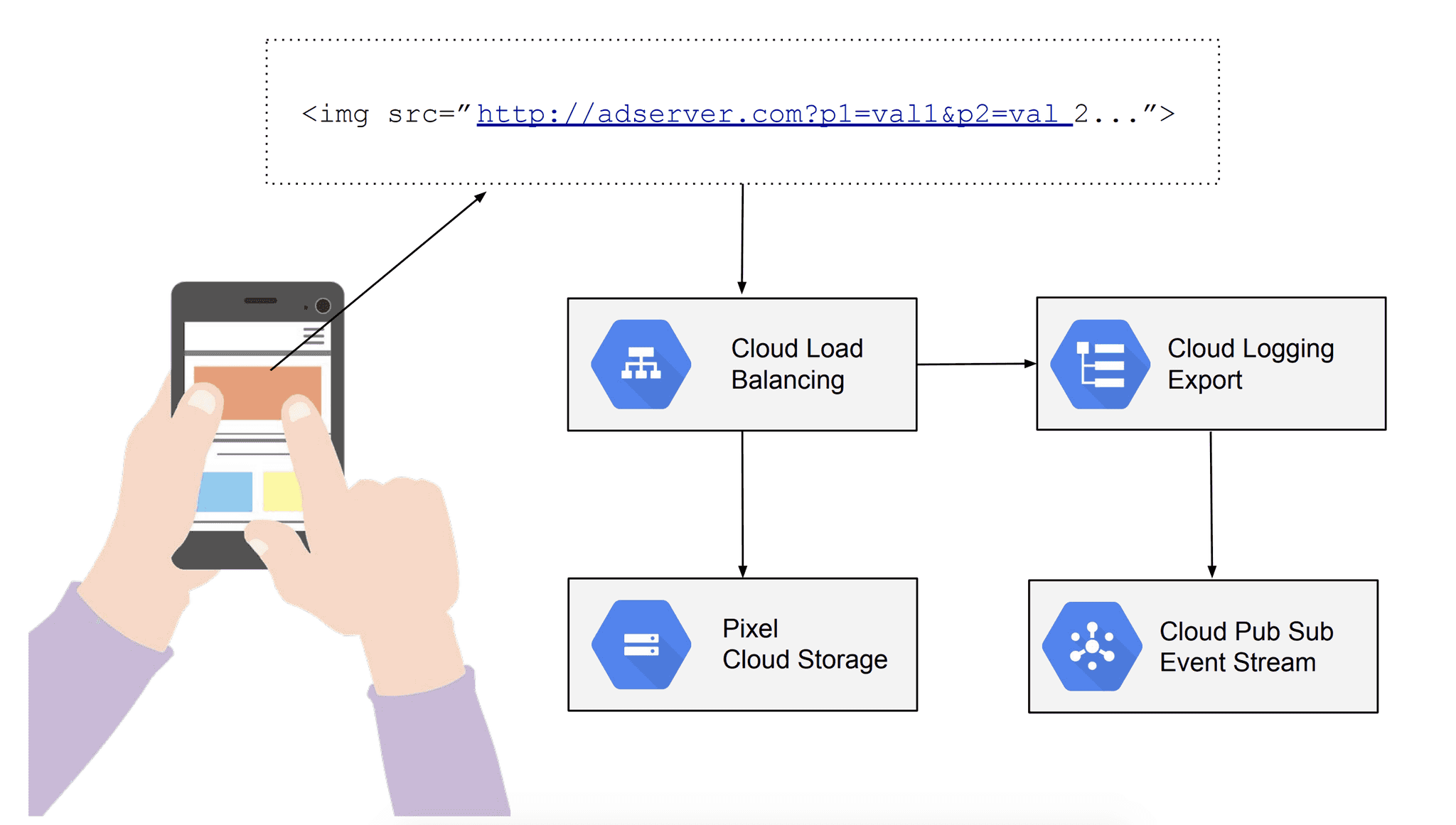

To gather impression events, we used a simple strategy called pixel tracking, which relies on adding an invisible pixel to the displayed media element. Such a pixel is usually generated programmatically and has a specific source URL. Additional data provided by the DSP is passed in that URL as query parameters. In the described system, the pixel itself is stored on Google Cloud Storage and exposed through a Cloud Load Balancer. When the media is rendered, an HTTP GET request is issued to the load balancer to retrieve the pixel. Each request is tracked by Google's built-in logging service. Its export functionality can be configured to forward each call as an event to Pub/Sub, a message queue service.

Impressions collection


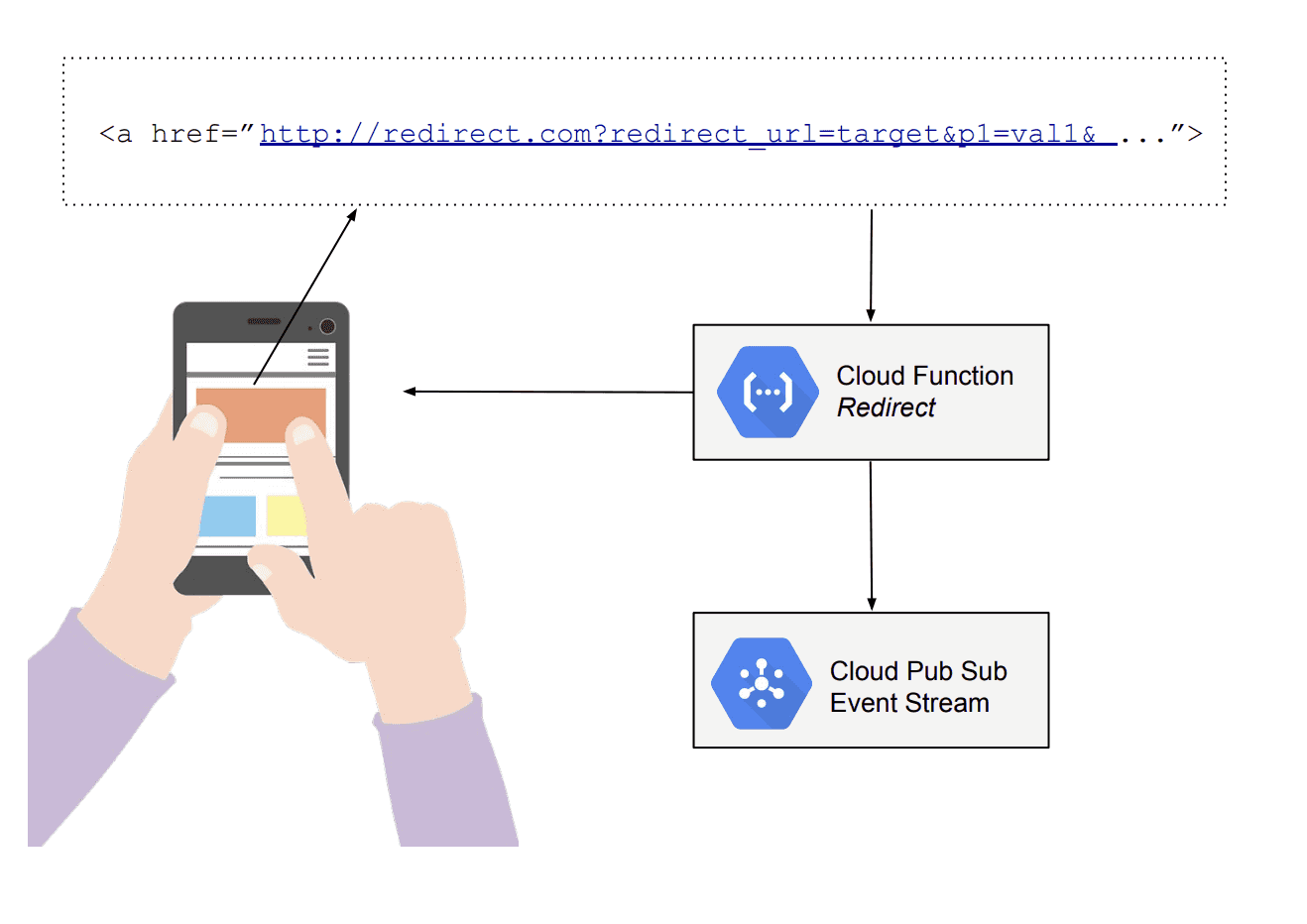
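To make the pixel-tracking idea concrete, here is a minimal Python sketch of how such a pixel URL and its invisible `<img>` tag might be generated. The base URL and the parameter names (`cid`, `base`, `sz`, `ga`) are borrowed from the sample log entry shown later in this post. The helpers `build_pixel_url` and `pixel_img_tag` are hypothetical, and in practice the DSP fills in the values itself.

```python
import html
from urllib.parse import urlencode

# Assumed address of the pixel object exposed through the load balancer (hypothetical).
PIXEL_BASE_URL = "http://test.com/pixel.png"


def build_pixel_url(params: dict[str, str]) -> str:
    """Encode campaign and user data as query parameters of the pixel URL."""
    return f"{PIXEL_BASE_URL}?{urlencode(params)}"


def pixel_img_tag(url: str) -> str:
    """A 1x1 image; rendering it issues the HTTP GET that ends up in the request logs."""
    # html.escape turns '&' into '&amp;', as required inside an HTML attribute
    return f'<img src="{html.escape(url)}" width="1" height="1" alt="">'


url = build_pixel_url({"cid": "88872", "base": "Campaign_Name", "sz": "728x90", "ga": "testUserId"})
print(url)  # http://test.com/pixel.png?cid=88872&base=Campaign_Name&sz=728x90&ga=testUserId
print(pixel_img_tag(url))
```

Because every piece of context travels in the query string, the logging side only has to record the request URL; nothing needs to run on the pixel's host.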

A conversion, in turn, happens every time a user clicks on the ad to land on the page behind it. To collect such data, we enriched the URL with user data in the same way as for impressions. We also changed the URL so that, instead of going directly to the destination, the request (the user) hops through one simple service called the redirect function. This short piece of logic has exactly two goals. First, it records the click and sends it as an event to a message queue service (Pub/Sub again). Second, it redirects, so the user lands on the page the ad was supposed to lead to.

Clicks collection

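The two goals of the redirect function can be sketched as a tiny WSGI application in plain Python. This is an illustration under assumptions, not the deployed function. `publish` is a hypothetical stand-in for a Pub/Sub publisher, and `dest` is a hypothetical name for the parameter carrying the landing page. The host allowlist is our own addition, which keeps the function from becoming an open redirect.

```python
import json
from typing import Callable, Iterable
from urllib.parse import parse_qs, urlsplit

# Hypothetical stand-in for a Pub/Sub publisher: any callable that accepts bytes.
Publisher = Callable[[bytes], None]


def make_redirect_app(publish: Publisher, allowed_hosts: set[str]):
    """Build a WSGI app that records a click and then redirects the user."""

    def app(environ, start_response) -> Iterable[bytes]:
        # Flatten the query parameters, keeping the first value of each
        params = {k: v[0] for k, v in parse_qs(environ.get("QUERY_STRING", "")).items()}
        destination = params.pop("dest", "")  # hypothetical landing-page parameter

        # Only redirect to known landing pages (missing or foreign hosts are rejected)
        if urlsplit(destination).hostname not in allowed_hosts:
            start_response("400 Bad Request", [("Content-Type", "text/plain")])
            return [b"unknown destination"]

        # Goal 1: record the click as a conversion event on the message queue
        publish(json.dumps({"type": "conversion", "params": params}).encode("utf-8"))

        # Goal 2: send the user on to the page the ad was supposed to lead to
        start_response("302 Found", [("Location", destination)])
        return [b""]

    return app


# Usage: collect "published" events in a list instead of a real message queue
events: list[bytes] = []
app = make_redirect_app(events.append, allowed_hosts={"shop.example.com"})

captured = {}


def start_response(status, headers):
    captured["status"], captured["headers"] = status, dict(headers)


query = "cid=88872&ga=testUserId&dest=https%3A%2F%2Fshop.example.com%2Foffer"
app({"REQUEST_METHOD": "GET", "QUERY_STRING": query}, start_response)
print(captured["status"], captured["headers"]["Location"])
```

Publishing before redirecting means a click is recorded even if the user closes the tab right after being redirected.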

There are multiple storage offerings in Google Cloud's portfolio, though only one clear winner matched our use case perfectly: BigQuery. From the end-user perspective, it has the look and feel of a good old SQL database. Yet it lets you tackle big-data-scale analysis (terabytes) with impressive performance and no additional effort.

BigQuery gives you a handful of tools. On top of the SQL:2011 standard, it supports complex types such as STRUCT and ARRAY, geospatial queries, UDFs and even the recently introduced machine learning capabilities. It can also be easily integrated with most popular Business Intelligence (BI) tools for data visualisation. Alternatively, it can be used programmatically via the provided client libraries or a JDBC driver.

To land the incoming streams of events (impressions and conversions), we used Dataflow, Google Cloud's managed runtime for Apache Beam, a framework for parallel data processing. The simplest solution would be to stream events as-is, without any modifications, and Google Cloud provides a template job for exactly that. Although simplest, it was not an option for our project. In the long run, it would just shift the complexity and cost of data preparation onto data analytics. Even worse, it could lead to misinterpreted data and inconsistent insights.

```json
{
  "httpRequest": {
    "referer": "http://referer.test.com/",
    "remoteIp": "999.999.999.990",
    "requestMethod": "GET",
    "requestSize": "935",
    "requestUrl": "http://test.com/pixel.png?cid=88872&base=Campaign_Name&la=92.23401&lo=23.01517&b=test_app_domain3d&sz=728x90&na=90&w=728&q=Image&mu=banner-url-728x90&a=1190277&ga=testUserId",
    "responseSize": "1293",
    "status": 200,
    "userAgent": "#####"
  },
  "insertId": "qx393sg1ql7la9",
  "jsonPayload": {
    "@type": "type.googleapis.com/google.cloud.loadbalancing.type.LoadBalancerLogEntry",
    "statusDetails": "response_sent_by_backend"
  },
  "severity": "INFO",
  "timestamp": "2019-03-12T07:59:59.801844665Z"
}
```

As the raw event example above shows, most of the interesting data about the user was packed into the request URL. Since we supported multiple DSPs, we had no guarantee that all events would use the same parameter naming and value formatting.
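The first preprocessing step is pulling those parameters back out of `httpRequest.requestUrl`. Here is a minimal Python sketch using only the standard library. It assumes the log entry arrives as a JSON string shaped like the sample above, and only the fields used here are reproduced. The function name `extract_url_params` is hypothetical.

```python
import json
from urllib.parse import parse_qs, urlsplit


def extract_url_params(log_entry: str) -> dict[str, str]:
    """Return the query parameters of httpRequest.requestUrl as a flat dict."""
    entry = json.loads(log_entry)
    query = urlsplit(entry["httpRequest"]["requestUrl"]).query
    # parse_qs returns a list per key; keep the first value of each parameter
    return {key: values[0] for key, values in parse_qs(query).items()}


raw = json.dumps({
    "httpRequest": {
        "requestUrl": "http://test.com/pixel.png?cid=88872&base=Campaign_Name&la=92.23401"
                      "&lo=23.01517&b=test_app_domain3d&sz=728x90&na=90&w=728&q=Image"
                      "&mu=banner-url-728x90&a=1190277&ga=testUserId"
    },
    "timestamp": "2019-03-12T07:59:59.801844665Z",
})
params = extract_url_params(raw)
print(params["cid"], params["sz"], params["ga"])  # 88872 728x90 testUserId
```

At this point the values are still raw strings with DSP-specific names, which is exactly why the further transformations are needed.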

Instead of lazily landing whatever arrived at the input, we decided to create a custom ingestion pipeline, with all the necessary preprocessing done before the data reaches its final location. Here are some of the transformations we included:

Extracting and unifying data that was previously put into request URL parameters. This not only reduces the time and cost of deriving insights, by making analytics queries much simpler. It also encapsulates the business logic for interpreting parameters from multiple DSPs in a single place. That keeps the logic uniform across the company and consistent for all the insights produced within our platform.

Validating and unifying values and formats, so that the data is clean, properly typed and consistent in meaning.

Organising the data into an intuitive, descriptively named structure. This improves query readability and lets analysts work without constantly peeking into documentation to understand the data points.

Improving the completeness of the data with additional sources and logic, such as resolving IDs to their text meanings or reverse geocoding.
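The unification and validation steps above can be sketched as a per-DSP mapping followed by typed parsing. Everything here is an illustrative assumption rather than the project's actual rules. That includes the DSP names, the mapping table, the `Impression` record and the reading of `la`/`lo` as latitude/longitude. Enrichment such as reverse geocoding is left out.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical mapping of each DSP's raw parameter names to unified field names.
PARAM_MAPPING: dict[str, dict[str, str]] = {
    "dsp_a": {"cid": "campaign_id", "la": "latitude", "lo": "longitude",
              "sz": "ad_size", "ga": "user_id"},
    "dsp_b": {"campaign": "campaign_id", "lat": "latitude", "lon": "longitude",
              "size": "ad_size", "uid": "user_id"},
}


@dataclass
class Impression:
    """Unified, typed record with self-describing field names."""
    campaign_id: int
    user_id: str
    ad_width: Optional[int]
    ad_height: Optional[int]
    latitude: Optional[float]
    longitude: Optional[float]


def _coordinate(value: Optional[str], limit: float) -> Optional[float]:
    """Parse a coordinate; return None if it is missing, malformed or out of range."""
    if value is None:
        return None
    try:
        number = float(value)
    except ValueError:
        return None
    # NaN and infinities fail this range check as well
    return number if -limit <= number <= limit else None


def normalise(dsp: str, raw: dict[str, str]) -> Impression:
    mapping = PARAM_MAPPING[dsp]
    fields = {mapping[key]: value for key, value in raw.items() if key in mapping}

    # Ad size comes as "WIDTHxHEIGHT" (case-insensitive); anything else is dropped
    width, height = None, None
    w, sep, h = fields.get("ad_size", "").lower().partition("x")
    if sep and w.isdigit() and h.isdigit():
        width, height = int(w), int(h)

    return Impression(
        campaign_id=int(fields["campaign_id"]),  # required: raises if missing or non-numeric
        user_id=fields["user_id"],
        ad_width=width,
        ad_height=height,
        latitude=_coordinate(fields.get("latitude"), 90.0),
        longitude=_coordinate(fields.get("longitude"), 180.0),
    )


a = normalise("dsp_a", {"cid": "88872", "la": "92.23401", "lo": "23.01517",
                        "sz": "728x90", "ga": "testUserId"})
b = normalise("dsp_b", {"campaign": "88872", "lat": "52.2297", "lon": "21.0122",
                        "size": "300X250", "uid": "u-42"})
print(a)
print(b)
```

Two DSPs with different naming end up in the same record shape. Note the sample's `la=92.23401`: it is not a valid latitude under our assumed interpretation, so validation drops it instead of letting it reach analytics.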

Eventually, the incoming data landed in a BigQuery table. It was documented, validated, complete and normalised, with a well-described schema, ready to be used for analytics, ML or reporting via a BI tool.

It is also worth mentioning that Apache Beam handles streaming and batch processing in a unified way. This enabled us to reuse the same business logic to load historical events as well, ensuring consistency and the same level of data quality across the whole dataset.

The Dataflow pipeline used for event ingestion was also a good place to perform classification. Apart from being sent to BigQuery, each event coming through the system also went through a segmentation process. A set of programmatic rules determined which segments the user belongs to. The resulting set of segments was sent to a Cloud SQL database (for random access) to create a new user record or update an existing one.
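The rule-based segmentation can be pictured as a table of named predicates evaluated against each event. The sketch below is plain Python outside of Beam. The `Event` fields, the segment names and the rough Bay Area bounding box are all hypothetical examples, not the rules used in the project.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Event:
    user_id: str
    kind: str      # "impression" or "conversion"
    category: str  # ad category, e.g. "automotive" (hypothetical field)
    latitude: Optional[float] = None
    longitude: Optional[float] = None


# Rough, illustrative bounding box around the San Francisco Bay Area:
# (min_lat, max_lat, min_lon, max_lon)
BAY_AREA = (36.9, 38.9, -123.6, -121.2)


def in_bay_area(event: Event) -> bool:
    if event.latitude is None or event.longitude is None:
        return False
    min_lat, max_lat, min_lon, max_lon = BAY_AREA
    return min_lat <= event.latitude <= max_lat and min_lon <= event.longitude <= max_lon


# Each rule maps a segment name to a predicate over a single event.
RULES: dict[str, Callable[[Event], bool]] = {
    "sf_bay_area": in_bay_area,
    "automotive_converter": lambda e: e.kind == "conversion" and e.category == "automotive",
}


def segments_for(event: Event) -> set[str]:
    """Names of all segments whose rule matches this event."""
    return {name for name, rule in RULES.items() if rule(event)}


click = Event("testUserId", "conversion", "automotive", 37.77, -122.42)
print(sorted(segments_for(click)))  # ['automotive_converter', 'sf_bay_area']
```

Each rule only looks at a single event. Accumulating memberships over time is left to the user record in the database.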

This solution made it easy to obtain groups of users based on the segments they fall into. For example: “give me all car drivers in the San Francisco Bay Area who are interested in automotive ads (high conversion)”, as they seem like a good target for a new car dealership announcement campaign. You could get that group from the segments database with a single query.
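One way to support such single-query lookups is to keep one row per (user, segment) pair. Here is a minimal sketch that uses SQLite from Python's standard library as a stand-in for the Cloud SQL instance. The table layout, column names and segment names are hypothetical. The upsert syntax needs SQLite 3.24 or newer, and a MySQL or PostgreSQL database would use its own upsert dialect.

```python
import sqlite3

# In-memory SQLite database standing in for the Cloud SQL instance (assumption).
conn = sqlite3.connect(":memory:")
conn.execute("""
    CREATE TABLE user_segments (
        user_id   TEXT NOT NULL,
        segment   TEXT NOT NULL,
        last_seen TEXT NOT NULL,
        PRIMARY KEY (user_id, segment)
    )
""")


def upsert_segments(conn: sqlite3.Connection, user_id: str,
                    segments: set[str], seen_at: str) -> None:
    """Create or refresh one row per (user, segment) pair."""
    conn.executemany(
        """INSERT INTO user_segments (user_id, segment, last_seen) VALUES (?, ?, ?)
           ON CONFLICT (user_id, segment) DO UPDATE SET last_seen = excluded.last_seen""",
        [(user_id, segment, seen_at) for segment in sorted(segments)],
    )
    conn.commit()


def users_in_all(conn: sqlite3.Connection, segments: list[str]) -> list[str]:
    """Single query: users that belong to every one of the given segments."""
    placeholders = ",".join("?" for _ in segments)
    rows = conn.execute(
        f"""SELECT user_id FROM user_segments
            WHERE segment IN ({placeholders})
            GROUP BY user_id
            HAVING COUNT(DISTINCT segment) = ?
            ORDER BY user_id""",
        [*segments, len(segments)],
    )
    return [row[0] for row in rows]


upsert_segments(conn, "u1", {"sf_bay_area", "car_driver", "automotive_converter"}, "2019-03-12T07:59:59Z")
upsert_segments(conn, "u2", {"sf_bay_area"}, "2019-03-12T08:00:00Z")
upsert_segments(conn, "u3", {"car_driver", "automotive_converter", "sf_bay_area"}, "2019-03-12T08:01:00Z")
print(users_in_all(conn, ["car_driver", "sf_bay_area", "automotive_converter"]))  # ['u1', 'u3']
```

Only placeholder markers are interpolated into the SQL string; the segment names themselves are passed as bound parameters.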

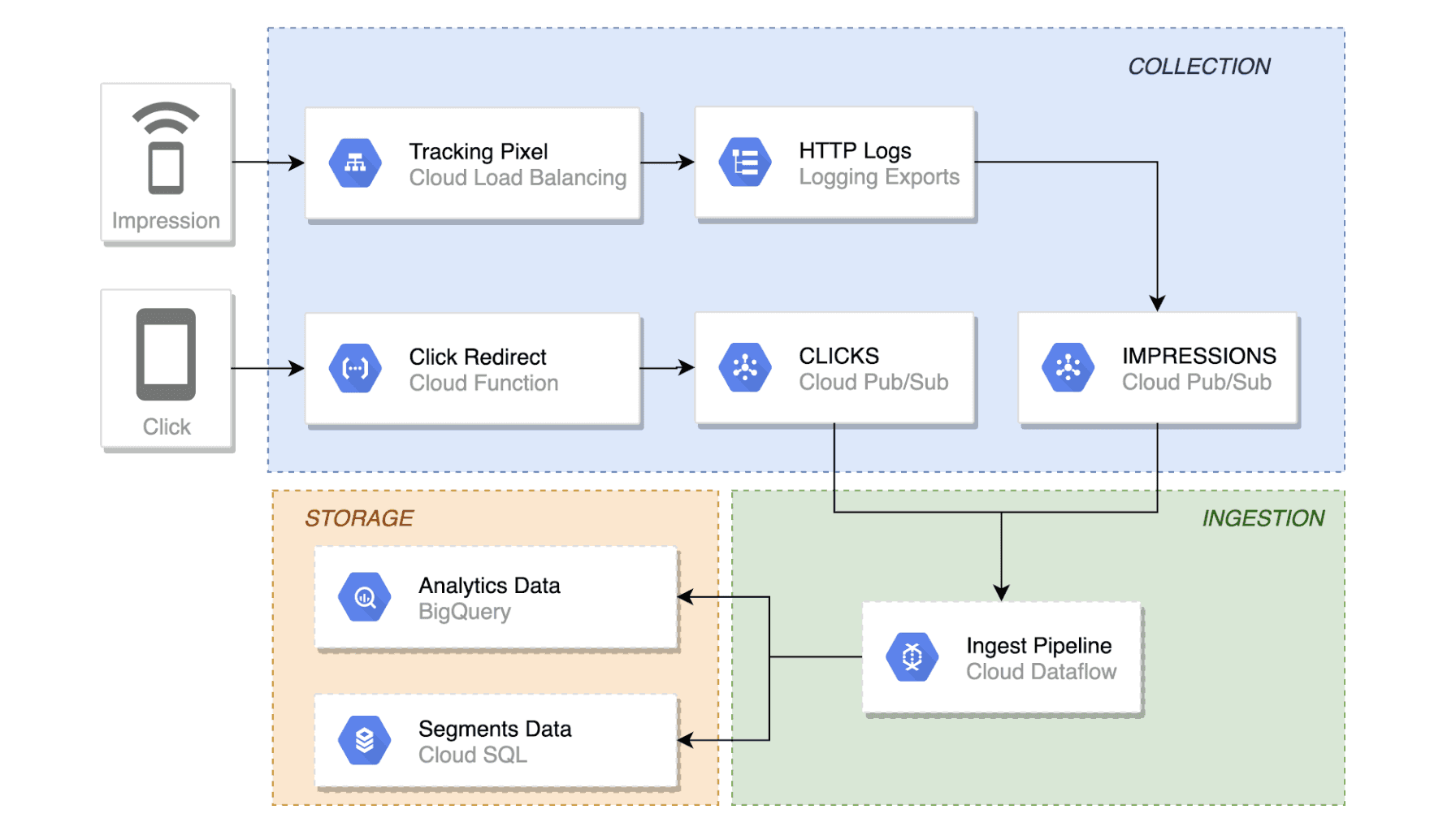

It may seem like a lot of elements when you read about it, but the overall solution is pretty simple and straightforward. Just look at the diagram below:

Solution overview


One of the best things about relying on Google's managed services was the near-absence of operational overhead. All of them provide top-notch security and SLA-backed resilience, with built-in monitoring and alerting options.

All in all, we finished the project ahead of schedule and used the remaining resources to optimise and fine-tune the expected outcome.

What's also impressive is that the minimal setup required only the essential always-on (24/7) resources: the baseline cost is a single database instance plus a single Dataflow worker. Meanwhile, the whole solution scales up within minutes to handle billions of events a minute without any human intervention.
