CSV and XLSX files are among the most common file formats used in business to store and analyze data. Unfortunately, this approach does not scale: it becomes increasingly difficult to give all team members access to one common file in which they can collaborate and share the results of their work with other teams.

Admittedly, there are solutions for working on spreadsheet files together in real time, but it’s still difficult to share data with different teams, process it or decide on the target data format, especially when relying on automatic data format detection.

Where should we start?

The first step is to map the landscape of all processed spreadsheets. We start with the most important thing: understanding the data and verifying which information matters to the target users. This often lets us reduce the amount of processed data by dropping whatever is unused.

The second step focuses on understanding how new spreadsheets are created. Can we automate that step, or can it only be done manually by users? How frequently is data uploaded? How can we verify whether the data schema has changed?
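The last question, detecting schema changes, can be sketched in a few lines of Python. This is only an illustration using the standard library: the column names in `EXPECTED_COLUMNS` and the helper names `read_header` and `diff_schema` are hypothetical, and a real spreadsheet landscape would need one expected schema per file type.

```python
import csv
import io

# Hypothetical expected schema for one spreadsheet type; the column names
# are illustrative assumptions, not taken from a real project.
EXPECTED_COLUMNS = ["order_id", "order_date", "amount"]


def read_header(csv_text):
    """Return the header row of a CSV document as a list of column names."""
    reader = csv.reader(io.StringIO(csv_text))
    return [name.strip() for name in next(reader, [])]


def diff_schema(expected, actual):
    """Compare expected and actual column lists and report the differences."""
    return {
        "missing": [c for c in expected if c not in actual],
        "unexpected": [c for c in actual if c not in expected],
        # True only when the same columns arrive in a different order.
        "reordered": sorted(expected) == sorted(actual) and expected != actual,
    }


sample = "order_id,amount,currency\n1,10.50,EUR\n"
report = diff_schema(EXPECTED_COLUMNS, read_header(sample))
print(report)
```

A non-empty `missing` or `unexpected` list is a natural trigger for rejecting the file or notifying its owner before any processing starts.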

Once we have defined the logistics and know what the input and the desired output will be, we can move on to the next step: defining how to process the data. This covers cleaning the data, creating aggregations where needed, saving the results in the target database or storage, and deciding how to deliver the output to the target users.

We also need to know whether different teams already use any tools to analyze or visualize the data further. If users share one solution, it might be worth integrating it into the new process to simplify their onboarding.

The last step is about adding a monitoring layer. Who can take action if there's a problem with the source data, and how should we notify the analysts? How can we check data quality? What should we do to avoid human error in a manual process? We should add metrics reporters to our application and write queries that detect incorrect records or records whose data is too varied. We can then create alerts and dashboards based on the findings.
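As a rough illustration of detecting incorrect records, the sketch below validates rows one by one and separates the rejected ones so they can be reported. The column names (`order_id`, `order_date`, `amount`) and the rules themselves are assumptions made for this example; real checks depend on the agreed schema.

```python
from datetime import datetime
from decimal import Decimal, InvalidOperation


def validate_row(row):
    """Return a list of problems found in one row (empty list means valid)."""
    problems = []
    if not (row.get("order_id") or "").strip():
        problems.append("order_id is empty")
    try:
        datetime.strptime(row.get("order_date") or "", "%Y-%m-%d")
    except ValueError:
        problems.append("order_date is not in YYYY-MM-DD format")
    try:
        # Decimal accepts "NaN" and "Infinity", so check finiteness as well.
        if not Decimal(row.get("amount") or "").is_finite():
            problems.append("amount is not a finite number")
    except InvalidOperation:
        problems.append("amount is not a number")
    return problems


def split_rows(rows):
    """Split rows into valid ones and rejected ones with their problems."""
    valid, rejected = [], []
    for number, row in enumerate(rows, start=1):
        problems = validate_row(row)
        if problems:
            rejected.append({"row_number": number, "problems": problems})
        else:
            valid.append(row)
    return valid, rejected


rows = [
    {"order_id": "1", "order_date": "2023-01-15", "amount": "10.50"},
    {"order_id": "", "order_date": "15/01/2023", "amount": "ten"},
]
valid, rejected = split_rows(rows)
print(len(valid), "valid;", len(rejected), "rejected")
```

The `rejected` list is the kind of output that can feed a metric, an alert or a dashboard, so analysts see which row failed and why.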

The multistage process

A public cloud such as Google Cloud Platform helps companies improve their data pipelines and move quickly from local Excel development to scalable tools. It makes the work faster and more efficient, and it greatly reduces human errors and data formatting problems.

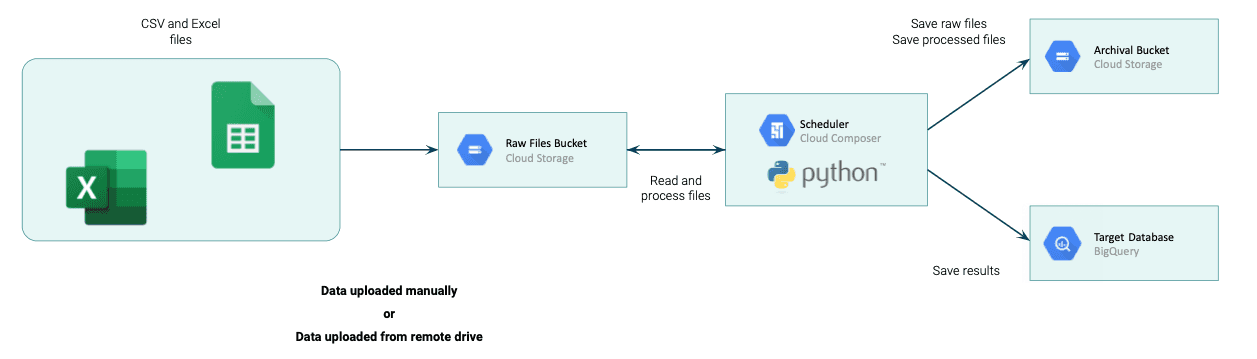

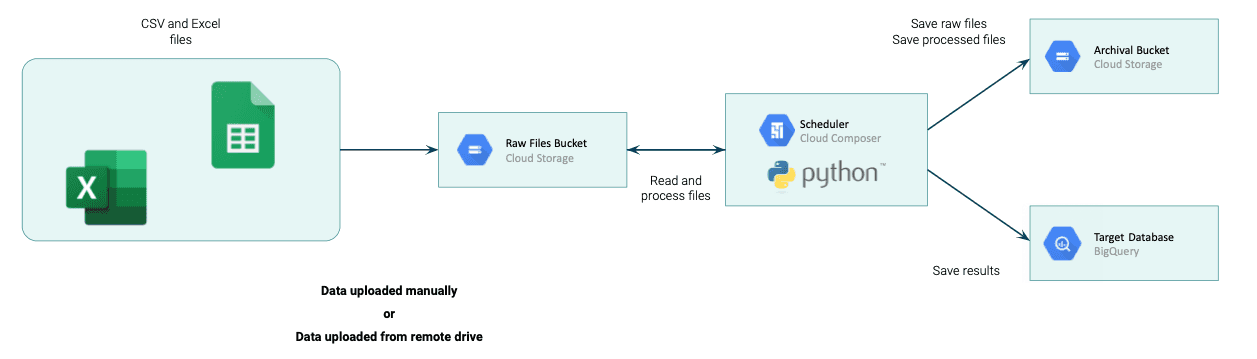

The first step is data ingestion. A good place to store raw, unprocessed data is Google Cloud Storage. Users can upload data there directly, or a sync script can copy files between remote drives and Cloud Storage. This is where process alignment and the integration of data from multiple sources begin.
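A minimal upload script might look like the sketch below. It assumes the `google-cloud-storage` client library is installed and that Application Default Credentials are configured; the `raw/<source>/<date>/<file>` naming convention and the function names are our own illustrative choices.

```python
from datetime import date
from pathlib import Path


def build_object_name(source, local_path, upload_date):
    """Build an object name such as 'raw/sales/2023-01-15/report.xlsx'."""
    return f"raw/{source}/{upload_date.isoformat()}/{Path(local_path).name}"


def upload_raw_file(bucket_name, source, local_path):
    """Upload one local file to the raw-data bucket and return its gs:// URI."""
    # Imported here so the naming helper above works without the library.
    from google.cloud import storage

    object_name = build_object_name(source, local_path, date.today())
    client = storage.Client()  # uses Application Default Credentials
    blob = client.bucket(bucket_name).blob(object_name)
    blob.upload_from_filename(str(local_path))
    return f"gs://{bucket_name}/{object_name}"


print(build_object_name("sales", "exports/report.xlsx", date(2023, 1, 15)))
```

Keeping the source name and upload date in the object name makes it easier to trace a processed record back to the exact file it came from.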

For data processing pipelines, we can choose from multiple solutions. Because use cases differ from project to project, the best way is usually to write custom Python scripts to process the data. The scripts themselves can be scheduled by tools like Google Cloud Composer (managed Apache Airflow), self-managed Apache Airflow, Google Cloud Tasks, Google Cloud Scheduler or even a mix of Cloud Pub/Sub with Cloud Functions.
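To give a feel for what such a custom script can contain, here is a small sketch of one processing step that aggregates a CSV export into daily totals using only the standard library. The column names `order_date` and `amount` are assumptions for this example, and the function expects rows that have already passed validation.

```python
import csv
import io
from collections import defaultdict
from decimal import Decimal


def aggregate_daily_totals(csv_text):
    """Sum the 'amount' column per 'order_date' and return rows sorted by date."""
    totals = defaultdict(Decimal)  # missing keys start at Decimal('0')
    for row in csv.DictReader(io.StringIO(csv_text)):
        totals[row["order_date"].strip()] += Decimal(row["amount"].strip())
    return [
        {"order_date": day, "total_amount": str(total)}
        for day, total in sorted(totals.items())
    ]


raw = (
    "order_id,order_date,amount\n"
    "1,2023-01-15,10.50\n"
    "2,2023-01-15,4.50\n"
    "3,2023-01-16,7.25\n"
)
for line in aggregate_daily_totals(raw):
    print(line)
```

`Decimal` is used instead of `float` so that monetary values are summed without binary rounding errors.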

In the example scenario, we use Composer with Python scripts executed on Composer's Kubernetes pods, which is the most flexible solution and can easily be extended in the future.

As the final part of the CSV and XLSX processing platform, we need to load the processed data somewhere. The right target depends on the exact use case, but the most common cases can be solved by loading the data into BigQuery, which works well as a data warehouse, can serve as the engine for Business Intelligence and performs well on analytical queries.
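Loading a processed CSV file from Cloud Storage into BigQuery could be sketched as follows. The example assumes the `google-cloud-bigquery` client library and configured credentials; `TARGET_SCHEMA`, the function names and the table layout are hypothetical. The schema is passed explicitly instead of being autodetected, which avoids the format-guessing problems mentioned at the beginning.

```python
# Hypothetical target schema as (column name, BigQuery type) pairs.
TARGET_SCHEMA = [
    ("order_date", "DATE"),
    ("total_amount", "NUMERIC"),
]


def build_source_uri(bucket_name, object_name):
    """Return the gs:// URI of a processed file in Cloud Storage."""
    return f"gs://{bucket_name}/{object_name}"


def load_csv_to_bigquery(source_uri, table_id):
    """Append one CSV file to a BigQuery table and return the table's row count."""
    # Imported here so the helpers above work without the client library.
    from google.cloud import bigquery

    client = bigquery.Client()
    job_config = bigquery.LoadJobConfig(
        source_format=bigquery.SourceFormat.CSV,
        skip_leading_rows=1,  # the first row holds the column names
        schema=[bigquery.SchemaField(name, kind) for name, kind in TARGET_SCHEMA],
        write_disposition=bigquery.WriteDisposition.WRITE_APPEND,
    )
    load_job = client.load_table_from_uri(source_uri, table_id, job_config=job_config)
    load_job.result()  # wait for completion; raises if the job failed
    return client.get_table(table_id).num_rows


print(build_source_uri("example-bucket", "processed/daily_totals.csv"))
```

Here `table_id` is expected in the `project.dataset.table` form, and `example-bucket` is only a placeholder name.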

Last but not least, everything should be managed as Infrastructure as Code. A mix of Terraform and CI/CD tools like GitHub Actions or GitLab CI makes this fast and keeps the infrastructure easy to manage. If you want to read more about Terraform, check our blog post “Terraform your Cloud Infrastructure”.

We also need to mention the monitoring layer. It's powered by Cloud Monitoring, Cloud Logging and BigQuery tables in which we can store information about potential errors in the source data. These errors can be visualized in Data Studio or a similar tool, while alerts can be sent via email to the stakeholders, who can then take action.

Automate work and simplify the processes with Google Cloud

Another benefit of this solution is that it's relatively inexpensive. It delivers high availability and can easily be scaled up, depending on the company's needs and the complexity of the tasks the processing platform must handle next. This is an example of how we can quickly move from local Excel development to an automated cloud environment to simplify data management and start data-driven development in the cloud.

Would you like to turn your spreadsheet files into automated data pipelines with Google Cloud? Let’s discuss it. Contact us!